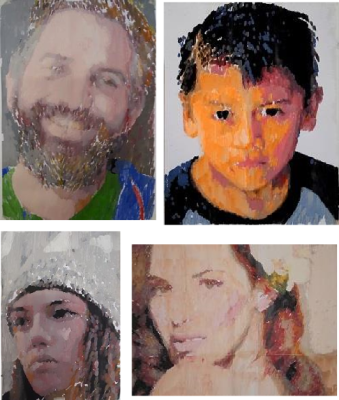

Although the market for hand-drawn portraits largely collapsed following the invention of photography, there’s something magical about watching an artist create a lifelike image using nothing but a pencil, some paper, and their fine motor skills. Watching a machine do the same is similarly captivating, though the end result is often less impressive. To address this, [Joris Wegner] and [Felix Fisgus] created the Pankraz Piktograph, which seems to do a pretty good job at capturing faces. They were inspired by classic picture-drawing automatons, and made a 21st-century version to be used in museums or at events like trade shows.

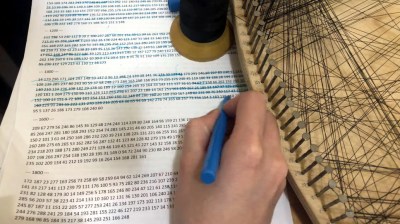

The operation of the Piktograph is very simple: you stand in front of the machine, look into the camera and take a selfie. If you like what you see, the robot will then begin to draw your portrait on a piece of paper. It does this using two human-like arms which are made from aluminium and driven by two stepper motors. An ordinary ballpoint pen is held in a spring-loaded carrier, which provides just enough pen-to-paper pressure to reliably draw lines without lifting off or scratching the paper. We can’t help but be impressed with the overall look of the machine: with a sleek, powder-coated aluminium case and a stainless steel stand, it’s a work of art in itself.

Inside, the Piktograph is powered by a Raspberry Pi 3, which runs a rather sophisticated algorithm to generate a vector image that doesn’t take too long to draw, yet still results in a recognizable image of the subject. The makers’ thesis goes into quite some detail to explain the process, which uses Canny edge detection to create an outline drawing, then fills in the empty bits to create bright and dark areas. A certain amount of noise and wiggliness is added to the lines to give them a more “handmade” feel, and the resulting drawing is divided into continuous lines for efficient drawing by the plotter.
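To make the last two steps a bit more concrete, here is a minimal Python sketch of what adding wiggle and ordering strokes for a plotter could look like. This is not the makers' code, and their thesis may well do it differently: the function names (`wiggle`, `order_strokes`, `pen_up_travel`), the jitter amplitude, and the greedy nearest-neighbour ordering are all assumptions chosen for illustration. It picks up after edge detection, assuming the drawing already exists as a list of polylines.

```python
import math
import random
from typing import List, Tuple

Point = Tuple[float, float]
Stroke = List[Point]


def wiggle(stroke: Stroke, amplitude: float = 0.3, seed: int = 0) -> Stroke:
    """Jitter interior points by up to `amplitude` per axis (assumed value).

    Endpoints stay fixed so neighbouring strokes still meet where they did.
    """
    if len(stroke) < 3:
        return list(stroke)
    rng = random.Random(seed)
    jittered = [stroke[0]]
    for x, y in stroke[1:-1]:
        jittered.append((x + rng.uniform(-amplitude, amplitude),
                         y + rng.uniform(-amplitude, amplitude)))
    jittered.append(stroke[-1])
    return jittered


def order_strokes(strokes: List[Stroke], start: Point = (0.0, 0.0)) -> List[Stroke]:
    """Greedy nearest-neighbour ordering (an illustrative heuristic).

    Repeatedly picks the stroke whose nearer end is closest to the pen,
    reversing it when its tail is the closer end.
    """
    remaining = [list(s) for s in strokes if s]
    pen = start
    ordered: List[Stroke] = []
    while remaining:
        best_i, best_rev, best_d = 0, False, math.inf
        for i, s in enumerate(remaining):
            d_head = math.dist(pen, s[0])
            d_tail = math.dist(pen, s[-1])
            if d_head < best_d:
                best_i, best_rev, best_d = i, False, d_head
            if d_tail < best_d:
                best_i, best_rev, best_d = i, True, d_tail
        stroke = remaining.pop(best_i)
        if best_rev:
            stroke.reverse()
        ordered.append(stroke)
        pen = stroke[-1]
    return ordered


def pen_up_travel(strokes: List[Stroke], start: Point = (0.0, 0.0)) -> float:
    """Total distance the pen moves while lifted, drawing strokes in order."""
    total, pen = 0.0, start
    for s in strokes:
        total += math.dist(pen, s[0])
        pen = s[-1]
    return total


# Made-up example strokes, in arbitrary plotter units.
strokes = [
    [(10.0, 0.0), (10.0, 5.0)],
    [(0.0, 0.0), (5.0, 0.0)],
    [(10.0, 6.0), (6.0, 0.0)],
]
ordered = order_strokes(strokes)
handmade = [wiggle(s, seed=i) for i, s in enumerate(ordered)]
```

Comparing `pen_up_travel(strokes)` with `pen_up_travel(ordered)` shows how much idle movement the reordering saves. A greedy pass like this is not optimal, but it is cheap enough to run on a small computer such as a Raspberry Pi.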

We’ve seen several types of specialized art robots before, capable of drawing portraits with a pen, painting them, or even using an Etch-a-Sketch, but [Joris] and [Felix]’s creation seems to win on speed, workmanship, and the quality of the end result. Video embedded after the break.
