[P B Richards] and [Aaron Flin] were bemoaning the resource hunger of modern JavaScript environments and planned to produce a system that was much stingier with memory and CPU, that would fit better on lower-end platforms. Think Nginx, NodeJS, and your flavour of database and how much resource that all needs to run properly. Now try wedge that lot onto a Raspberry Pi Zero. Well, they did, creating Rampart: a JavaScript-based complete stack development environment.

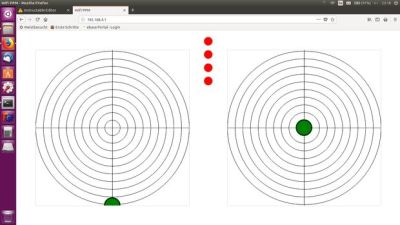

The usual web applications have lots of tricks to optimise for speed, but according to the developers, Rampart is still pretty fast. Its reason for existence is purely about resource usage, and looking at a screen grab, the Rampart HTTP server weighs in at less than 10 MB of RAM. It appears to support a decent slew of technologies, such as HTTPS, WebSockets, SQL search, REDIS, as well as various utility and OS functions, so shouldn’t be so lightweight as to make developing non-trivial applications too much work. One interesting point they make is that in making Rampart so frugal when deployed onto modern server farms it could be rather efficient. Anyway, it may be worth a look if you have a reasonable application to wedge onto a small platform.

We’ve seen many JavaScript runtimes over the years, like this recent effort, but there’s always room for one more.