Piezo elements have the useful property of being bidirectional; that is, they move when you apply electricity to them, but they also generate electricity when you move them. [Carl] takes advantage of this fact to make buttons that can provide haptic feedback. You can see a video of his efforts below the break.

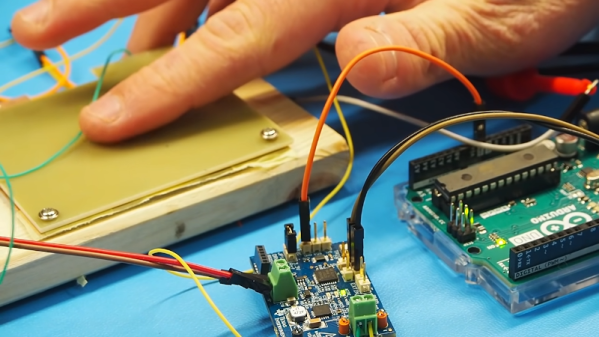

He made two versions of the buttons. One uses a 3D-printed housing and the other uses a 3D-printed spacer in a sandwich configuration. It took a few tries to get it right, as you’ll see. The elements both accept and produce relatively high voltages, so the bulk of the work was adapting the voltages back and forth. In fact, he even managed to fry his CPU chip with some of the higher voltages involved.

We’d probably look for an easier way to sense the button push, since it seems like a good bit of circuitry just to do that. But the whole circuit provides an input button, haptic feedback, and the option of using the buzzer as a buzzer, so at least it is relatively economical if you need all of those features.