Once upon a time, NASA-JPL put out a design for an open-source rocker-bogie rover. It was an impressive and capable thing, albeit a little expensive and difficult to build. Now, the open source community has dived in and refreshed the design, making it cheaper and more accessible than ever before.

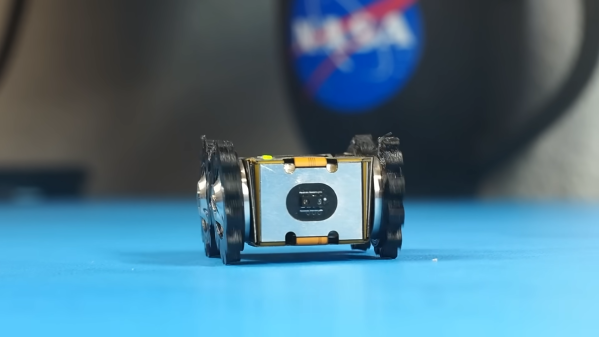

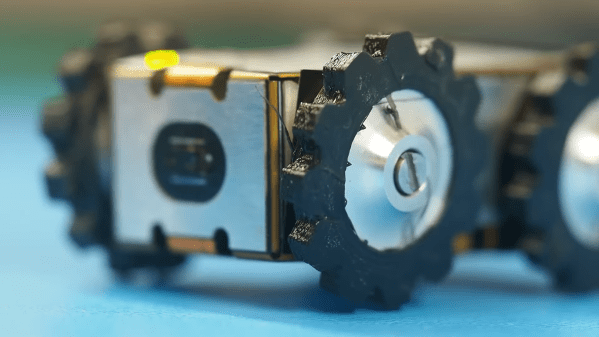

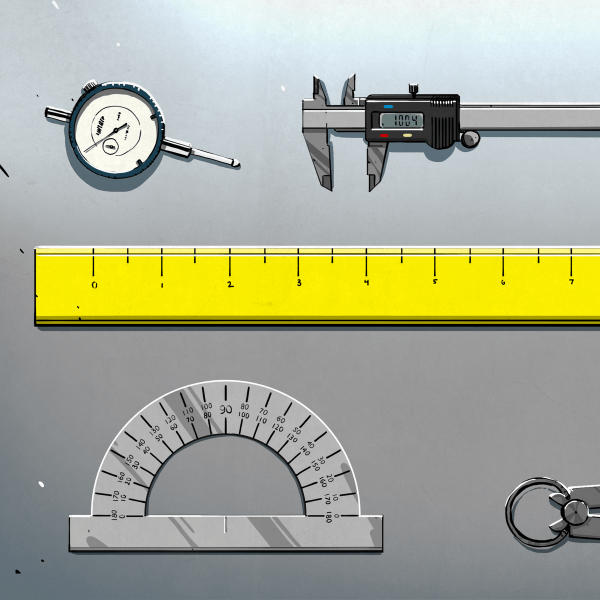

Many parts of the original design have become prohibitively expensive, gone out of stock, or been discontinued entirely. The new version, developed by the community that formed around the project, relies on off-the-shelf parts to bring costs down. Where the original design could cost as much as $3000 to build, the new model cuts that bill nearly in half. It also eliminates the need for custom fabrication: no machined or 3D-printed parts are required.

Other changes include dropping the rover’s head from the base model, since many applications don’t need it. The update also improves protection against fluid and dust ingress and makes the rover easier to service. The entire rover model can be loaded in Onshape for anyone who wants to inspect it or make their own modifications.

Parts lists are available on GitHub for anyone who wants to build their own. Alternatively, check out the original design to learn more. Video after the break.
