Ever seen a restaurant where they display fake models of the food on the menu? We never thought much about how shokuhin sampuru — the Japanese name — were made until we watched [Process X]’s video showing a 71-year-old artist creating food models. We aren’t sure what we — or you — would do with this information, but it is a striking process, and there must be something you could do with it. We suggest turning on the English captions, but you’d probably enjoy watching the unusual craftsmanship even with no words.

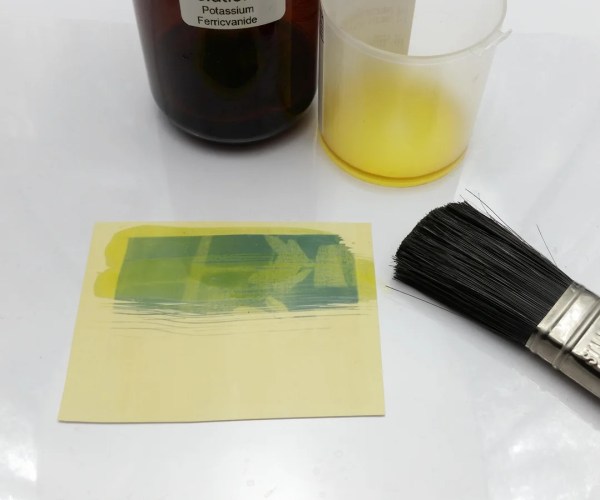

In years past, the food models were primarily made from wax, but since the 1980s, it is more common to use polyvinyl chloride, silicone, and resin. While some factories produce the models, sometimes with a mold, individual craftsmen like the one in the video still make up the largest part of the market.

We aren’t sure, but we think the material in the video is wax. We couldn’t help but think that some of this could have been 3D printed, but even with the finest resins and resolution, it probably wouldn’t be quite as artistic. We think wax is largely underutilized in today’s tech, but there are some places it still shows up.