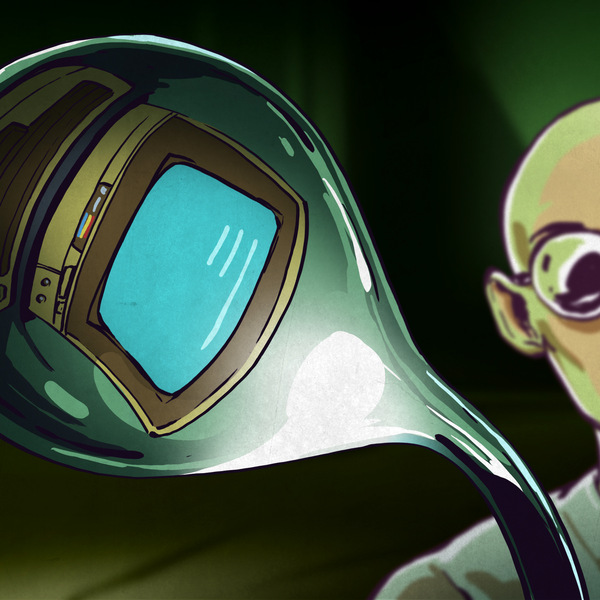

Leap Motion just dropped what may be the biggest tease in Augmented and Virtual Reality since Google Cardboard. The North Star is an augmented reality head-mounted display that boasts some impressive specs:

- Dual 1600×1440 LCDs

- 120 Hz refresh rate

- 100-degree FOV

- Cost under $100 (in volume)

- Open Source Hardware

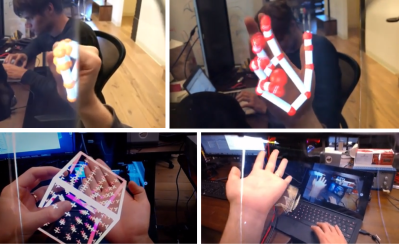

- Built-in Leap Motion camera for precise hand tracking

Yes, you read that correctly. The North Star will be open source hardware. Leap Motion plans to release all the hardware information next week.

Now that we’ve got you excited, let’s mention what the North Star is not: it’s not a consumer device. Leap Motion’s idea here was to create a platform for developing Augmented Reality experiences, specifically the user interface and interaction aspects. To that end, they built the best head-mounted display they could on a budget. The company started with standard 5.5″ cell phone displays, which made for an incredibly high resolution but low frame rate (50 Hz) device. It was also large and completely impractical.

The current iteration of the North Star uses much smaller displays, which results in a higher frame rate and a better overall experience. The secret sauce seems to be Leap’s use of ellipsoidal mirrors to achieve a large FOV while maintaining focus.

We’re excited, but also a bit wary of the $100 price point. Leap Motion is quick to note that the price is “in volume”. They also mention using diamond-tipped tooling in a vibration-isolated lathe to grind the mirrors down. If Leap hasn’t invested in some injection molding, those parts are going to make the whole thing expensive. Keep your eyes on the blog for more information as soon as we have it!