If your old camera’s WiFi picture upload feature breaks, what do you do? Begrudgingly get a new one? Well, if you’re like [Ge0rg], you break out Ghidra and find the culprit. He’s been hacking on Samsung’s connected cameras for a fair bit now, and we’ve covered his adventures hacking on Samsung’s Linux-powered camera series throughout the last decade, from getting root on them for fun, to deep dives into the series. Now, it was time to try and fix a problem with one particular camera, Samsung WB850F, which had its picture upload feature break at some point.

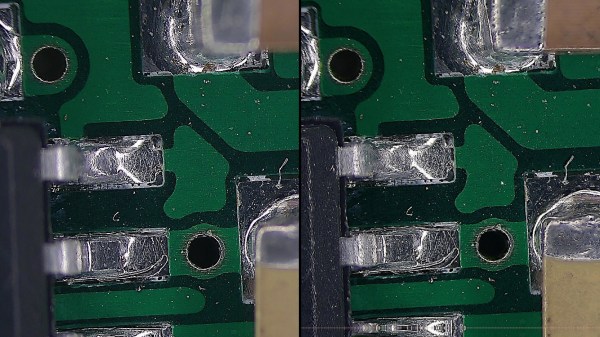

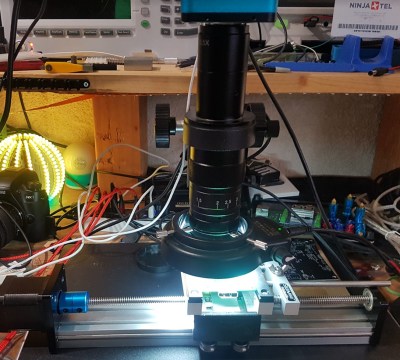

[Ge0rg] grabbed a firmware update .zip, and got greeted by a bunch of compile-time debug data as a bonus, making the reverse-engineering journey all that more tempting. After figuring out the update file partition mapping, loading the code into Ghidra, and feeding the debug data into it to get functions to properly parse, he got to the offending segment, and eventually figured out the bug. Turned out, a particularly blunt line of code checking the HTTP server response was confused by s in https, and a simple spoof server running on a device of your choice with a replacement hosts file is enough to have the feature work again, well, paired with a service that spoofs the long-shutdown Samsung’s picture upload server.

Turned out, a bunch more cameras from Samsung had the same check misfire for them, which made this reverse-engineering journey all that more fruitful. Once again, Ghidra skills save the day.