How often does this happen to you? You find yourself describing something that happened in a game to someone, and they’re not sure they know what part of the map you’re talking about, or they’ve never gotten that far. Wouldn’t it be cool to make a bookmark in a video game so you can jump right to the beginning of the action and show your friend what you mean using the actual game?

That’s the idea behind [Joël Franusic] and [Adam Smith]’s fantastic Playable Quotes for Game Boy — clip-making that creates a 4-D nugget of gameplay that can either be viewed as a video, or played live within the bounds of the clip. The system is built on a modified version of the PyBoy emulator.

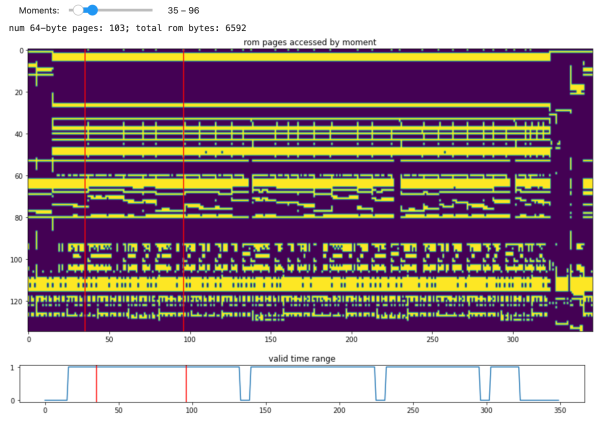

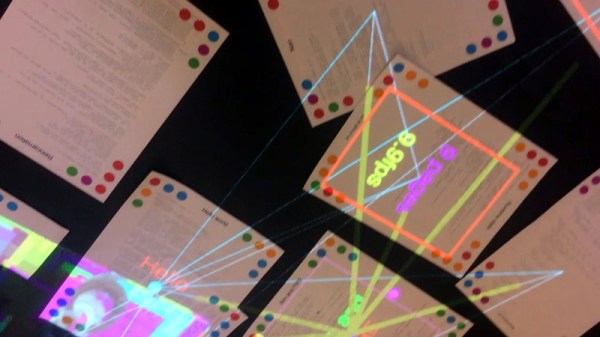

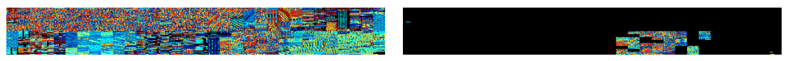

Basically, a Playable Quote is made up of a save state and all that entails, plus a slice of the game’s ROM that includes just enough game data to recreate an interactive clip. Everything is zipped up and steganographically encoded into a PNG file. Here’s a Tetris quote you can play (or watch) right now; you might recognize it from the post thumbnail. You’ll find the others on the games site, which allows people to create, share, and build on each other’s work.
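To get a feel for the "zip it up and hide it in an image" step, here is a minimal sketch in Python. It is not the project's actual format, which isn't spelled out here. It assumes a simple least-significant-bit scheme with a 4-byte length prefix, and it works on a raw buffer of pixel bytes rather than a real PNG so that it runs with only the standard library. The function names and the archive member names (`state.bin`, `rom_slice.bin`) are made up for illustration.

```python
import io
import zipfile


def pack_quote(save_state: bytes, rom_slice: bytes) -> bytes:
    """Bundle a save state and a ROM slice into one in-memory zip archive."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", zipfile.ZIP_DEFLATED) as zf:
        # Member names are hypothetical, chosen only for this sketch.
        zf.writestr("state.bin", save_state)
        zf.writestr("rom_slice.bin", rom_slice)
    return buf.getvalue()


def embed(pixels: bytes, payload: bytes) -> bytearray:
    """Hide payload in the lowest bit of each pixel byte (assumed LSB scheme)."""
    # Prefix the payload with its length so the reader knows where to stop.
    framed = len(payload).to_bytes(4, "big") + payload
    if len(framed) * 8 > len(pixels):
        raise ValueError("carrier image is too small for this payload")
    out = bytearray(pixels)
    for i, byte in enumerate(framed):
        for bit in range(8):
            idx = i * 8 + bit
            # Clear the lowest bit, then set it to the next payload bit (MSB first).
            out[idx] = (out[idx] & 0xFE) | ((byte >> (7 - bit)) & 1)
    return out


def extract(pixels: bytes) -> bytes:
    """Recover a payload written by embed()."""

    def read(offset: int, count: int) -> bytes:
        data = bytearray()
        for i in range(count):
            value = 0
            for bit in range(8):
                value = (value << 1) | (pixels[(offset + i) * 8 + bit] & 1)
            data.append(value)
        return bytes(data)

    length = int.from_bytes(read(0, 4), "big")
    return read(4, length)


if __name__ == "__main__":
    # Stand-in for decoded image data: 16 KiB of arbitrary pixel bytes.
    carrier = bytearray(range(256)) * 64
    quote = pack_quote(b"fake save state", b"fake ROM bytes")
    stego = embed(carrier, quote)
    assert extract(stego) == quote
```

Because only the lowest bit of each byte changes, the carrier image looks the same to the eye, which is the appeal of shipping a clip as an ordinary-looking PNG. With a real image you would decode the PNG to pixel bytes first and re-encode it losslessly afterwards.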

There’s so much more that can be done with this type of immersive and interactive tool outside the realm of games, and we’re excited to see where this leads and what people do with it.

Haven’t heard of PyBoy before? Let us introduce you.