If you want to analyze an antenna, you can use simulation software or you can build an antenna and make measurements. [All Electronics Channel] does both and shows you how you can do it, too, in the video below.

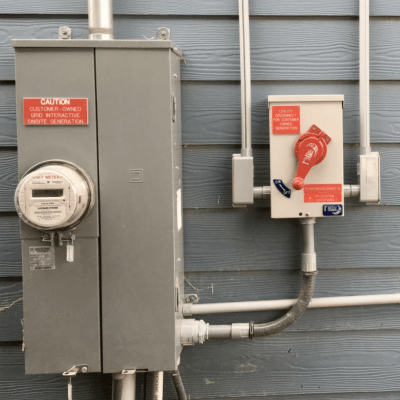

The antenna in question is a loop antenna. He uses a professional VNA (Vector Network Analyzer) but you could get away with a hobby-grade VNA, too. The software for simulation is 4NEC2.

The VNA shows the electrical characteristics of the antenna, which is one of the things you can pull from the simulation software. The simulation gives you a lot of other information as well. To get some of that in the real world, you'd need a field strength meter or something similar.
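If you are wondering what those electrical characteristics look like in numbers, here is a minimal Python sketch of the standard transmission-line formulas that relate a measured or simulated feed-point impedance to reflection coefficient, return loss, and VSWR. It is not taken from the video: the function names are made up for illustration, and the 50 Ω reference impedance is an assumption (it is the usual default for a VNA).

```python
import math


def reflection_coefficient(z_load: complex, z0: float = 50.0) -> complex:
    """Reflection coefficient (S11) of a load against reference impedance z0.

    z0 = 50 ohms is an assumption; use your system's reference impedance.
    """
    return (z_load - z0) / (z_load + z0)


def vswr(gamma: complex) -> float:
    """Voltage standing wave ratio from the reflection coefficient."""
    mag = abs(gamma)
    if mag >= 1.0:
        # Total reflection: VSWR is unbounded.
        return math.inf
    return (1 + mag) / (1 - mag)


def return_loss_db(gamma: complex) -> float:
    """Return loss in dB (positive number; larger means a better match)."""
    mag = abs(gamma)
    if mag == 0:
        # Perfect match: nothing is reflected.
        return math.inf
    return -20 * math.log10(mag)


if __name__ == "__main__":
    # Hypothetical feed-point impedance, purely for illustration.
    z = complex(100, 0)
    g = reflection_coefficient(z)
    print(f"|S11| = {abs(g):.3f}")
    print(f"VSWR = {vswr(g):.2f}")
    print(f"Return loss = {return_loss_db(g):.2f} dB")
```

Feeding the same functions an impedance from the simulation and one from the VNA at the same frequency is a quick way to see how far the two disagree.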

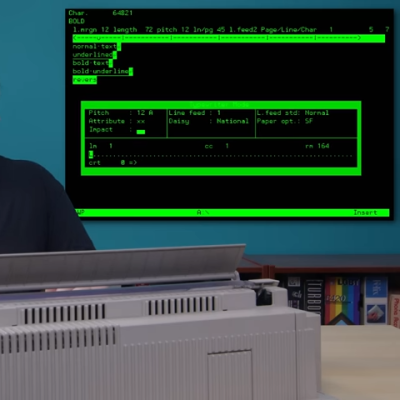

The antenna simulation software is a powerful engine and 4NEC2 gives you an easy way to use it with a GUI. You can see all the graphs and plots easily, too. Unfortunately, it is Windows software, but we hear it will run under Wine.

The practical measurement is a little different from the simulation, largely because the simulation assumes ideal conditions and the real antenna has non-ideal elements. [Grégory] points out that changing simulation parameters is a great way to develop intuition about antennas.

Want to dive into antennas? We can help with that. Or, you can start with a simple explanation.


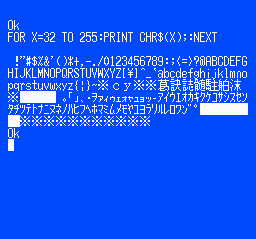

removable cartridge, complete with a BASIC interpreter and a collection of graphical editor tools for game creation. even a map editor. We think inputting BASIC code via a gamepad would get old fast, but it would work a little better for graphical editing.
