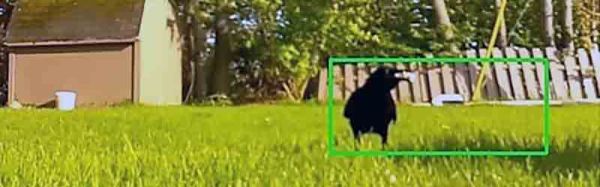

[Scott] has a neat little closet in his carport that acts as a shelter and rest area for their outdoor cat, Rory. She has a bed and food and water, so when she’s outside on an adventure she has a place to eat and drink and nap in case her humans aren’t available to let her back in. However, [Scott] recently noticed that they seemed to be going through a lot of food, and they couldn’t figure out where it was going. Kitty wasn’t growing a potbelly, so something else was eating the food.

So [Scott] rolled up his sleeves and hacked together an OpenCV project with a FLIR Boson to try to catch the thief. To reduce the amount of footage to go through, the system only captures video when it detects movement or a large change in the scene. It then takes snapshots, timestamps them, and optionally records a feed of the video. [Scott] originally started writing the system in Python, but it couldn't keep up and dropped frames when motion was detected. Eventually, he rewrote the prototype in C++, which resulted in much better performance!
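
To make the "only capture when something changes" idea concrete, here is a minimal frame-differencing sketch. It is not [Scott]'s code: it uses plain Python lists instead of OpenCV, and the function names and thresholds (`pixel_delta`, `min_fraction`) are assumptions picked for illustration. Frames are assumed to be same-sized grids of 0-255 intensities.

```python
from typing import List

# A grayscale frame as rows of 0-255 intensities (an assumed representation).
Frame = List[List[int]]


def changed_fraction(prev: Frame, curr: Frame, pixel_delta: int = 25) -> float:
    """Return the fraction of pixels that changed by more than pixel_delta."""
    total = 0
    changed = 0
    for prev_row, curr_row in zip(prev, curr):
        for p, c in zip(prev_row, curr_row):
            total += 1
            if abs(p - c) > pixel_delta:
                changed += 1
    return changed / total if total else 0.0


def motion_detected(prev: Frame, curr: Frame,
                    pixel_delta: int = 25, min_fraction: float = 0.02) -> bool:
    """True when enough of the scene changed between two frames."""
    return changed_fraction(prev, curr, pixel_delta) >= min_fraction


def frames_with_motion(frames: List[Frame],
                       pixel_delta: int = 25,
                       min_fraction: float = 0.02) -> List[int]:
    """Indices of frames that differ enough from the frame before them."""
    hits = []
    for i in range(1, len(frames)):
        if motion_detected(frames[i - 1], frames[i], pixel_delta, min_fraction):
            hits.append(i)
    return hits
```

A real system would timestamp and save the frames at the returned indices. Comparing every pixel in an interpreted loop like this is slow on full-size video, which is in keeping with the dropped frames that pushed the prototype to C++.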

Five years later, I joined a hackerspace, and eventually found out that its CCTV cameras, while being quite visually prominent, stopped functioning a long time ago. At that point, I was in a position to do something about it, and I built an entire CCTV network around a software package called

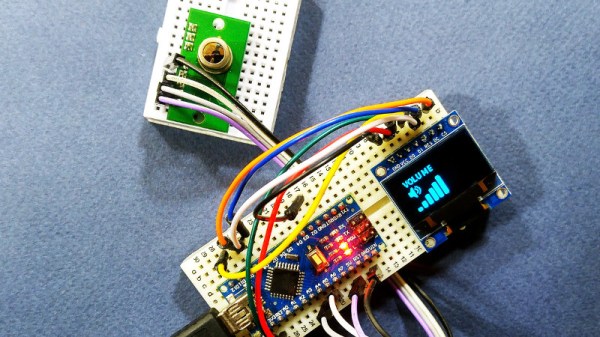

The project uses the TPA81 8-pixel thermopile array, which detects changes in heat levels at 8 adjacent points. An Arduino reads these temperature points over I2C, and a simple thresholding function then detects the movement of the fingers. These movements are used to do a number of things, including turning the volume up or down as shown in the image alongside.
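
As a rough illustration of thresholding on an 8-pixel strip, the sketch below finds the warmest pixel in each reading and checks which way it travels over time. It is written in Python rather than Arduino code, it is not the project's implementation, and the names, the `margin` above ambient, the `min_travel` distance, and the mapping of direction to "up" and "down" are all assumptions.

```python
from typing import List, Optional, Sequence


def hottest_pixel(pixels: Sequence[float], ambient: float,
                  margin: float = 3.0) -> Optional[int]:
    """Index of the warmest pixel, or None if nothing exceeds ambient + margin."""
    idx = max(range(len(pixels)), key=lambda i: pixels[i])
    return idx if pixels[idx] > ambient + margin else None


def swipe_direction(readings: Sequence[Sequence[float]], ambient: float,
                    margin: float = 3.0, min_travel: int = 3) -> Optional[str]:
    """Classify a series of 8-pixel readings as an "up" or "down" swipe.

    The warm spot (assumed to be a finger) must move at least min_travel
    pixels between its first and last sighting to count as a gesture.
    """
    positions: List[int] = []
    for pixels in readings:
        pos = hottest_pixel(pixels, ambient, margin)
        if pos is not None:
            positions.append(pos)
    if len(positions) < 2:
        return None
    travel = positions[-1] - positions[0]
    if travel >= min_travel:
        return "up"
    if travel <= -min_travel:
        return "down"
    return None
```

The caller would then map `"up"` and `"down"` to whatever action is wanted, such as volume changes. The `min_travel` check is there so that a hand held still in front of the sensor does not register as a gesture.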
