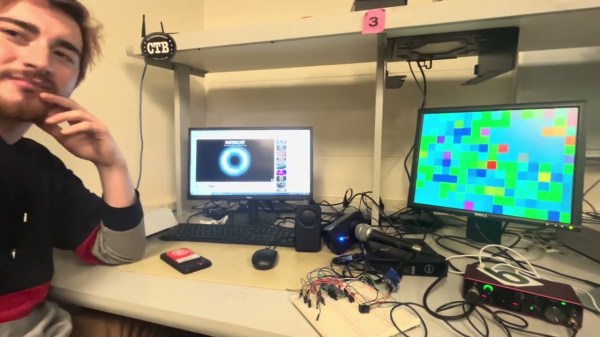

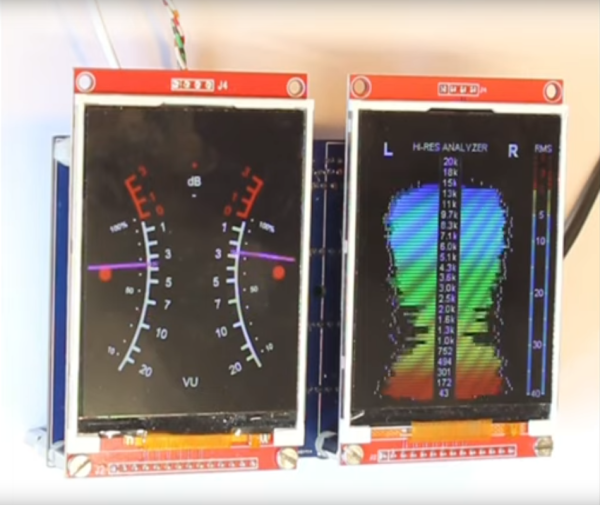

Visualizers used to be very much in vogue, something you’d gasp at in amazement when you’d fire up Winamp or Windows Media Player. They’re largely absent from our modern lives, but [Arnov Sharma] is bringing them back. After all, who doesn’t want a cool visualizer hanging on the wall in their living room?

The build is based around the Raspberry Pi Pico 2. It’s paired with a small microphone hooked up to a MAX9814 chip, which amplifies the signal and offers automatic gain control to boot. This is a particularly useful feature, which allows the microphone to pick up very soft and very loud sounds without the output clipping. The Pi Pico 2 picks up the signals from the mic, and then displays the waveforms on a 64 x 32 HUB75 RGB matrix. It’s a typical scope-type display, which allows one to visualize the sound waves quite easily. [Arnov] demonstrates this by playing tones on a guitar, and it’s easy to see the corresponding waveforms playing out on the LED screen.
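To make the scope-type display concrete, here is a minimal sketch of how a buffer of microphone samples might be mapped onto a 64 x 32 grid, one lit pixel per column. It is not [Arnov]'s firmware, which isn't described here. The function names `sample_to_row` and `render_scope` are hypothetical, and the sketch assumes unsigned 16-bit samples (the range MicroPython's `ADC.read_u16()` returns) and a plain list-of-lists frame instead of a real HUB75 driver.

```python
WIDTH, HEIGHT = 64, 32   # matrix size mentioned in the article
FULL_SCALE = 65535       # assumption: unsigned 16-bit ADC samples


def sample_to_row(sample, height=HEIGHT, full_scale=FULL_SCALE):
    """Map one sample to a row index; row 0 is the top of the panel."""
    sample = max(0, min(full_scale, sample))  # clamp out-of-range input
    return (height - 1) - (sample * (height - 1)) // full_scale


def render_scope(samples, width=WIDTH, height=HEIGHT):
    """Return a height x width grid of 0/1 with one lit pixel per column."""
    frame = [[0] * width for _ in range(height)]
    step = max(1, len(samples) // width)  # decimate to one sample per column
    for x in range(width):
        i = x * step
        if i >= len(samples):
            break  # fewer samples than columns: leave the rest dark
        frame[sample_to_row(samples[i], height)][x] = 1
    return frame
```

On real hardware the returned grid would be handed to whatever HUB75 driver is in use; here it can simply be printed row by row to check the trace shape. A loud signal swings toward rows 0 and 31, while silence sits near the middle rows.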

It’s a fun project, and it’s wrapped up in a slick 3D printed housing. This turns the visualizer into a nice responsive piece of wall art that would suit any hacker’s decor. We’ve featured some other great visualizers before, too.