Virtual reality always seemed like a technology just out of reach, much like nuclear fusion, the flying car, or Linux on the desktop. It has been gaining steam over the last five years or so, though, with successful video games from a number of companies and adjacent technologies like augmented reality picking up momentum as well. Another sign that this technology might be here to stay is this virtual reality headset made for mice.

Continue reading “Mice Play In VR”

virtual reality: 132 Articles

Immersive Virtual Reality From The Humble Webcam

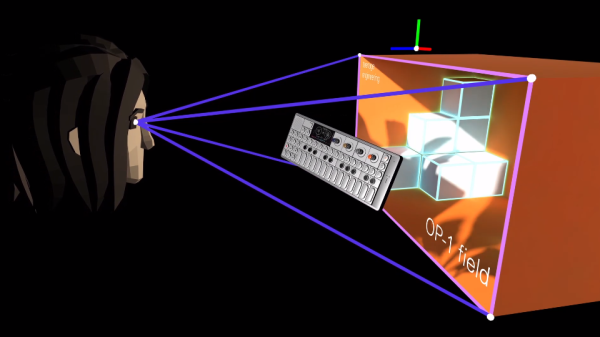

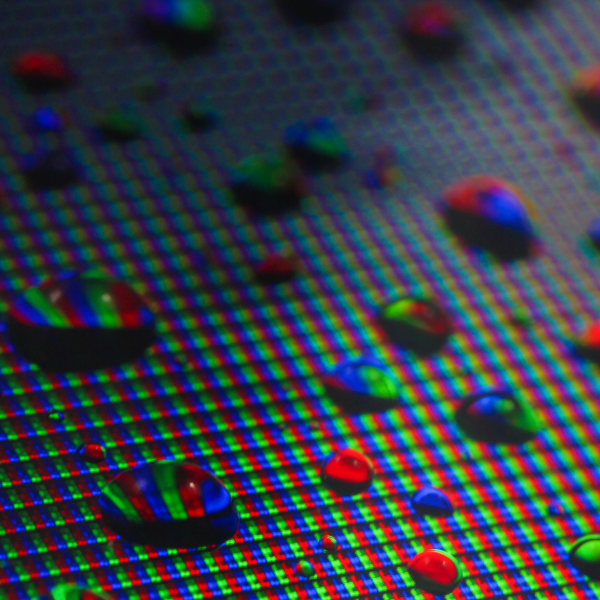

[Russ Maschmeyer] and Spatial Commerce Projects developed WonkaVision to demonstrate how 3D eye tracking from a single webcam can support rendering a graphical virtual reality (VR) display with realistic depth and space. Spatial Commerce Projects is a Shopify lab working to provide concepts, prototypes, and tools to explore the crossroads of spatial computing and commerce.

The graphical output provides a real sense of depth and three-dimensional space using an optical illusion that reacts to the viewer’s eye position. The eye position is used to render view-dependent images. The computer screen is made to feel like a window into a realistic 3D virtual space where objects beyond the window appear to have depth and objects before the window appear to project out into the space in front of the screen. The resulting experience is like a 3D view into a virtual space. The downside is that the experience only works for one viewer.
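The "window" effect described above is commonly achieved with an off-axis (asymmetric) projection: the view frustum is recomputed every frame so that its edges pass from the viewer's eye through the physical corners of the screen. The following is a minimal sketch of that idea, not WonkaVision's actual code. It assumes the screen lies in the plane z = 0, centered on the origin, with the eye position given in the same units as the screen size; the names `Frustum` and `off_axis_frustum` are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Frustum:
    """Frustum bounds measured on the near plane, plus near/far distances."""
    left: float
    right: float
    bottom: float
    top: float
    near: float
    far: float


def off_axis_frustum(eye, screen_w, screen_h, near=0.01, far=100.0):
    """Compute an asymmetric frustum for an eye looking at a screen.

    eye      -- (x, y, z) of the eye relative to the screen center;
                z is the distance in front of the screen and must be > 0.
    screen_w -- physical screen width (same units as eye).
    screen_h -- physical screen height (same units as eye).
    """
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("eye must be in front of the screen (z > 0)")

    # Project the screen edges, as seen from the eye, onto the near plane.
    scale = near / ez
    return Frustum(
        left=(-screen_w / 2 - ex) * scale,
        right=(screen_w / 2 - ex) * scale,
        bottom=(-screen_h / 2 - ey) * scale,
        top=(screen_h / 2 - ey) * scale,
        near=near,
        far=far,
    )


# Eye centered 0.5 m from a 0.6 m x 0.4 m screen: symmetric frustum.
centered = off_axis_frustum((0.0, 0.0, 0.5), 0.6, 0.4)

# Eye moved 0.1 m to the right: the frustum skews to the left.
shifted = off_axis_frustum((0.1, 0.0, 0.5), 0.6, 0.4)
```

The six values are what a glFrustum-style projection matrix takes as input. The virtual camera would also be moved to the eye position, so that objects at the screen plane stay pinned to the physical display while everything in front of or behind it shifts with parallax.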

Eye tracking is performed using Google’s MediaPipe Iris library, which relies on the fact that the horizontal iris diameter of the human eye is roughly constant, at about 11.7 mm (give or take 0.5 mm), for most people. Computer vision algorithms in the library use this geometric fact to estimate how far each iris is from the camera, in addition to locating and tracking it, without needing a depth sensor.
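To see why a known iris size is so useful, consider a simple pinhole camera model: an object of known physical size that appears smaller in the image must be farther away. The sketch below illustrates that arithmetic only. It is not MediaPipe's API; the function names are hypothetical, and the focal length and principal point are assumed to come from a camera calibration.

```python
IRIS_DIAMETER_MM = 11.7  # typical horizontal iris diameter cited above


def estimate_eye_distance_mm(iris_diameter_px, focal_length_px):
    """Distance from camera to iris using similar triangles (pinhole model)."""
    if iris_diameter_px <= 0 or focal_length_px <= 0:
        raise ValueError("pixel measurements must be positive")
    return focal_length_px * IRIS_DIAMETER_MM / iris_diameter_px


def eye_position_mm(iris_center_px, iris_diameter_px,
                    focal_length_px, principal_point_px):
    """Back-project the iris center to a 3D point in camera coordinates.

    Returns (x, y, z) in millimeters. Image y grows downward, so a
    positive y here means the eye is below the camera's optical axis.
    """
    z = estimate_eye_distance_mm(iris_diameter_px, focal_length_px)
    u, v = iris_center_px
    cx, cy = principal_point_px
    x = (u - cx) * z / focal_length_px
    y = (v - cy) * z / focal_length_px
    return (x, y, z)


# An iris that spans 20 px, seen by a camera with a 1000 px focal length.
distance = estimate_eye_distance_mm(20, 1000)

# The same iris, 100 px right of the image center of a 1280x720 frame.
position = eye_position_mm((740, 360), 20, 1000, (640, 360))
```

With these example numbers the eye works out to roughly 585 mm from the camera and about 58.5 mm off-axis. A 3D position like this is the input a view-dependent renderer needs to decide what the viewer should see.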

Generation of view-dependent images based on tracking a viewer’s eye position was inspired by a classic hack from Johnny Lee to create a VR display using a Wiimote. Hopefully, these eye-tracking approaches will continue to evolve and provide improved motion-responsive views into immersive virtual spaces.

VR Sickness: A New, Old Problem

Have you ever experienced dizziness, vertigo, or nausea while in a virtual reality experience? That’s VR sickness, and it’s a form of motion sickness. It is not a completely solved problem, and it affects people differently, but it all comes from the same root cause, and there are better and worse ways of dealing with it.

If you’ve experienced a sudden onset of VR sickness, it was most likely triggered by flying, sliding, or some other kind of movement in VR that caused a strong and sudden feeling of vertigo or dizziness. Or perhaps it was not sudden, and was more like a vague unease that crept up, leaving you nauseated and unwell.

Just like car sickness or sea sickness, people are differently sensitive. But the reason it happens is not a mystery; it all comes down to how the human body interprets and reacts to a particular kind of sensory mismatch.

Why Does It Happen?

The human body’s vestibular system is responsible for our sense of balance. It is in turn responsible for many boring, but important, tasks such as not falling over. To fulfill this responsibility, the brain interprets a mix of sensory information and uses it to build a sense of the body, its movements, and how it fits into the world around it.

These sensory inputs come from the inner ear, the body, and the eyes. Usually these inputs are in agreement, or they disagree so politely that the brain can confidently make a ruling and carry on without bothering anyone. But what if there is a nontrivial conflict between those inputs, and the brain cannot make sense of whether it is moving or not? For example, if the eyes say the body is moving, but the joints and muscles and inner ear disagree? The result of that kind of conflict is to feel sick.

Common symptoms are dizziness, nausea, sweating, headache, and vomiting. These messy symptoms are purposeful, for the human body’s response to this particular kind of sensory mismatch is to assume it has ingested something poisonous, and go into a failure mode of “throw up, go lie down”. This is what is happening, to a greater or lesser degree, in those experiencing VR sickness.

2022 Cyberdeck Contest: Cyberpack VR

Feeling confined by the “traditional” cyberdeck form factor, [adam] decided to build something a little bigger with his Cyberpack VR. If you’ve ever dreamed of being a WiFi-equipped porcupine, then this is the cyberdeck you’ve been waiting for.

Craving the upgradability and utility of a desktop in a more portable format, [adam] took an old commuter backpack and squeezed in a Windows 11 PC, Raspberry Pi, multiple WiFi networks, an ergonomic keyboard, a Quest VR headset, and enough antennas to attract the attention of the FCC. The abundance of network hardware is due to [adam]’s “new interest: a deeper understanding of wifi, and control of my own home network even if my teenage kids become hackers.”

The Quest is set up to run multiple virtual displays via Immersed, and thanks to the extra-long umbilical you can relax on the couch while leaving the bag on the floor nearby. One of the neat details of this build is repurposing the bag’s external helmet mount to attach the terminal unit when not in use. Other details we love are the toggle switches and the really integrated look of the antenna connectors and USB ports. The way these elements are integrated into the bag makes it feel borderline organic – all the better for your cyborg chic.

For more WiFi backpacking goodness you may be interested in the Pwnton Pack. We’ve also covered other non-traditional cyberdecks including the Steampunk Cyberdeck and the Galdeano. If you have your own cyberdeck, you have until September 30th to submit it to our 2022 Cyberdeck Contest!

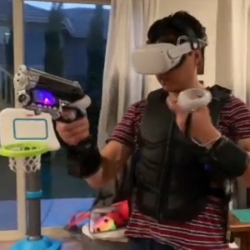

DIY Haptic-Enabled VR Gun Hits All The Targets

This VR Haptic Gun by [Robert Enriquez] is the result of hacking together different off-the-shelf products and tying it all together with an ESP32 development board. The result? A gun frame that integrates a VR controller (meaning it can be tracked and used in VR) and provides mild force feedback thanks to a motor that moves with each shot.

But that’s not all! Using the WiFi capabilities of the ESP32 board, the gun also responds to signals sent by a piece of software intended to drive commercial haptics hardware. That software hooks into the VR game and sends signals over the network telling the gun what’s happening, and [Robert]’s firmware acts on those signals. In short, every time [Robert] fires the gun in VR, the one in his hand recoils in synchronization with the game events. The effect is mild, but when it comes to tactile feedback, a little can go a long way.

The fact that this kind of experimentation is easily and affordably within the reach of hobbyists is wonderful, and VR certainly has plenty of room for amateurs to break new ground, as we’ve seen with projects like low-cost haptic VR gloves.

[Robert] walks through every phase of his gun’s design, explaining how he made various square pegs fit into round holes, and provides links to parts and resources in the project’s GitHub repository. There’s a video tour embedded below the page break, but if you want to jump straight to a demonstration in Valve’s Half-Life: Alyx, here’s a link to test firing at 10:19 in.

There are a number of improvements waiting to be done, but [Robert] definitely understands the value of getting something working, even if it’s a bit rough. After all, nothing fills out a to-do list or surfaces hidden problems like a prototype. Watch everything in detail in the video tour, embedded below.

Continue reading “DIY Haptic-Enabled VR Gun Hits All The Targets”

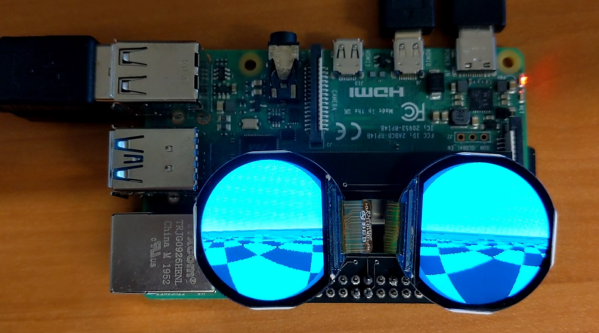

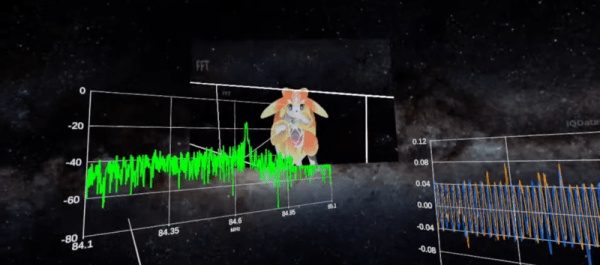

VR Spectrum Analyzer

At one point or another, we’ve probably all wished we had a VR headset that would allow us to fly around our designs. While not quite the same thing, [manahiyo831] has something that might even be better: a VR spectrum analyzer. You can get an idea of what it looks like in the video below, although that is actually from an earlier version.

The video shows a remote PC using an RTL dongle to pick up signals. The newer version runs on the Quest 2 headset, so you can simply attach the dongle to the headset. Sure, you’d look like a space cadet with this on, but — honestly — if you are willing to be seen in the headset, it isn’t that much more hardware.

What we’d really like to see, though, is a directional antenna so you could see the signals in the direction you were looking. Now that would be something. As it is, this is undeniably cool, but we aren’t sure what its real utility is.

What other VR test gear would you like to see? A Tron-like logic analyzer? A function generator that lets you draw waveforms in the air? A headset oscilloscope? Or maybe just a giant workbench in VR?

A spectrum analyzer is a natural project for an SDR. Or things that have SDRs in them.

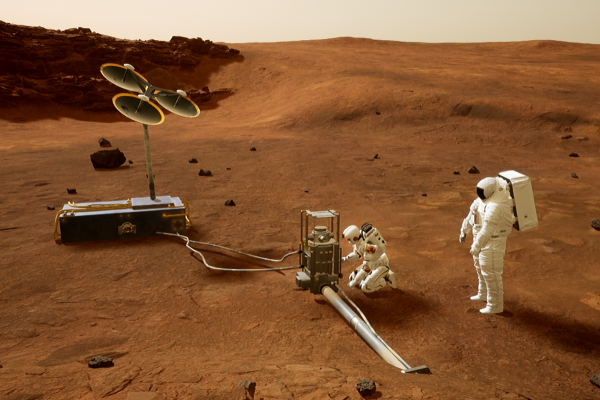

Can You Help NASA Build A Mars Sim In VR?

No matter your project or field of endeavor, simulation is a useful tool for finding out what you don’t know. In many cases, problems or issues aren’t obvious until you try and do something. Where doing that thing is expensive or difficult, a simulation can be a low-stakes way to find out some problems without huge costs or undue risks.

Going to Mars is about as difficult and expensive as it gets. Thus, it’s unsurprising that NASA relies on simulations in planning its missions to the Red Planet. Now, the space agency is working to create a Mars sim in VR for training and assessment purposes. The best part is that you can help!

Continue reading “Can You Help NASA Build A Mars Sim In VR?”