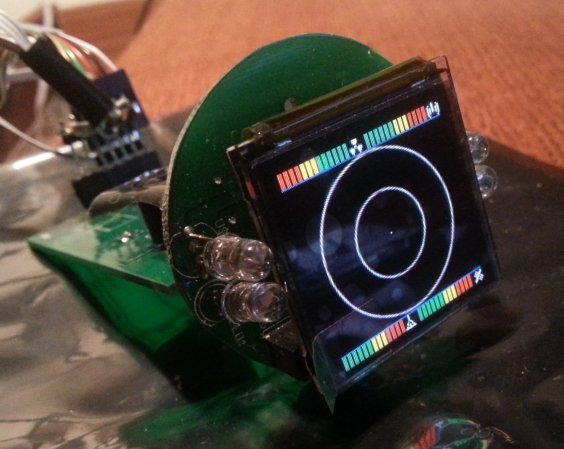

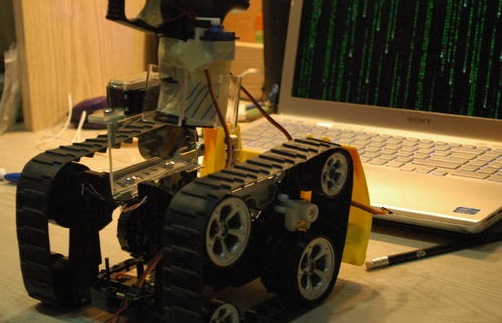

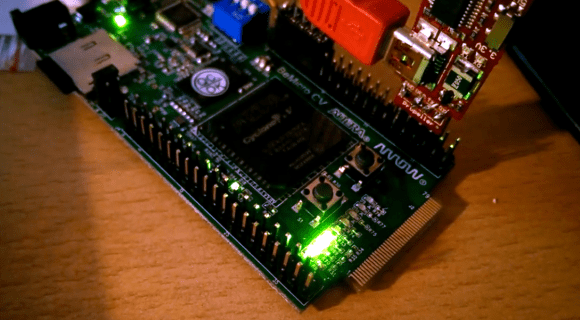

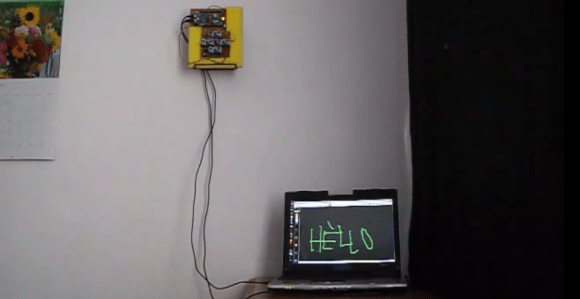

Making things appear on a screen simply by touching the air is a marvelous thing. One way to accomplish this uses four HC-SR04 ultrasonic sensor units that send data through an Arduino to a Linux computer. The end result is a virtual touchscreen that can be built at home.

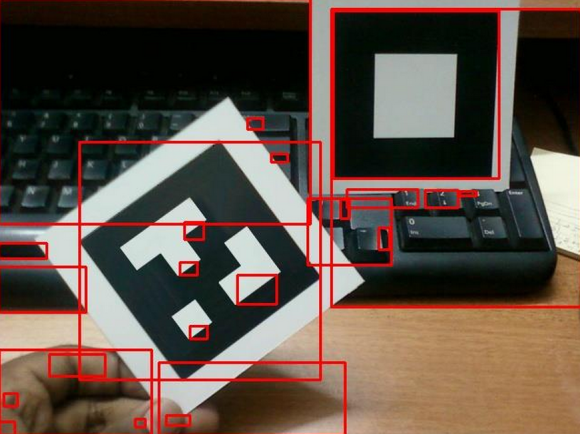

The software for this device was developed by [Anatoly], who translated hand gestures into actionable commands. The sensors attached to the Arduino had a scanning range of approximately 3 m, and the ultrasonic units were modified to broadcast an analog signal at 40 kHz. As [Anatoly] stated in the post, the original hardware design had a few limitations. For example, only one unit transmitted at a time at first, so the Arduino could not tell apart two objects on the same sphere, that is, objects equidistant from the transmitting unit. However, [Anatoly] later updated the blog with a second post showing that multiple items could be sensed at once. Occasionally, ranging was finicky with small items like pens. Besides that, it seemed to work pretty well.
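To see why a single transmitter struggles with two objects on the same sphere, it helps to look at the basic time-of-flight arithmetic behind ultrasonic ranging. The sketch below is not [Anatoly]'s code. It assumes a speed of sound of 343 m/s (dry air at roughly 20 °C) and an idealized sensor that transmits and receives at the same point, and the function names are made up for illustration.

```python
import math

# Assumed constant: speed of sound in dry air at about 20 °C.
SPEED_OF_SOUND_M_S = 343.0


def round_trip_s(sensor, target):
    """Time for a ping to travel from sensor to target and back, in seconds."""
    return 2.0 * math.dist(sensor, target) / SPEED_OF_SOUND_M_S


def echo_to_distance_m(echo_s):
    """Convert a measured round-trip echo time into a one-way distance."""
    return SPEED_OF_SOUND_M_S * echo_s / 2.0


# Hypothetical layout: one sensor at the origin, coordinates in metres.
sensor = (0.0, 0.0, 0.0)
pen = (1.0, 0.0, 0.0)   # 1 m away along the x axis
hand = (0.0, 0.0, 1.0)  # 1 m away along the z axis

t_pen = round_trip_s(sensor, pen)
t_hand = round_trip_s(sensor, hand)

# Both objects sit on the same 1 m sphere around the sensor, so their
# echoes arrive at the same moment and a lone sensor cannot separate them.
print(t_pen == t_hand)
print(echo_to_distance_m(t_pen))
```

A single echo time only pins an object to the surface of a sphere. Comparing the readings from several sensors at different positions is what narrows that down to a point in space.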

Additional technical specifications can be found on [Anatoly]’s blog, and videos of the system in action can be seen after the break.
