Ever think about all the moving parts involved in taking a big KiCad project into production? You need to provide manufacturer documentation, assembly instructions with renders to reference, and every output file the manufacturer could want, and all of it has to stay up to date. [Vincent Nguyen] has a software pipeline that creates all of these files and documents upon release, complete with an extensive installation and usage guide, helping you make your KiCad projects truly production-grade.

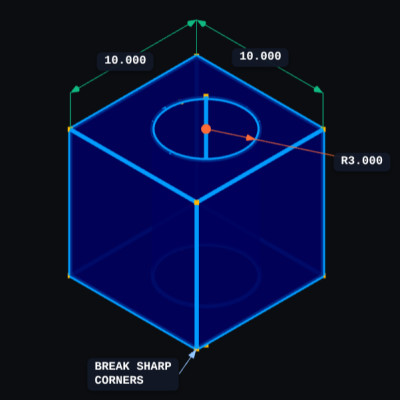

This KiBot-based project template has no shortage of features. It generates assembly documents with custom processing for a number of production scenarios, like DNPs, stackup and drill tables, and fab notes. It adds features like a table of contents and 3D renders to KiCad-produced documents, a step up from KiCad’s spartan defaults. And it autogenerates all the outputs you could want, from Gerbers, .step, and BOM files to ERC/DRC reports and visual diffs.

This pipeline is tailored to GitHub, but it can also be run locally, and it works wonderfully for those moments when you need to release a PCB into the wild while making sure that as few things as possible can go wrong during production. With all the features, it might take a bit to get used to. Don’t need the fully-featured version, just some images for your GitHub page? Then use this simple plugin to auto-add render images to your KiCad repositories.
