A popular expression in the Linux forums nowadays is noting that someone “uses Arch btw”, signifying that they have the technical chops to install and use Arch Linux, a distribution designed to be cutting edge but that also has a reputation of being for advanced users only. Whether this meme was originally posted seriously or was started as a joke at the expense of some of the more socially unaware Linux users is up for debate. Either way, while it is true that Arch can be harder to install and configure than something like Debian or Fedora, thanks to excellent documentation and modern (but optional) install tools it’s no longer that much harder to run than either of these popular distributions.

For my money, the true mark of a Linux power user is the ability to install and configure Gentoo Linux and use it as a daily driver or as a way to breathe life into aging hardware. Gentoo requires much more configuration than any mainline distribution outside of things like Linux From Scratch, and has been my own technical white whale for nearly two decades now. I was finally able to harpoon this beast recently and hope that my story inspires some to try Gentoo while, at the same time, saving others the hassle.

A Long Process, in More Ways Than One

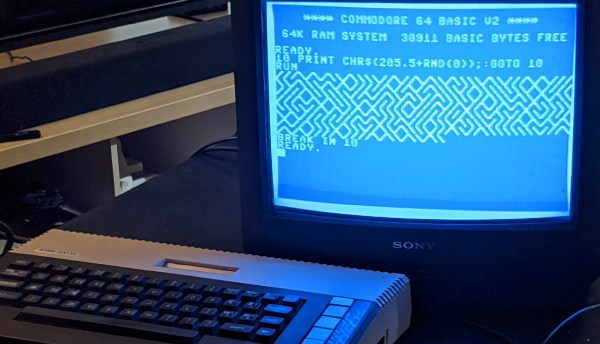

My first experience with Gentoo was in college at Clemson University in the late ’00s. The computing department there offered an official dual-boot image for any university-supported laptop at the time thanks to major effort from the Clemson Linux User Group, although the image contained the much-more-user-friendly Ubuntu alongside Windows. CLUG was largely responsible for helping me realize that I had options outside of Windows, and eventually I moved completely away from it and began using my own Linux-only installation. Being involved in a Linux community for the first time had me excited to learn about Linux beyond the confines of Ubuntu, though, and I quickly became the type of person featured in this relevant XKCD. So I fired up an old Pentium 4 Dell desktop that I had and attempted my first Gentoo installation.

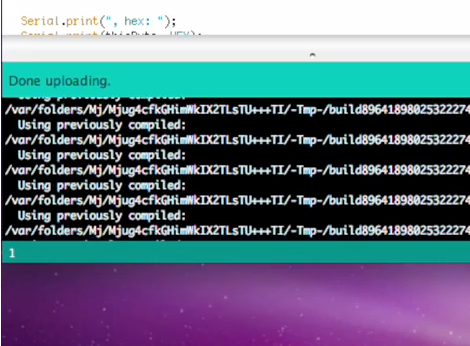

For the uninitiated, the main thing that separates Gentoo from most other distributions is that it is source-based, meaning that users generally must compile the source code for all the software they want to use on their own machines rather than installing pre-compiled binaries from a repository. So, for a Gentoo installation, everything from the bootloader to the kernel to the desktop to the browser needs to be compiled when it is installed. This can take an extraordinary amount of time especially for underpowered machines, although its ability to customize compile options means that the ability to optimize software for specific computers will allow users to claim that time back when the software is actually used. At least, that’s the theory. Continue reading “I Installed Gentoo So You Don’t Havtoo”