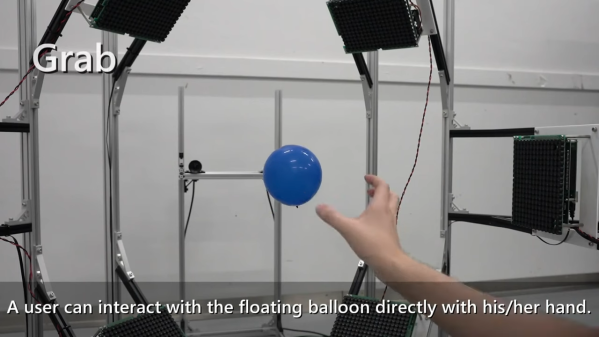

We’ve seen all kinds of interfaces come and go over the years, from keyboards and mice to light pens and touchscreens. Now, a group of researchers at the University of Tokyo have built a device that enables haptic interaction with a balloon.

It takes quite a rig to achieve this feat. A vaguely spherical frame mounts eleven airborne ultrasound phased arrays, or AUPAs. Each phased array is made up of many ultrasonic transducers, with the machine having 2739 individual transducers in total. The phased arrays are driven so as to create a sound field that moves the balloon around and holds it in various desired positions. Closed-loop control is achieved with stereo cameras, which track the balloon’s position at high speed.
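For a rough feel of what steering a phased array involves, here is a minimal sketch of single-point focusing: each transducer gets a phase offset proportional to its distance from the target, so that all the waves arrive there in step. This is not the researchers’ control code. The 40 kHz drive frequency, the 10 mm grid pitch, and the names `make_grid` and `focus_phases` are assumptions made purely for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, air at roughly 20 °C
FREQUENCY = 40_000.0    # Hz; assumed drive frequency, not stated in the article


def make_grid(n, pitch):
    """Hypothetical n x n flat array centred on the origin in the z = 0 plane."""
    offset = (n - 1) / 2
    return [((i - offset) * pitch, (j - offset) * pitch, 0.0)
            for i in range(n) for j in range(n)]


def focus_phases(transducers, focal_point):
    """Return one drive phase per transducer, in radians within [0, 2*pi).

    For a drive signal sin(w*t + phase), the wave reaching the focus is
    sin(w*t - k*d + phase). Choosing phase = k*d (mod 2*pi) cancels the
    propagation delay, so every contribution arrives in phase.
    """
    k = 2 * math.pi * FREQUENCY / SPEED_OF_SOUND  # wavenumber, rad/m
    return [(k * math.dist(pos, focal_point)) % (2 * math.pi)
            for pos in transducers]
```

Calling `focus_phases(make_grid(4, 0.01), (0.0, 0.0, 0.2))` yields sixteen phases for a focus 20 cm above the centre of a small array. A real system would recompute these many times per second, using the camera-tracked balloon position to decide where the sound field needs to push next.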

The system allows the balloon to be moved around quickly in three dimensions. Plus, a user can touch and interact with the balloon directly as it floats in mid-air. They can even drag and redirect the balloon, with the stereo camera system tracking the movement.

The research team don’t highlight any particular applications for this technology at this stage. We’re not expecting the Touch Balloon on next year’s Surface Pro or the next MacBook, that’s for sure. However, it’s great fun to look at and likely has some creative applications that we can’t think of off the top of our heads. Share yours in the comments.

The 2022 Hackaday Prize has a special focus on odd inputs and peculiar peripherals, so be sure to check out that whole scene. Video after the break.
