With all of the various keyboards, mouses (mice?), and other human interface devices (HID) available for our computers, there’s no possible way for developers to anticipate every type of input for every piece of software they build. Most of the time everything will work fine as long as some basic standards are kept, both from the hardware and software sides, but that’s not always the case. [Losso] noticed a truly terrible volume control method when visiting certain websites while also using a USB volume knob, and used this quirk to build a Breakout game with it.

It turns out his volume control knob would interact simultaneously with certain video players’ built-in volume control and the operating system’s volume, leading to a number of undesirable conditions. However, the fact that this control is built into certain browsers in the first place made it the foundation for the Breakout clone [Losso] is calling KNOB-OUT. Unlike the volume buttons on something like a multimedia keyboard, the USB volume control knob can be configured much more easily to account for acceleration, making it more faithful to the original arcade version of the game. The game itself is coded in JavaScript, with the source code available right in the browser.
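For a rough idea of how a volume knob could drive a paddle, here is a minimal sketch. It assumes the knob’s input ends up changing a media element’s `volume` property and firing the standard `volumechange` event; that is an assumption for illustration, not a description of how KNOB-OUT is actually written. The names `paddleLeftFromVolume` and `attachKnob` are hypothetical.

```javascript
// Hypothetical helper: map a media element's volume (0.0 to 1.0)
// to the x position of the paddle's left edge inside the playfield.
function paddleLeftFromVolume(volume, fieldWidth, paddleWidth) {
  // Clamp defensively so an out-of-range value can't push the paddle off-screen.
  const clamped = Math.min(1, Math.max(0, volume));
  return clamped * (fieldWidth - paddleWidth);
}

// Hypothetical wiring: call onMove with a new paddle position whenever the
// element's volume changes. `mediaElement` is assumed to be an <audio> or
// <video> element (or anything with `volume` and `addEventListener`).
function attachKnob(mediaElement, fieldWidth, paddleWidth, onMove) {
  mediaElement.addEventListener('volumechange', () => {
    onMove(paddleLeftFromVolume(mediaElement.volume, fieldWidth, paddleWidth));
  });
}
```

In a page, this might be used as `attachKnob(document.querySelector('video'), 800, 100, x => { paddle.style.left = x + 'px'; })`, where the `paddle` element and the dimensions are placeholders. Because `volume` is bounded between 0 and 1, the paddle in this sketch simply stops at the edges of the field.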

If you’d like to play [Losso]’s game, here’s a direct link to it, although small web-based projects like these tend to experience some slowdown when they first get posted here. And, if you’re looking for some other games to play in a browser like it’s the mid-00s again, we’re fans of this project which brings the unofficial Zelda game Zelda Classic to our screens.

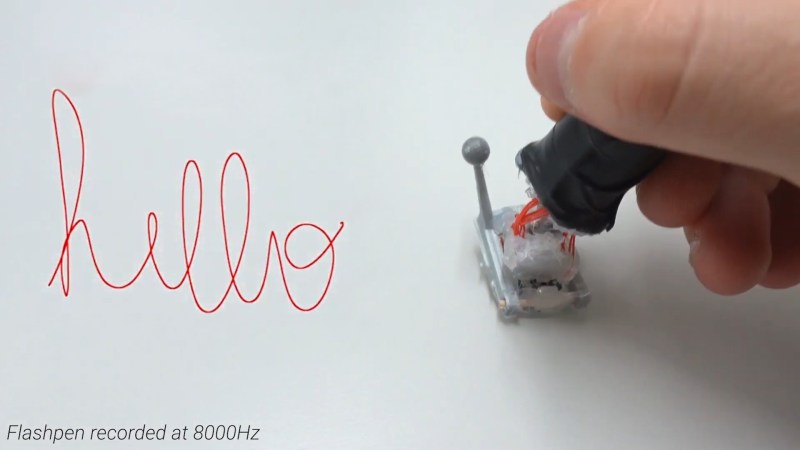

The fundamental technology behind the pen is simple, with the device using an optical flow sensor harvested from a high-end gaming mouse. This is a device that uses an image sensor to detect the motion of the sensor itself across a surface. Working at an update rate of 8 kHz, it eclipses other devices on the market from manufacturers such as Wacom, which typically operate at rates closer to 200 Hz. The optical sensor is mounted to a plastic joint that allows the user to hold the pen at a natural angle while keeping the sensor parallel to the writing surface. There’s also a reflective sensor on the pen tip which allows cameras to track its position in space, for use in combination with VR technology.
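To put those update rates in perspective, here is a small back-of-the-envelope calculation using only the two figures quoted above (8 kHz and roughly 200 Hz). The helper name `reportIntervalMs` is made up for this sketch.

```javascript
// Hypothetical helper: time between consecutive position reports, in milliseconds.
function reportIntervalMs(rateHz) {
  return 1000 / rateHz;
}

const penIntervalMs = reportIntervalMs(8000);   // 0.125 ms between reports
const tabletIntervalMs = reportIntervalMs(200); // 5 ms between reports

// How many pen reports arrive in the time a 200 Hz device sends one.
const ratio = tabletIntervalMs / penIntervalMs; // 40
```

In other words, at the quoted rates the pen would deliver about 40 position updates in the time a 200 Hz device delivers one.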
