Drones are increasingly being used for urban surveillance, delivery, and inspecting architectural structures. Doing this autonomously often relies on “map-localize-plan” techniques: first the drone’s location is determined on a map using GPS, and then control commands are generated based on that location.

A neural network that predicts steering and collisions can complement map-localize-plan techniques. However, such a network needs to be trained on video taken from actual flying drones, and generating that training video means many hours of flying drones at street level, putting vehicles and pedestrians at risk. To train their network, DroNet, researchers from the University of Zurich and the Universidad Politécnica de Madrid turned to safer sources: video recorded from cars and bicycles.

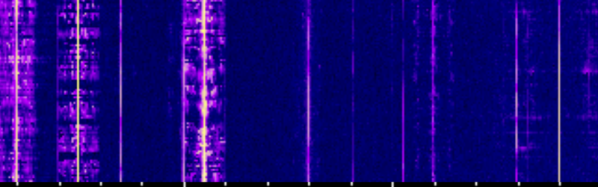

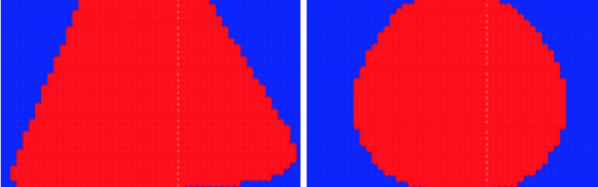

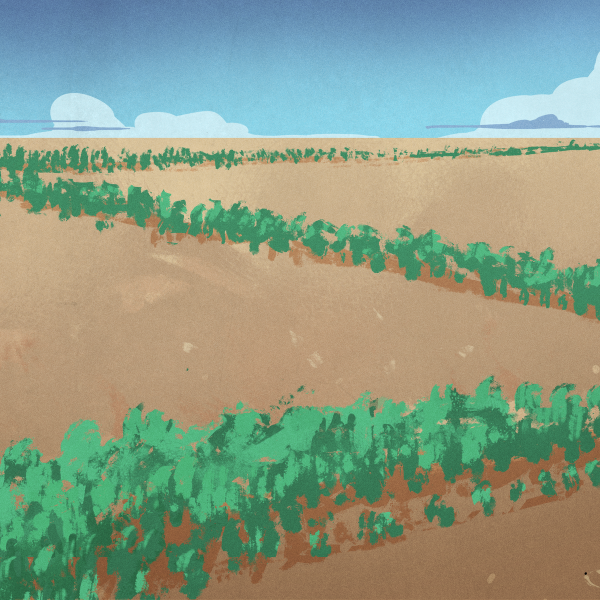

For steering prediction, they used over 70,000 images and their corresponding steering angles from the publicly available car-driving data of Udacity’s Open Source Self-Driving project. For collision prediction, they mounted a GoPro camera on the handlebars of a bicycle and rode around a city, starting each recording far from an obstacle and stopping it when very close to the obstacle. In total, they collected 32,000 images.
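The article doesn’t say how the two datasets feed a single network. One common approach, assumed here and not necessarily what the researchers did, is to give the network two outputs and let each training image supervise only the output it has a label for: car frames train steering with a squared-error loss, and bicycle frames train collision with binary cross-entropy. The sketch also labels a bicycle clip with a hypothetical rule (`close_fraction`) that marks the final frames of each approach as “collision.” `Sample`, `label_approach_clip`, and `sample_loss` are illustrative names, and images are stood in for by file names.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Sample:
    frame: str                 # stand-in for an image, e.g. a file path
    steering: Optional[float]  # steering angle (car data); None for bike frames
    collision: Optional[int]   # 1 = collision imminent, 0 = safe (bike data); None for car frames


def label_approach_clip(frames: List[str], close_fraction: float = 0.3) -> List[Sample]:
    """Label one bicycle clip that starts far from an obstacle and ends very close to it.

    HYPOTHETICAL rule: the last `close_fraction` of the frames are labeled 1
    (collision) and the rest 0. The real labeling rule is not given in the article.
    """
    n = len(frames)
    cutoff = n - round(n * close_fraction)
    return [Sample(f, None, 1 if i >= cutoff else 0) for i, f in enumerate(frames)]


def sample_loss(pred_steering: float, pred_collision: float, sample: Sample,
                eps: float = 1e-7) -> float:
    """Each sample supervises only the output it has a label for."""
    if sample.steering is not None:
        # Regression on the steering angle: squared error.
        return (pred_steering - sample.steering) ** 2
    # Classification on the collision label: binary cross-entropy.
    p = min(max(pred_collision, eps), 1.0 - eps)  # clamp to avoid log(0)
    y = sample.collision
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))


if __name__ == "__main__":
    clip = [f"bike_{i:03d}.jpg" for i in range(10)]
    print([s.collision for s in label_approach_clip(clip)])  # [0, 0, 0, 0, 0, 0, 0, 1, 1, 1]
    car = Sample("car_000.jpg", steering=0.1, collision=None)
    print(round(sample_loss(0.3, 0.5, car), 4))  # 0.04
```

Keeping the two losses separate means a car frame never has to supply a collision label, and a bicycle frame never has to supply a steering angle.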

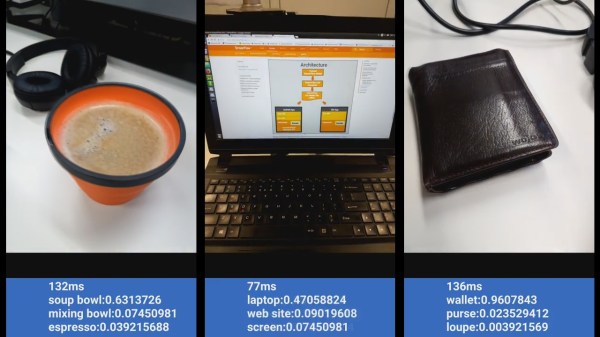

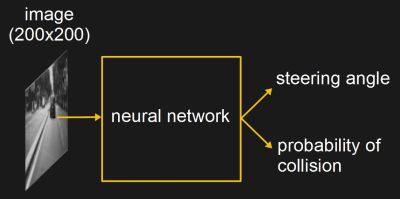

To use the trained network, images from the drone’s forward-facing camera were fed into it, and it output a steering angle and a probability of collision, the latter of which was converted into a velocity. The drone flew at a constant height above the ground, and it worked well at heights from 1.5 to 5 meters. It successfully followed road lanes and avoided moving pedestrians and bicycles. Intersections did confuse it, though, likely because the open space threw off the collision predictions. We don’t think that should be a problem when it is paired with map-localize-plan techniques, since the drone’s position on the map would determine which way to go through the intersection.
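The article says the collision probability was converted into a velocity but doesn’t give the rule. A minimal sketch, assuming a simple rule: scale a top speed by (1 − p), map a normalized steering output to an angle, and low-pass filter both so a single noisy frame can’t make the drone lurch. The function name, the [-1, 1] steering range, and the constants `v_max`, `alpha`, and `beta` are all hypothetical.

```python
import math
from typing import Tuple


def update_commands(steer_out: float, p_collision: float,
                    prev_v: float, prev_yaw: float,
                    v_max: float = 1.0, alpha: float = 0.7,
                    beta: float = 0.5) -> Tuple[float, float]:
    """Turn one frame's network outputs into smoothed flight commands.

    steer_out:   steering output, ASSUMED normalized to [-1, 1]
    p_collision: collision probability in [0, 1]
    v_max, alpha, beta: HYPOTHETICAL tuning constants (top speed in m/s and
                 low-pass filter gains); not taken from the article.
    """
    # Guard against outputs slightly outside their expected ranges.
    steer_out = min(max(steer_out, -1.0), 1.0)
    p_collision = min(max(p_collision, 0.0), 1.0)

    # Higher collision probability -> slower; p = 1 commands a full stop.
    target_v = (1.0 - p_collision) * v_max
    # Map the normalized steering output to a steering (yaw) command in [-pi/2, pi/2].
    target_yaw = steer_out * math.pi / 2

    # Exponential smoothing: blend the new target with the previous command.
    v = (1.0 - alpha) * prev_v + alpha * target_v
    yaw = (1.0 - beta) * prev_yaw + beta * target_yaw
    return v, yaw


if __name__ == "__main__":
    v, yaw = 0.0, 0.0
    # Fake per-frame outputs: clear road, slight turn, then an obstacle appears.
    for steer, p in [(0.0, 0.1), (0.2, 0.1), (0.2, 0.9)]:
        v, yaw = update_commands(steer, p, v, yaw)
        print(f"v={v:.3f} m/s  yaw={yaw:.3f} rad")
```

In this sample run the speed climbs to about 0.82 m/s on the clear road and then falls to about 0.32 m/s once the collision probability jumps to 0.9, while the steering command eases toward the turn instead of snapping to it.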

As you can see in the video below, it not only does a decent job of flying down lanes but also flies well in a parking garage and a hallway, even though it wasn’t trained for either environment.
