Reinforcement learning is a subset of machine learning where the machine is scored on their performance (“evaluation function”). Over the course of a training session, behavior that improved final score is positively reinforced gradually building towards an optimal solution. [Dheera Venkatraman] thought it would be fun to use reinforcement learning for making a little robot lamp move. But before that can happen, he had to build the hardware and prove its basic functionality with a manual test script.

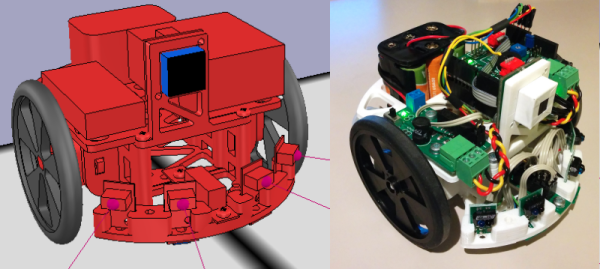

Inspired by the hopping logo of Pixar Animation Studios, this particular form of locomotion has a few counterparts in the natural world. But hoppers of the natural world don’t take the shape of a Luxo lamp, making this project an interesting challenge. [Dheera] published all of his OpenSCAD files for this 3D-printed lamp so others could join in the fun. Inside the lamp head is a LED ring to illuminate where we expect a light bulb, while also leaving room in the center for a camera. Mechanical articulation servos are driven by a PCA9685 I2C PWM driver board, and he has written and released code to interface such boards with Robot Operating System (ROS) orchestrating our lamp’s features. This completes the underlying hardware components and associated software foundations for this robot lamp.

Once all the parts have been printed, electronics wired, and everything assembled, [Dheera] hacked together a simple “Hello World” script to verify his mechanical design is good enough to get started. The video embedded after the break was taken at OSH Park’s Bring-A-Hack afterparty to Maker Faire Bay Area 2019. This motion sequence was frantically hand-coded in 15 minutes, but these tentative baby hops will serve as a great baseline. Future hopping performance of control algorithms trained by reinforcement learning will show how far this lamp has grown from this humble “Hello World” hop.

[Dheera] had previously created the shadow clock and is no stranger to ROS, having created the ROS topic text visualization tool for debugging. We will be watching to see how robot Luxo will evolve, hopefully it doesn’t find a way to cheat! Want to play with reinforcement learning, but prefer wheeled robots? Here are a few options.