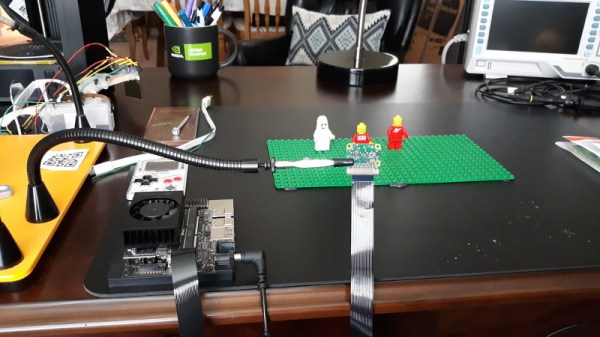

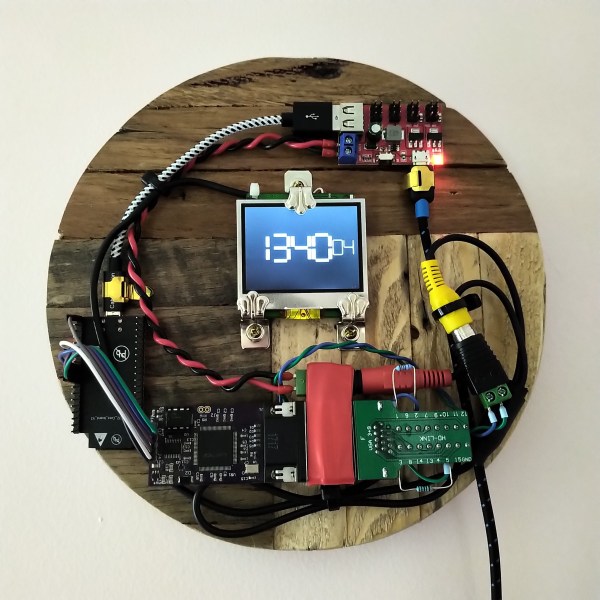

Despite the best efforts of scientists around the world, the current global pandemic continues onward. But even if you aren’t working on a new vaccine or trying to curb the virus with some other seemingly miraculous technology, there are a few other ways to help prevent its spread. By now we all know how to do that physically, but thanks to [James Devine] and a team at CERN we can also model virus exposure directly on our own self-hosted Raspberry Pis.

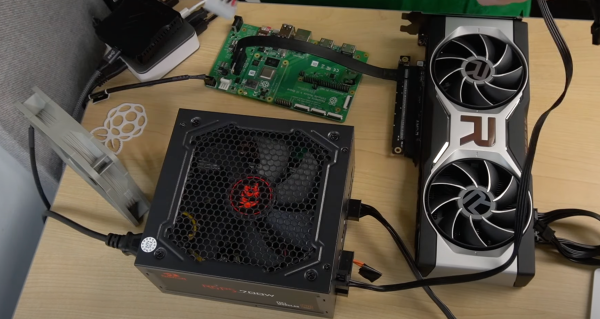

The program, called the Covid-19 Airborne Risk Assessment (CARA), takes in a number of metrics about the size and shape of an area, the number of countermeasures already in place, and plenty of other information in order to provide a computer-generated model of the number of virus particles predicted as a function of time. It can run on a number of different Pi models, although [James] recommends using the Pi 4 since the model does take up a significant amount of computing resources. Of course, this only generates statistical likelihoods of virus transmission, but it does help build a more accurate understanding of specific situations.
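To get a feel for what "particles as a function of time" means, here is a minimal Python sketch of a generic well-mixed room model. To be clear, this is not CARA's code or its actual method; it is a textbook mass balance in which an infected person emits particles at a constant rate and ventilation removes them. The function name `concentration` and every number below are hypothetical, picked only for illustration.

```python
import math


def concentration(t_hours, emission_rate, room_volume, removal_rate):
    """Particle concentration (particles per m^3) after t_hours in a well-mixed room.

    Closed-form solution of
        dC/dt = emission_rate / room_volume - removal_rate * C,  C(0) = 0
    Assumption: constant emission and constant removal (e.g. air changes per hour).
    """
    steady_state = emission_rate / (removal_rate * room_volume)
    return steady_state * (1.0 - math.exp(-removal_rate * t_hours))


# Hypothetical inputs, not taken from CARA or the CERN paper
room_volume = 50.0      # m^3
emission_rate = 1000.0  # particles emitted per hour

# Compare a poorly ventilated room with a well ventilated one
for air_changes_per_hour in (0.5, 3.0):
    for t in (0.5, 1.0, 2.0, 4.0):
        c = concentration(t, emission_rate, room_volume, air_changes_per_hour)
        print(f"ACH={air_changes_per_hour}  t={t} h  C={c:.2f} particles/m^3")
```

Even in this toy version, the concentration climbs toward a plateau, and more ventilation lowers that plateau. A real tool like CARA accounts for far more than this sketch does.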

For more information on how all of this works, the group at CERN also released a paper about their model. One of the goals of this project is to be freely available and to run on relatively inexpensive hardware, so hopefully plenty of people around the world will be able to run it easily and further develop our understanding of how the virus spreads. For other ways of using your own computing power to help fight Covid, don’t forget about Folding@Home for using up all those extra CPU and GPU cycles.