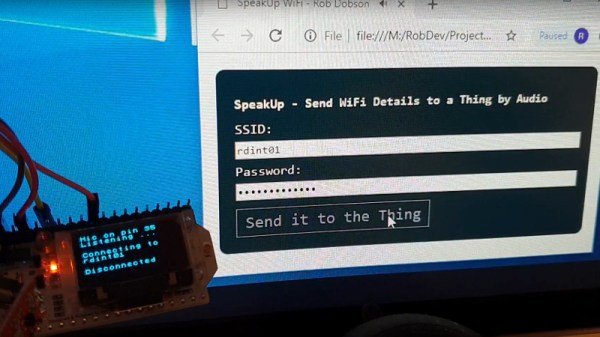

If you’ve followed along with our series so far, you know we’ve set up a network of Raspberry Pis that PXE boot off a central server, and then used Zoneminder to run a network of IP cameras. Now that some useful services are running in our smart house, how do we access those services when away from home, and how do we keep the rest of the world from spying on our cameras?

Before we get to VPNs and port forwarding, there is a more fundamental issue: Do you trust your devices? What exactly is the firmware on those cheap cameras really doing? You could use Wireshark and a smart switch with port mirroring to audit the camera’s traffic. How much traffic would you need to inspect to feel confident the camera never sends your data off somewhere else?

Thankfully, there’s a better way. One of the major features of surveillance software like Zoneminder is that it aggregates the feeds from the cameras. This process also has the effect of proxying the video feeds: We don’t connect directly to the cameras in order to view them, we connect to the surveillance software. If you don’t completely trust those cameras, then don’t give them internet access. You can make the cameras a physically separate network, only connected to the surveillance machine, or just set their IP addresses manually, and don’t fill in the default route or DNS. Whichever way you set it up, the goal is the same: let your surveillance software talk to the cameras, but don’t let the cameras talk to the outside world.

Edit: As has been pointed out in the comments, leaving off a default route is significantly less effective than separate networks. A truly malicious peice of hardware could easily probe for the gateway.

This idea applies to more than cameras. Any device that doesn’t need internet access to function, can be isolated in this way. While this could be considered paranoia, I consider it simple good practice. Join me after the break to discuss port forwarding vs. VPNs.

Continue reading “Hack My House: Opening Raspberry Pi To The Internet, But Not The Whole World”