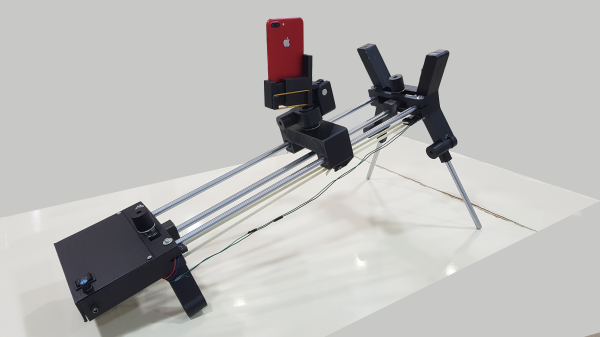

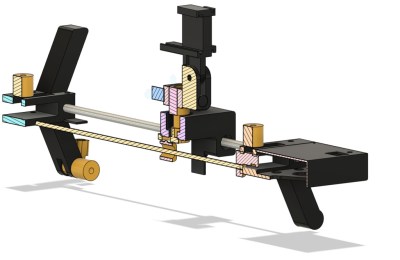

Historically, moving and pointing a camera while filming was the job of a highly skilled individual. However, there are now machines that can do that, enabling all kinds of fancy movements that are difficult or impossible for a human to recreate. A great example is this pan-tilt build from [immofoto3d].

The build uses a hefty cradle to mount DSLR-sized cameras or similar. It’s controlled in the tilt axis by a chunky NEMA 17 stepper motor hooked up to a belt drive for smooth, accurate movement. Similarly, another stepper motor handles the pan axis, with the option to upgrade it if you have a heavier camera rig that needs more torque to spin easily. Named Gantry Bot, it’s an open-source design with source files available, so you can make any necessary tweaks on your own. You will have to bring your own control mechanism, though: telling the stepper motors what to do and how fast to do it is up to you.
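Since the control side is left to the builder, here is a rough idea of the arithmetic any controller would need: turning a desired camera angle and speed into a step count and a delay between step pulses. This is only a sketch, not anything from the Gantry Bot files. The `Axis` class, the `plan_move` helper, and every number in it (200 full steps per revolution, 16x microstepping, a 4:1 belt reduction) are assumptions for illustration, so swap in the values for your own motor, driver, and pulleys.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Axis:
    """One belt-driven axis. Every default here is an illustrative assumption."""
    full_steps_per_rev: int = 200  # assumed 1.8-degree stepper
    microsteps: int = 16           # assumed driver microstepping setting
    belt_ratio: float = 4.0        # assumed driven-pulley / motor-pulley tooth ratio

    @property
    def steps_per_degree(self) -> float:
        # Microsteps the motor must take to rotate the camera by one degree.
        return self.full_steps_per_rev * self.microsteps * self.belt_ratio / 360.0


def plan_move(axis: Axis, degrees: float, deg_per_sec: float) -> Tuple[int, int, float]:
    """Return (step count, direction, seconds between pulses) for a constant-speed move."""
    if deg_per_sec <= 0:
        raise ValueError("deg_per_sec must be positive")
    steps = round(abs(degrees) * axis.steps_per_degree)
    direction = 1 if degrees >= 0 else -1
    interval = 1.0 / (deg_per_sec * axis.steps_per_degree)
    return steps, direction, interval


# Example: pan 90 degrees at 10 degrees per second.
steps, direction, interval = plan_move(Axis(), degrees=90.0, deg_per_sec=10.0)
print(f"{steps} steps, direction {direction}, {interval * 1e6:.1f} us between steps")
```

A real controller would also ramp the speed up and down rather than jumping straight to full rate, which helps avoid missed steps with a heavy camera on the cradle. The sketch above skips that and only covers the constant-speed case.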

It’s a heavy-duty build, this one, and you’ll really want a decent metal-capable CNC to get it done, along with a 3D printer for all the plastic pieces. With that said, we’ve featured some other similar builds that might be more accessible if you don’t have a hardcore machine shop in the basement. If you’ve got your own impressive motion rig in the works, be sure to notify the tipsline!