The Raspberry Pi is famous for its low cost, versatile and open Linux environment, and plentiful I/O, making it a perfect device not only for its originally intended educational purposes but for basically every hobbyist from gardeners to roboticists to amateur radio operators. Most builds tend to make use of the GPIO pins, which allow easy connections to various peripherals and sensors, but the Pi also supports PCIe devices, which means that, in theory, it could use a GPU in much the same way that a modern computer would. After plenty of testing and development, [Jeff Geerling] brings us this custom graphics card interface for the Raspberry Pi.

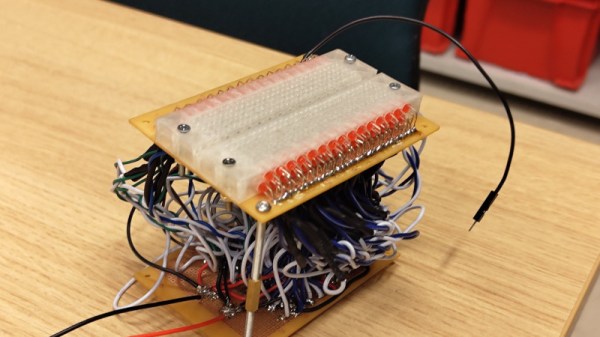

The testing for all of these graphics cards has been done with a Pi Compute Module 4, and the end result is an interface device which looks much like a graphics card itself. It splits the PCIe bus out onto a more familiar x16 slot connector and adds physical connections for power, USB, and Ethernet. When plugged into the carrier board, the Compute Module can be attached to any of a number of graphics cards, including the latest and highest-end offerings from Nvidia and AMD.

Perhaps unsurprisingly, though, the 4090 and 7900 cards don’t work with the Raspberry Pi. This is partially due to the 32-bit limitations of the Pi and other memory mapping issues. Even after attempting some workarounds, Nvidia’s cards aren’t open-source enough to test properly (although the card is recognized by the Pi), and AMD’s drivers crash the system even after compiling a custom kernel. [Jeff] did find an Nvidia card that worked, although it requires using the USB interface and second-hand cards are selling for around $3,000 USD. For a more economical choice, there are some other graphics cards that he was eventually able to get working, albeit not with perfect performance, including some of the ones we’ve seen him test already.
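
For anyone who wants to check whether their own Pi at least recognizes a card on the bus, here is a rough sketch of one way to do it. This is not [Jeff]'s tooling; it simply reads the standard Linux sysfs entries under `/sys/bus/pci/devices` and lists anything whose PCI class code marks it as a display controller (top byte `0x03`). The function name `find_display_devices` and the small vendor table are our own illustrative choices, and a card showing up here says nothing about whether a driver will actually load.

```python
from pathlib import Path

# Well-known PCI vendor IDs (a tiny illustrative table, not exhaustive)
VENDORS = {0x10DE: "Nvidia", 0x1002: "AMD"}


def find_display_devices(sysfs_root="/sys/bus/pci/devices"):
    """Return (address, vendor, class code) for each PCI display controller.

    Hypothetical helper: it only inspects sysfs and does not load or
    probe any driver.
    """
    found = []
    root = Path(sysfs_root)
    if not root.is_dir():
        return found  # no PCI bus exposed (or not running on Linux)
    for dev in sorted(root.iterdir()):
        try:
            vendor = int((dev / "vendor").read_text().strip(), 16)
            class_code = int((dev / "class").read_text().strip(), 16)
        except (OSError, ValueError):
            continue  # skip entries we cannot read or parse
        # The top byte of the 24-bit class code is the base class;
        # 0x03 means "display controller".
        if class_code >> 16 == 0x03:
            name = VENDORS.get(vendor, hex(vendor))
            found.append((dev.name, name, hex(class_code)))
    return found


if __name__ == "__main__":
    for address, vendor, class_code in find_display_devices():
        print(address, vendor, class_code)
```

Running it on a Pi with a card attached should print one line per detected GPU; an empty result suggests the card is not being enumerated at all, which is a different problem from a driver that crashes.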