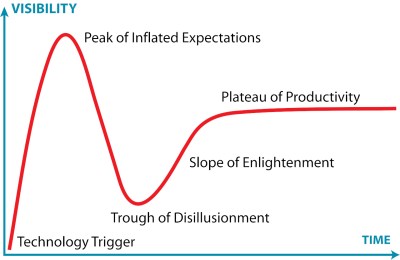

There’s been a lot of virtual ink spilled in environmental circles about the cooling water requirements of data centers, but less consideration of what happens with all the heat coming out of these buildings. Naturally, it’s going to warm the surrounding environment, but how much? Around 2 C (3.6 F) on average, and potentially much more than that, according to a recent study on the data heat island effect.

It’s common sense, of course: heat removed from the data center doesn’t go away. That heat might go into a body of water if one is available, but otherwise it’s out into the atmosphere to warm up everybody else’s day. In some places, like Canada in winter, that might not be so bad. In others, where climate change and urban heat islands are cranking up the summertime temperatures, it very much could be, especially if you’re in the worst-case microclimate described by the paper, which saw a predicted increase of 9.1 C (16 F).

Now, these results are theoretical and need to be ground-truthed, but anyone who has huddled next to the air-exchange unit of a large building for warmth knows there’s something to them. Unfortunately, there don’t seem to be before-and-after measurements available for existing data centers (AI or otherwise) to show exactly what their heat output is doing in the real world, but the urban heat island effect from all the dark asphalt in our cities is well known. Cooling paint and green roofs can help with that, but they won’t do much for the megawatts being pumped out to keep your cousin’s AI girlfriend online.

Some would argue that all this heat wouldn’t be a problem if we could launch the data centers outside the environment — just have a care the front doesn’t fall off.