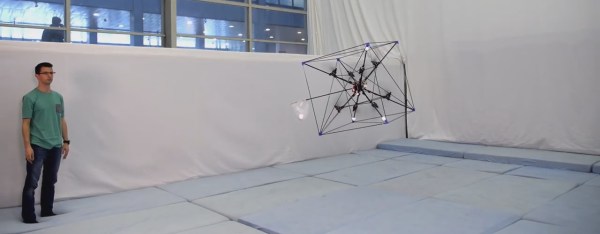

We don’t know how much time passed between the invention of the wheel and someone putting wheels on their feet, but we expect it was a great moment of discovery: combining the wheel’s ability to roll off at speed with our legs’ ability to quickly adapt to changing terrain. Now that we have a wide assortment of recreational wheeled footwear, what’s next? How about teaching robots to skate, too? An IEEE Spectrum interview with [Marko Bjelonic] of ETH Zürich describes progress by one of many research teams working on the problem.

For many of us, the first time we saw a robot rolling on powered wheels at the end of actively articulated legs was when footage of the Boston Dynamics ‘Handle’ project surfaced a few years ago. Rolling up and down a wide variety of terrain and performing an occasional jump, its athleticism caused quite a stir in robotics circles. But when Handle was introduced as a commercial product, its job was… stacking boxes in a warehouse? That was disappointing. Warehouse floors are quite flat, leaving Handle’s agility underutilized.

Boston Dynamics has typically been pretty tight-lipped about the details of their robotics development, so we may never know the full story behind Handle. But what they have definitely accomplished is getting a lot more people thinking about the control problems involved. Even we humans face a nontrivial learning curve paved with bruised and occasionally broken body parts, and that’s before we start applying power to the wheels. So there are plenty of problems to solve, generating a steady stream of research papers describing how robots might master this mode of locomotion.

Adding to the excitement is the fact that this is becoming an area where reality is catching up to fiction, as wheeled-legged robots have been imagined in forms like the Tachikoma of Ghost in the Shell. While those fictional robots have inspired projects ranging from LEGO creations to 28-servo beasts, their wheel and leg motions have not been autonomously coordinated as they are in this generation of research robots.

As control algorithms mature in robot research labs around the world, we’re confident we’ll see wheeled-legged robots finding applications in other fields. This concept is far too cool to be left stacking boxes in a warehouse.