Observing a colony, swarm or similar grouping of creatures like ants or zebrafish over longer periods of time can be tricky. Simply recording their behavior with a camera misses a lot of information about the position of their body parts, while taking precise measurements with a laser-based system such as LiDAR comes at the cost of resolution or update speed. The ideal monitoring system would record at high data rates and resolutions while presenting the recorded data in all three dimensions. This is where the work by Kevin C. Zhou and colleagues seeks to tick all the boxes, with a recent paper (preprint, open access) in Nature Photonics describing their 3D-RAPID system.

This system features a 9×6 camera grid, for a total of 54 cameras imaging the surface below. With 66% overlap between neighboring cameras along the horizontal dimension, there is enough duplicate data between the image streams for the subsequent processing step to extract and reconstruct 3D features, helped by a pixel pitch of 9.6 to 38.4 µm. The software is available via the authors' GitHub.
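
To see why a 66% overlap provides enough redundancy, consider an idealised one-dimensional row of identical, evenly spaced cameras. This is an assumption for illustration only; the real 3D-RAPID geometry and calibration are not modeled here. Each camera is shifted by 34% of a field of view relative to its neighbor, so on average a point is seen by 1/0.34 ≈ 2.9 cameras. The hypothetical helpers `cameras_covering` and `coverage_range` below count this directly:

```python
def cameras_covering(x: float, n_cameras: int, overlap: float, fov_width: float = 1.0) -> int:
    """Count the cameras in one row whose field of view contains position x.

    Idealised 1D model (an assumption, not the paper's calibration): identical
    cameras with field-of-view width `fov_width`, placed so that neighbouring
    views overlap by the fraction `overlap`. Camera k covers
    [k * stride, k * stride + fov_width).
    """
    stride = (1.0 - overlap) * fov_width  # shift between neighbouring cameras
    return sum(1 for k in range(n_cameras) if k * stride <= x < k * stride + fov_width)


def coverage_range(n_cameras: int, overlap: float, fov_width: float = 1.0,
                   samples: int = 10_000) -> tuple[int, int]:
    """Min and max number of cameras seeing a point, away from the row's ends."""
    stride = (1.0 - overlap) * fov_width
    # Points in [fov_width - stride, n_cameras * stride) are not clipped
    # by the first or last camera of the row.
    lo, hi = fov_width - stride, n_cameras * stride
    if hi <= lo:
        raise ValueError("row too short to have an interior region")
    # Sample the interior region evenly and collect the distinct view counts.
    counts = {cameras_covering(lo + (hi - lo) * i / samples, n_cameras, overlap, fov_width)
              for i in range(samples)}
    return min(counts), max(counts)


# Hypothetical example: a row of 9 cameras with the 66% overlap quoted in the article.
print(coverage_range(9, 0.66))
```

In this idealised model every point away from the ends of the row is imaged by two or three cameras, the kind of redundancy a multi-view 3D reconstruction can exploit.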

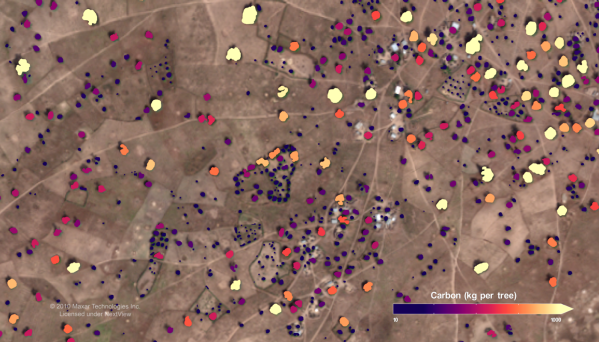

Three imaging configurations are possible: no downsampling (1x) for 13,000×11,250 pixels at 15 FPS, 2x downsampling for 6,500×5,625 pixels at 60 FPS, and 4x downsampling for 3,250×2,810 pixels at 230 FPS. Depending on whether the goal is to image fine features or rapid movement, this offers a range of options before the data is fed into the computational 3D reconstruction and stitching algorithm. That algorithm uses the overlap between the frames from different cameras to reconstruct the 3D image. In this paper, the result is paired with a convolutional neural network (CNN) to automatically determine, for example, how often the zebrafish are near the surface, as well as to analyze the behavior of fruit flies and harvester ants.
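
A quick back-of-the-envelope check shows what the three modes trade against each other. The sketch below simply multiplies the resolution and frame rate quoted above. It assumes the figures describe the final stitched image and ignores bit depth, the overlap between cameras and any readout overhead, so it is not a measure of the system's actual sensor bandwidth. `MODES` and `pixel_rate` are illustrative names:

```python
# Composite resolution and frame rate per downsampling mode, as quoted in the article.
# Treating these as the size of the final stitched image is an assumption.
MODES = {
    1: (13_000, 11_250, 15),
    2: (6_500, 5_625, 60),
    4: (3_250, 2_810, 230),
}


def pixel_rate(width: int, height: int, fps: int) -> int:
    """Pixels per second delivered at a given resolution and frame rate."""
    return width * height * fps


for factor, (width, height, fps) in MODES.items():
    gpx_per_s = pixel_rate(width, height, fps) / 1e9  # gigapixels per second
    print(f"{factor}x: {width}x{height} @ {fps} FPS -> {gpx_per_s:.2f} Gpx/s")
```

This works out to roughly 2.19, 2.19 and 2.10 gigapixels per second, so each downsampling step quarters the pixel count per frame in exchange for roughly four times the frame rate.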

As noted in an interview with the authors, possible applications could be found in developmental biology as well as pharmaceutics.