Mosquitoes tend to be seen as an almost universal negative, at least in the lives of humans. While they serve as a food source for plenty of other animals and may even pollinate some plants, they also carry diseases like malaria and Zika, and leave itchy bites besides. Various mosquito deterrents have been invented over the years to solve some of these problems, and one of the more interesting ones is this project by [Ildaron], which attempts to build a mosquito-tracking laser.

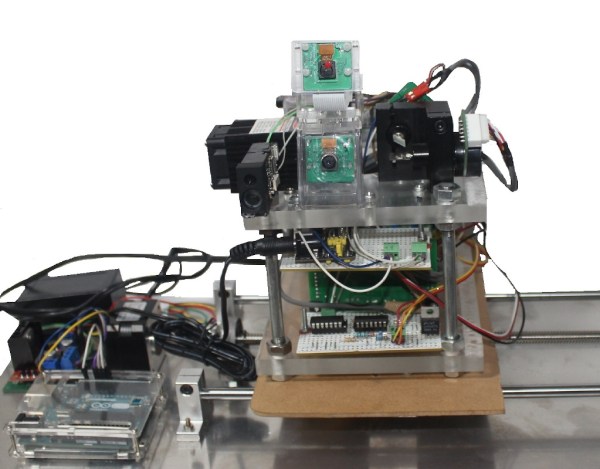

The device uses a neural network to identify mosquitoes flying nearby. Once a mosquito is detected, a laser is aimed at it and activated in order to “thermally neutralize” the pest. The control system and the neural network are hosted on a Raspberry Pi and Jetson Nano, which give it plenty of computing power. The only major downside of this specific project is that the high-powered laser can be harmful to humans as well.
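To give a feel for the "detect, then aim" step, here is a minimal Python sketch of how a detection in a camera frame could be turned into pan and tilt angles for a laser mount. This is not taken from [Ildaron]'s code. It assumes an ideal pinhole camera, a pan-then-tilt mount sitting at the camera's position, and a detector that returns a bounding box in pixels. The names `box_center` and `pixel_to_angles`, the frame size, and the field of view are all hypothetical.

```python
import math


def box_center(box):
    """Return the center (x, y) of a bounding box given as (x_min, y_min, x_max, y_max)."""
    x_min, y_min, x_max, y_max = box
    return (x_min + x_max) / 2, (y_min + y_max) / 2


def pixel_to_angles(cx, cy, width, height, hfov_deg):
    """Convert a pixel position to (pan, tilt) in degrees.

    Assumes an ideal pinhole camera with square pixels and a horizontal
    field of view of hfov_deg, with the mount co-located with the camera.
    """
    # Focal length expressed in pixels, derived from the horizontal field of view.
    focal_px = (width / 2) / math.tan(math.radians(hfov_deg) / 2)
    dx = cx - width / 2    # positive means right of the image center
    dy = height / 2 - cy   # positive means above center (image y grows downward)
    pan = math.degrees(math.atan2(dx, focal_px))
    # Tilt is measured after panning, so use the distance in the panned plane.
    tilt = math.degrees(math.atan2(dy, math.hypot(dx, focal_px)))
    return pan, tilt


if __name__ == "__main__":
    # Hypothetical detection in a 640x480 frame from a camera with a 90 degree FOV.
    detection = (400, 100, 420, 120)
    cx, cy = box_center(detection)
    pan, tilt = pixel_to_angles(cx, cy, 640, 480, 90.0)
    print(f"pan={pan:.1f} deg, tilt={tilt:.1f} deg")
```

A real build would also need to calibrate the offset between camera and laser, estimate distance to the target, and keep up with an insect that does not hold still, none of which this sketch attempts.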

Ideally, a market for devices like these would bring the price down, perhaps even through the use of something like an ASIC specifically developed for these mosquito-targeting machines. In the meantime, [Ildaron] has made this project available for replication on his GitHub page. We have also seen similar builds before that are effective against non-flying insects, so it seems like only a matter of time before there is more widespread adoption. Either that or Judgment Day!