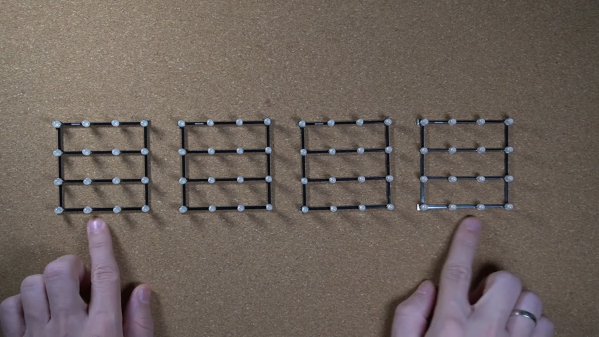

[Wendy] asked a very good question. Could putting liquid resin into an ultrasonic cleaner help degas it? Would it help remove bubbles, resulting in a cleaner pour and nicer end product? What we love is that she tried it out and shared her results. She purchased an ultrasonic cleaner and proceeded to mix two batches of clear resin, giving one an ultrasonic treatment and leaving the other untouched as a control.

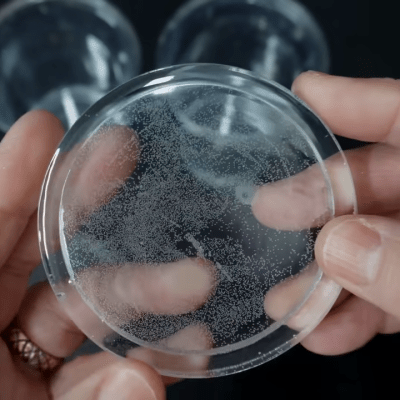

The results were interesting and unexpected. Initially, the resin in the ultrasonic bath showed visible bubbles rising to the surface, which seemed promising. Unfortunately, this did not lead to fewer bubbles in the end product.

[Wendy]’s measurements suggest that the main effect of putting resin in an ultrasonic bath was an increase in its temperature. Overheating the resin appears to have led to increased off-gassing and bubble formation prior to and during curing, which made for poor end results. The untreated resin, by contrast, cured with better color and much higher clarity. If you would like to skip directly to the results of the two batches, they’re right here at 9:15 in.

Does this mean it’s a total dead end? Maybe, but even if the initial results weren’t promising, it’s a pretty interesting experiment and we’re delighted to see [Wendy] walk through it. Do you think there’s any way to use the ultrasonic cleaner in a better or different way? If so, let us know in the comments.

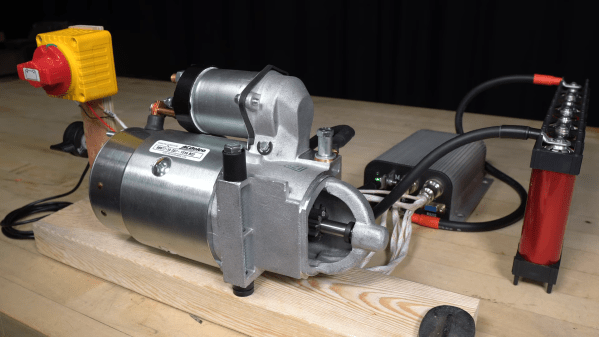

This isn’t the first time people have tried to degas epoxy resin by thinking outside the box. We’ve covered a very cheap method that offered surprising results, as well as a way to use a modified paint tank in lieu of purpose-made hardware.