Like most waves in the electromagnetic spectrum, radio waves tend to bounce off various objects. This can be frustrating for anyone trying to use something like a GMRS or LoRa radio in a dense city, but these reflections can also be put to productive use, most famously by radar. Radar has plenty of applications, such as weather forecasting and various military uses. With some software-defined radio tools, it’s also possible to use radar to track aircraft in real time at home, as this DIY radar system does.

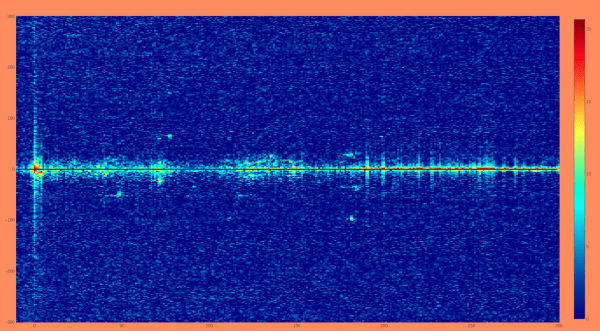

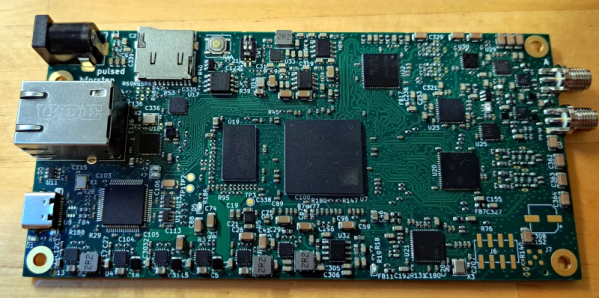

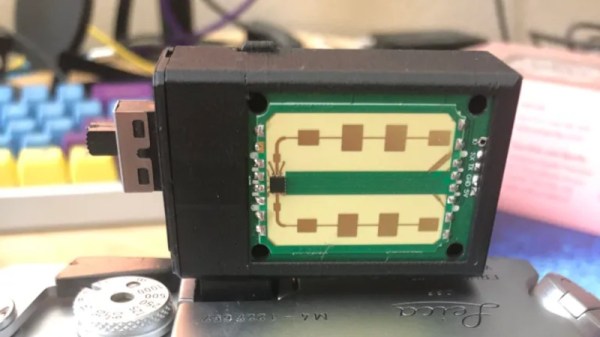

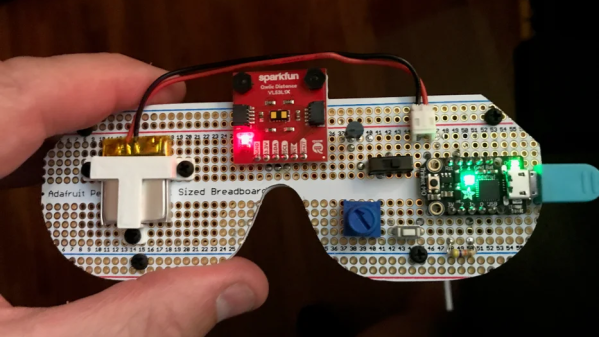

Unlike active radar systems, which transmit their own signal and look for its reflections, this is a passive radar system that uses radio waves already present in the environment to track objects. A reference antenna listens to the source signal on the target frequency, while in this installation a nine-element Yagi antenna is set up to listen for reflections. The signals from both antennas are fed into a computer program that compares the two to pick out the reflections of the reference signal heard by the Yagi.
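To give a feel for what "comparing the two" signals can involve, here is a minimal sketch in Python with NumPy. It is not the code from this project: it assumes the comparison is a delay-Doppler cross-correlation (often called a cross-ambiguity function), and it uses simulated noise in place of real SDR samples. The function names, sample rate, delay, and Doppler values are all made up for illustration, and a real system would likely also need to suppress the direct transmitter signal leaking into the Yagi channel, which is skipped here.

```python
import numpy as np


def simulate_channels(n=4096, sample_rate=10_000.0, delay=37, doppler=50.0, seed=0):
    """Make a fake reference channel and a fake echo channel (illustrative only)."""
    rng = np.random.default_rng(seed)
    # Noise-like stand-in for whatever broadcast signal is being reused.
    reference = rng.standard_normal(n) + 1j * rng.standard_normal(n)
    t = np.arange(n) / sample_rate
    # The echo is a weak, delayed, Doppler-shifted copy of the reference.
    echo = np.zeros(n, dtype=complex)
    echo[delay:] = reference[: n - delay]
    echo *= 0.1 * np.exp(2j * np.pi * doppler * t)
    noise = 0.05 * (rng.standard_normal(n) + 1j * rng.standard_normal(n))
    return reference, echo + noise


def cross_ambiguity(reference, surveillance, max_delay, doppler_bins, sample_rate):
    """Correlation magnitude for every (Doppler, delay) candidate."""
    n = len(reference)
    t = np.arange(n) / sample_rate
    caf = np.zeros((len(doppler_bins), max_delay + 1))
    for i, fd in enumerate(doppler_bins):
        # Apply the candidate Doppler shift to the reference.
        shifted = reference * np.exp(2j * np.pi * fd * t)
        for d in range(max_delay + 1):
            # np.vdot conjugates its first argument, so this is a correlation
            # of the echo channel against the delayed, shifted reference.
            caf[i, d] = np.abs(np.vdot(shifted[: n - d], surveillance[d:]))
    return caf


def strongest_echo(caf, doppler_bins):
    """Return (delay in samples, Doppler in Hz) of the largest peak."""
    i, d = np.unravel_index(np.argmax(caf), caf.shape)
    return int(d), float(doppler_bins[i])


if __name__ == "__main__":
    fs = 10_000.0
    ref, surv = simulate_channels(sample_rate=fs)
    bins = np.arange(-100.0, 101.0, 25.0)
    caf = cross_ambiguity(ref, surv, max_delay=100, doppler_bins=bins, sample_rate=fs)
    print(strongest_echo(caf, bins))
```

The delay of the peak corresponds to the extra distance the reflected signal traveled, and the Doppler shift to how quickly that distance is changing. The nested loops are written for readability; FFT-based correlation would be the usual way to speed this up.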

Even though a system like this doesn’t include any high-powered active elements, it still takes a considerable chunk of computing resources, and some skill to interpret the data presented by the software. [Nathan] aka [30hours] gives a fairly thorough overview of the system, which can even distinguish helicopters from other types of aircraft and uses the ADS-B monitoring system as a sanity check. Radar can be used to monitor other vehicles as well, like this 24 GHz radar module found in some modern passenger vehicles.
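One way an ADS-B sanity check could work, sketched here under assumptions rather than taken from the project itself, is to predict the echo delay from the aircraft's reported position and compare it with the delay the radar measured. The sketch below assumes all positions have already been converted to a local Cartesian frame in meters. The function names and the tolerance are hypothetical.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # meters per second


def bistatic_delay(transmitter, receiver, aircraft):
    """Extra travel time of the reflected path compared with the direct path.

    Each argument is an (x, y, z) position in meters in a shared local frame.
    """
    direct = math.dist(transmitter, receiver)
    reflected = math.dist(transmitter, aircraft) + math.dist(aircraft, receiver)
    return (reflected - direct) / SPEED_OF_LIGHT


def agrees_with_adsb(measured_delay, transmitter, receiver, aircraft, tolerance=2e-6):
    """True if the radar's delay matches the ADS-B position within tolerance (seconds)."""
    expected = bistatic_delay(transmitter, receiver, aircraft)
    return abs(measured_delay - expected) <= tolerance


if __name__ == "__main__":
    tx = (0.0, 0.0, 0.0)
    rx = (30_000.0, 0.0, 0.0)
    plane = (15_000.0, 20_000.0, 0.0)  # position as reported over ADS-B
    print(bistatic_delay(tx, rx, plane))
```

A detection whose delay lines up with a reported aircraft position is probably a real target, while one with no ADS-B match deserves a closer look.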
