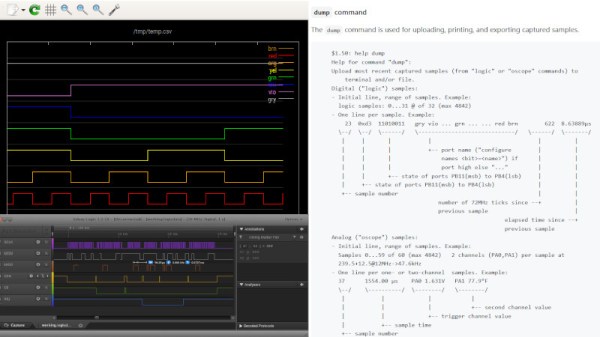

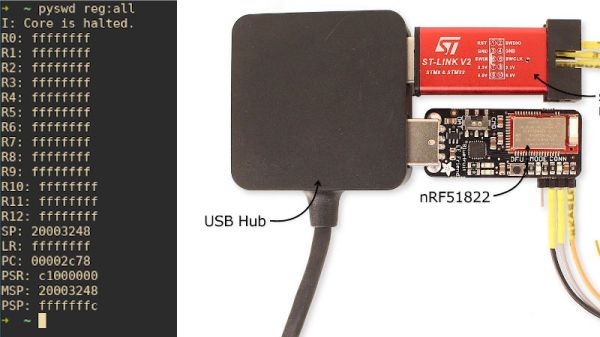

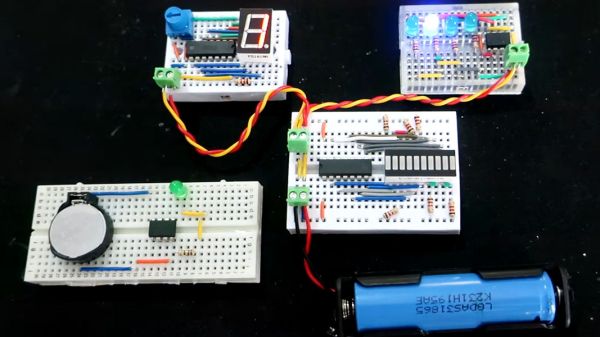

Not everyone can afford an oscilloscope, and some of us can’t find a USB logic analyzer half the time. But we can usually get our hands on a microcontroller kit, which can be turned into a makeshift instrument if given the appropriate code. A perfect example is buck50, developed by [Mark Rubin]: open source firmware that turns an STM32 “Blue Pill” into a multi-purpose test and measurement instrument.

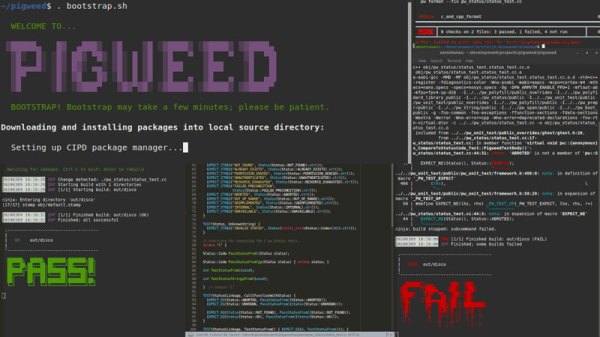

buck50 comes with a plethora of functionality built in, including an oscilloscope, logic analyzer, and bus monitor. The device is a two-way street and also offers GPIO control as well as PWM output. There’s really a remarkable amount of functionality crammed into the project. [Mark] provides a Python application that exposes a text-based UI for configuring and using the device through commands, and lots of them, which makes this really nerdy. There are a number of options for visualizing the captured data, including gnuplot, GTKWave, and PulseView, to name a few.

[Mark] does a fantastic job not only with the firmware but also with the documentation, and we really think this makes the project stand out. Commands are well documented, and everything is available on [GitHub] for your hacking pleasure. And if you are about to order a Blue Pill online, you might want to check out the nitty-gritty of the clones that are floating around.

Thanks [JohnU] for the tip!
