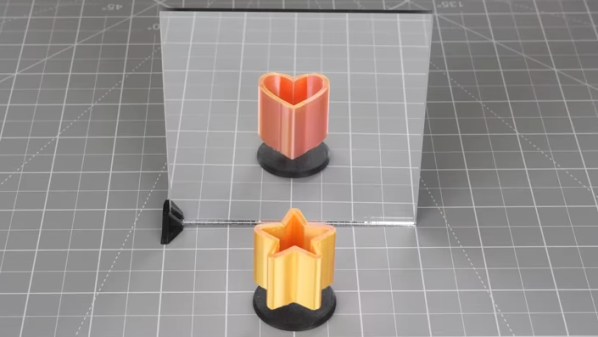

In an “under-door” attack, an attacker slides a tool through the gap underneath a door, hooks the interior handle from below, and opens the door by pulling the handle downward. This kind of attack works on the sort of doors and locks commonly found in hotels, where turning the handle from the inside always opens the door. [Michal Jirků] found himself in a hotel room with a particularly large gap underneath the door, and decided to quickly design and print a door guard to protect against just such an attack.

It’s a simple object, and twenty minutes of printing and a little double-sided tape are all it takes to deploy. Because an attacker performs an under-door attack at a sizable mechanical disadvantage, it doesn’t take much to frustrate the attempt, and that’s exactly what the object does. Physical security in hotels is especially important, after all, and crooks have been known to take advantage of known flaws like the face-palmingly bad Onity key card lock vulnerability.

If you’re having trouble picturing how it all works, this video demonstrates an under-door attack in action, so you can see how blocking the space below the handle would easily keep the tool from getting where it needs to go.