It’s getting easier and easier to add machine intelligence to your hacks, even to the point where you sometimes don’t have to install any special software. In this case [Dexter Industries] has added the ability to read human emotions to their EmpathyBot robot by making use of Google Cloud Vision.

Press a button on the robot and it drives forward until it is a set distance from an object. It then takes a picture and sends it to Google Cloud Vision along with a face-detection request. Google returns its response in JSON format and, if it finds a face, the response includes the likelihood that the face is happy, sorrowful, angry or surprised. The robot parses that response and speaks an appropriate canned phrase through the eSpeak text-to-speech software, e.g. “You seem happy! Tell me why you are so happy!”
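The request/response loop described above can be sketched in Python, the language EmpathyBot’s source is written in. This is a minimal illustration under stated assumptions, not Dexter Industries’ code: it calls the public Cloud Vision `images:annotate` REST endpoint with an API key (the project may authenticate differently), the `PHRASES` table and the `POSSIBLE` threshold are hypothetical, and `speak()` assumes the `espeak` command-line tool is installed and on the path.

```python
import base64
import json
import subprocess
import urllib.request

# Cloud Vision likelihood values, ordered weakest to strongest.
LIKELIHOOD_RANK = {
    "UNKNOWN": 0,
    "VERY_UNLIKELY": 1,
    "UNLIKELY": 2,
    "POSSIBLE": 3,
    "LIKELY": 4,
    "VERY_LIKELY": 5,
}

# Hypothetical canned phrases, keyed by the prefix of each *Likelihood field.
# Only the "joy" line is quoted from the article; the others are illustrative.
PHRASES = {
    "joy": "You seem happy! Tell me why you are so happy!",
    "sorrow": "You seem sad. What is wrong?",
    "anger": "You seem angry. Please calm down.",
    "surprise": "You seem surprised! What happened?",
}


def build_request(image_bytes: bytes) -> dict:
    """Build an images:annotate request body that asks only for face detection."""
    return {
        "requests": [{
            "image": {"content": base64.b64encode(image_bytes).decode("ascii")},
            "features": [{"type": "FACE_DETECTION", "maxResults": 1}],
        }]
    }


def annotate(image_bytes: bytes, api_key: str) -> dict:
    """POST the request to the Cloud Vision REST endpoint and return the parsed JSON."""
    url = "https://vision.googleapis.com/v1/images:annotate?key=" + api_key
    data = json.dumps(build_request(image_bytes)).encode("utf-8")
    req = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def strongest_emotion(response: dict, threshold: str = "POSSIBLE"):
    """Return 'joy', 'sorrow', 'anger' or 'surprise' for the first face found,
    or None if there is no face or no emotion reaches the threshold."""
    responses = response.get("responses") or [{}]
    faces = responses[0].get("faceAnnotations", [])
    if not faces:
        return None
    face = faces[0]
    # Map each emotion to the numeric rank of its likelihood string.
    scores = {
        emotion: LIKELIHOOD_RANK.get(face.get(emotion + "Likelihood", "UNKNOWN"), 0)
        for emotion in PHRASES
    }
    best = max(scores, key=scores.get)
    if scores[best] < LIKELIHOOD_RANK[threshold]:
        return None
    return best


def speak(text: str) -> None:
    """Hand the phrase to the eSpeak command-line tool."""
    subprocess.run(["espeak", text], check=True)
```

On the robot the pieces would chain together roughly as `emotion = strongest_emotion(annotate(photo_bytes, api_key))`, followed by `speak(PHRASES[emotion])` whenever `emotion` is not `None`. Keeping the parsing in a pure function means it can be checked against a saved JSON response without a camera or network connection.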

[Dexter] has made the source code available on GitHub. It’s written in Python and is easy to read for anyone with even a little programming experience. The video after the break shows several demonstrations, including some with non-human subjects.

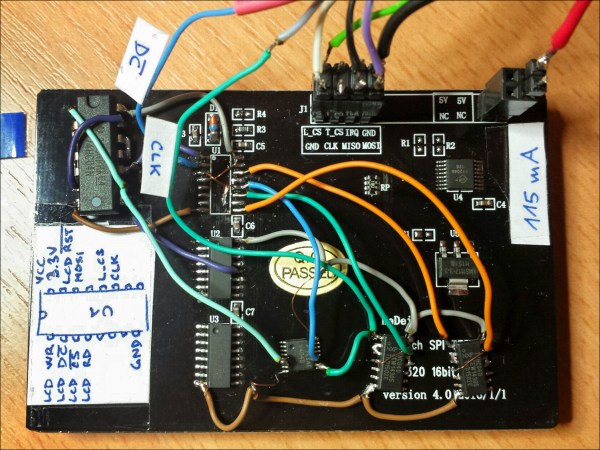

There are 480 LEDs in his display, and he addresses them through TLC5927 shift registers. Synchronisation is provided by a Hall-effect sensor and magnet to detect the start of each rotation, and the Teensy adjusts its pixel rate based on that timing. He’s provided extremely comprehensive documentation with code and construction details in the GitHub repository, including