Now that we drive around in cars that are more like mobile data centers than simple transportation, there’s a wealth of data to be harvested when the inevitable crashes occur. After a recent Tesla crash on a California highway, a security researcher got hold of the car’s “black box” and extracted some terrifying insights into just how bad a car crash can be. The interesting bit is the view of the crash from the Tesla’s forward-facing cameras with object detection overlays. Putting aside the fact that the driver of this car was accelerating up to the moment it rear-ended the hapless Honda with a closing speed of 63 MPH (101 km/h), the update speeds on the bounding boxes and lane sensing are incredible. The author of the article uses this as an object lesson in why Level 2 self-driving is a bad idea, and while I agree with that premise, the fact that self-driving had been disabled 40 seconds before the driver plowed into the Honda seems to make that argument moot. Tech or not, someone this unskilled or impaired was going to have an accident eventually, and it was just bad luck for the other driver.

Last week I shared a link to Scan the World, an effort to 3D-scan and preserve culturally significant artifacts and create a virtual museum. Shortly after the article ran, we got an email from Elisa at Scan the World announcing their “Unlocking Lockdown” competition, which encourages people to scan cultural artifacts and treasures directly from their homes. You may not have a Ming Dynasty vase or a Grecian urn on display in your parlor, but you’ve probably got family heirlooms, knick-knacks, and other tchotchkes that should be preserved. Take a look around and scan something for posterity. And I want to thank Elisa for the link to the Pompeiian bread that I mentioned.

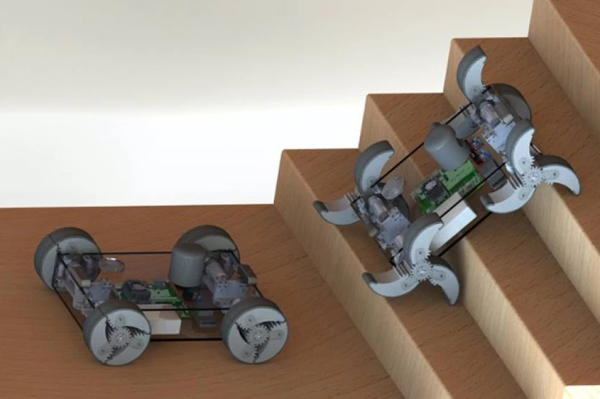

The Defense Advanced Research Projects Agency (DARPA) has been running an interesting challenge for the last couple of years: the Subterranean (SubT) Challenge. The goal is to discover new ways to operate autonomously below the surface of the Earth, whether for mining, search and rescue, or warfare applications. They’ve been running different circuits to simulate various underground environments, with the most recent circuit being a cave course back in October. On Tuesday, November 17, DARPA will webcast the competition, which features 16 teams and their autonomous search for artifacts in a virtual cave. It could make for interesting viewing.

If underground adventures don’t do it for you, how about going upstairs? LeoLabs, a California-based company that specializes in providing information about satellites, has a fascinating visualization of the planet’s satellite constellation. It’s sort of like Google Earth but with the details focused on low Earth orbit. You can fly around the planet and watch the satellites whiz by or even pick out the hundreds of spent upper-stage rockets still up there. You can lock onto a specific satellite, watch for near-misses, or even turn on a layer for space debris, which honestly just turns the display into a purple miasma of orbiting junk. The best bit, though, is the easily discerned samba-lines of newly launched Starlink satellites.

Doorbells used to be pretty simple devices, but like many things, they’ve taken on added complexity. And danger, it appears, as Amazon Ring doorbell users are reporting their new gadgets going up in flames upon installation. The problem stems from installers confusing the screws supplied with the unit. The longer wood screws are intended to mount the device to the wall, while a shorter security screw secures the battery cover. Mix the two up for whatever reason, and the sharp point of the mounting screw can find the LiPo battery within, with predictable results.

And finally, it may be the shittiest of shitty robots: a monstrous robotic wolf intended to scare away wild bears. It seems the Japanese town of Takikawa has been having a problem with bears lately, so they deployed a pair of these improbable-looking creatures to protect themselves. It’s hard to say what’s the best feature: the flashing LED eyes, the strobe light tail, or the fact that the whole thing floats in the air atop a pole. Whatever it is, it seems to work on bears, which is probably good enough. Take a look in the video below the break.

Continue reading “Hackaday Links: November 15, 2020” →