Isn’t it convenient when your pick-and-place machine arrives with a fully set-up computer inside of it? Plug in a keyboard, mouse and monitor, and you have a production line ready to go. Turns out, you can let third parties partake in your convenience by sharing your private information with them, as long as you plug in an Ethernet cable! [Richard] from [RM Cybernetics] purchased a ZhengBang ZB3245TSS machine and, while setting it up, dutifully backed up its software onto a USB stick, as we all ought to.

This bit of extra care, often skipped by fellow hackers, triggered an antivirus scanner alert and subsequently netted some interesting results on VirusTotal, with 53 out of 69 engines flagging one particular file. That still wasn’t conclusive enough, so they sent the suspicious file off for analysis, and it came back positive. Static and dynamic analysis by a third party confirmed that the malware collects metadata accessible to the machine and sends it all to an outside server. When contacted about this mishap, ZhengBang replied with a letter assuring them that the files were harmless, along with a .zip attachment of replacement “clean” files that passed the antivirus checks.

It didn’t end there! After installing the “clean” files, they also ran a few anti-malware tools, and all seemed fine. Then they plugged the flash drive into another computer… and encountered even more alerts than before. The malware was equipped with a mechanism to graft a copy of itself onto every accessible .exe on sight, infecting even the executables of the anti-malware tools they had put on that USB drive. The article implies that the malware could have been planted on the machines to collect your company’s proprietary design information. We haven’t found much data to support that assertion, however; plausible as that intention is, this could just as well be an unrelated virus that spread through the factory. Surprisingly, none of these discoveries count as a violation of AliExpress’s Terms and Conditions, so if you’d like to distribute a bunch of IoT malware on, say, wireless routers you bought in bulk, now you know of a platform that will help you!

This goes in our bin of Pretty Bad News for makers and small companies. If you happen to have a ZhengBang pick-and-place machine with a built-in computer, we recommend that you read the article and investigate your own setup. The article also goes into detail on how to reinstall Windows while keeping all the drivers and software libraries working, but we strongly recommend that you first worry about how far this machine’s infection mechanism may already have spread.

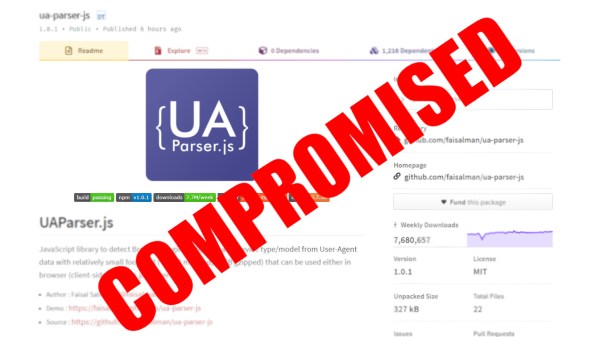

Supply chain attacks, eh? We’ve seen plenty of these lately, with communities and software repositories being targeted every now and then. Malware embedded into devices at the factory isn’t a stranger to us either, but at least this time we have way more information than we did when Supermicro was under fire.

Editor’s Note: As pointed out by our commenters, there’s currently not enough evidence to assert that Zhengbang’s intentions were malicious. The article has been edited to reflect the situation more accurately, and will be updated if more information becomes available.

Editor’s Note Again: A rep from Zhengbang showed up in the comments and claimed that this was indeed a virus they had picked up and unintentionally passed on to their end clients.