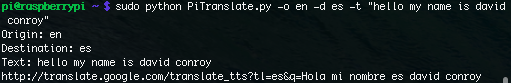

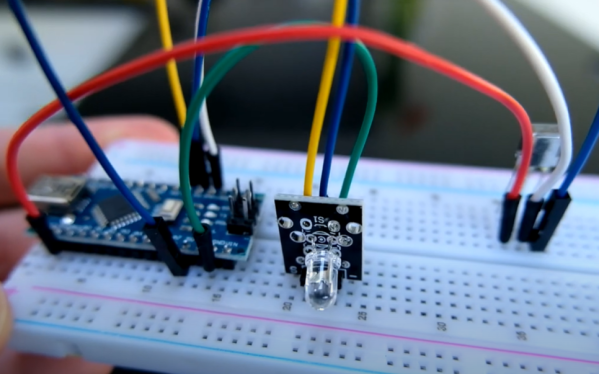

Universal remotes are handy if you have many devices that would otherwise each need their own remote control. Merging them into a single device means less clutter and less frustration, but they are often not truly “universal”, since a given remote may not support every infrared device that has ever been built. If you’re in that situation, it’s possible to build a truly universal remote instead, provided you have a microcontroller and a few infrared LEDs on hand.

This was the situation that [Matt] found himself in when his Amazon Fire TV equipment control feature didn’t support his model of speakers. To get around this, he programmed an Arduino to essentially translate the IR codes from the remote and output a compatible set of codes to the speakers. This requires both an IR photodiode and an IR LED, but little else other than the codes for the remote and the equipment in question. With that all set up and programmed into the Arduino, [Matt]’s remote is one step closer to being truly “universal”.
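The translation step itself boils down to a lookup table: a code the receiver recognizes is mapped to the code the speakers expect. Here is a minimal sketch of that idea in plain Python rather than Arduino code. It is not [Matt]’s implementation; the protocol name, the addresses, the command values, and the `translate` helper are all hypothetical placeholders.

```python
# Hypothetical lookup table: (protocol, address, command) seen from the
# remote -> (protocol, address, command) to re-send to the speakers.
# None of these values come from the original project.
TRANSLATION_TABLE = {
    ("NEC", 0x04, 0x02): ("NEC", 0x7A, 0x1A),  # volume up (made-up codes)
    ("NEC", 0x04, 0x03): ("NEC", 0x7A, 0x1B),  # volume down (made-up codes)
    ("NEC", 0x04, 0x09): ("NEC", 0x7A, 0x1C),  # mute (made-up codes)
}


def translate(received):
    """Return the code to transmit for a received code, or None to ignore it."""
    return TRANSLATION_TABLE.get(received)


if __name__ == "__main__":
    for code in [("NEC", 0x04, 0x02), ("NEC", 0x04, 0x55)]:
        print(code, "->", translate(code))
```

Returning `None` for unknown codes means the translator stays silent for buttons it has no mapping for, rather than sending something arbitrary to the speakers.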

While [Matt] was able to make use of existing codes in the Arduino library, it is also possible to capture the required codes manually by pointing a remote at a photodiode and programming a microcontroller to record them. [Matt] used a Raspberry Pi to do this when debugging this project, but we’ve also seen this method used with a similar build which uses an ESP8266 to control an air conditioner via its infrared remote control capabilities.
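To give a feel for what capturing a code involves, here is a rough sketch that turns a list of measured mark/space durations into an address and command. It assumes the remote uses the common NEC protocol, which the article does not state, and the function names `encode_nec` and `decode_nec` are made up for illustration. A real capture would come from timing the receiver’s output pin; here the encoder just generates ideal timings to feed the decoder.

```python
# Nominal NEC timings in microseconds (assumption: the remote speaks NEC).
LEADER_MARK, LEADER_SPACE = 9000, 4500
BIT_MARK, ZERO_SPACE, ONE_SPACE = 562.5, 562.5, 1687.5


def encode_nec(address, command):
    """Build the ideal mark/space durations for one standard NEC frame."""
    payload = [address & 0xFF, ~address & 0xFF, command & 0xFF, ~command & 0xFF]
    timings = [LEADER_MARK, LEADER_SPACE]
    for byte in payload:
        for bit in range(8):  # NEC sends each byte least significant bit first
            timings.append(BIT_MARK)
            timings.append(ONE_SPACE if (byte >> bit) & 1 else ZERO_SPACE)
    timings.append(BIT_MARK)  # trailing mark that terminates the last space
    return timings


def decode_nec(timings, tolerance=0.25):
    """Return (address, command) from captured durations, or None if invalid."""
    def near(value, target):
        return abs(value - target) <= target * tolerance

    if len(timings) < 67:  # 2 leader entries + 32 bits * 2 + 1 trailing mark
        return None
    if not near(timings[0], LEADER_MARK) or not near(timings[1], LEADER_SPACE):
        return None

    data = []
    for i in range(4):
        byte = 0
        for bit in range(8):
            idx = 2 + 2 * (i * 8 + bit)
            mark, space = timings[idx], timings[idx + 1]
            if not near(mark, BIT_MARK):
                return None
            if near(space, ONE_SPACE):
                byte |= 1 << bit
            elif not near(space, ZERO_SPACE):
                return None
        data.append(byte)

    address, address_inv, command, command_inv = data
    # Each byte is followed by its bitwise inverse as a simple integrity check.
    if address ^ address_inv != 0xFF or command ^ command_inv != 0xFF:
        return None
    return address, command


if __name__ == "__main__":
    print(decode_nec(encode_nec(0x04, 0x08)))
```

The tolerance is there because real captures jitter; 25% is an arbitrary starting point, not a measured value.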


A team of students in Antwerp, Belgium are responsible for
