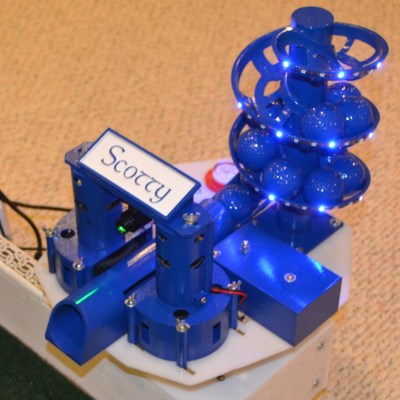

Inspired by the classic game Robo Rally, [Ytec3D]’s Clockwork Derby takes tabletop gaming to the next level by combining steampunk aesthetics with automation. We recently had the chance to see it live at Hackfest, together with [Ytec3D]’s animatronic tentacle, and we can say that his new take on playful robotics offers a unique experience for game enthusiasts. The 300×420 mm board uses magnets, motors, and card readers to handle up to eight players, creating a smooth, automated version of Robo Rally where players can focus on strategy while the board handles movement.

In Clockwork Derby, game pieces are moved by a magnetic system controlled by the board, which rotates and shifts pieces in real time. Each player uses a card reader to program moves, with up to five cards per round. The board scans these cards via barcode scanners, so you don’t have to worry about tracking your moves or adjusting game pieces manually. [Ytec3D]’s game rules have been optimized for the automated setup, allowing for smoother gameplay and an emphasis on strategic choices.
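For readers who want a feel for what "programming" a round means, here is a minimal Python sketch of the idea. It is not [Ytec3D]’s firmware. The card names (`MOVE1`, `LEFT`, `RIGHT`), the 8×8 grid, the rule that pieces stop at the board edge, and the slot-by-slot play order borrowed from Robo Rally are all assumptions made for illustration. Only the five-card limit comes from the description above.

```python
from dataclasses import dataclass

MAX_CARDS = 5  # from the article: up to five cards per player per round

# Assumed heading order: north, east, south, west as (dx, dy) steps.
HEADINGS = [(0, -1), (1, 0), (0, 1), (-1, 0)]


@dataclass
class Piece:
    x: int
    y: int
    heading: int = 0  # index into HEADINGS


def apply_card(piece, card, width, height):
    """Apply one hypothetical card to a piece on a width x height grid."""
    if card == "LEFT":
        piece.heading = (piece.heading - 1) % 4
    elif card == "RIGHT":
        piece.heading = (piece.heading + 1) % 4
    elif card.startswith("MOVE") and card[4:].isdigit():
        dx, dy = HEADINGS[piece.heading]
        for _ in range(int(card[4:])):
            nx, ny = piece.x + dx, piece.y + dy
            if not (0 <= nx < width and 0 <= ny < height):
                break  # assumed rule: a piece stops at the board edge
            piece.x, piece.y = nx, ny
    else:
        raise ValueError(f"unknown card: {card!r}")


def run_round(pieces, programs, width=8, height=8):
    """Play one round: card slot 1 for every player, then slot 2, and so on."""
    for name, cards in programs.items():
        if len(cards) > MAX_CARDS:
            raise ValueError(f"{name} programmed more than {MAX_CARDS} cards")
    for slot in range(MAX_CARDS):
        for name, cards in programs.items():
            if slot < len(cards):
                apply_card(pieces[name], cards[slot], width, height)


pieces = {"red": Piece(0, 0, heading=1), "blue": Piece(3, 3)}
programs = {"red": ["MOVE2", "RIGHT", "MOVE1"], "blue": ["LEFT", "MOVE1"]}
run_round(pieces, programs)
print(pieces)
```

On the real board, the step that updates `piece.x` and `piece.y` would correspond to the magnets physically dragging the piece, and the `programs` dictionary would be filled in by the barcode scanners rather than typed by hand.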

The project is a standout for hackers and tinkerers who appreciate blending physical mechanics with digital precision. It’s a great example of how classic games can be modernized with a bit of ingenuity and tech. For those interested in DIY gaming projects or automation, Clockwork Derby is definitely worth exploring. To dive deeper into the build details and see more of the project, visit [Ytec3D]’s project page for an in-depth look at this inventive tabletop game!
