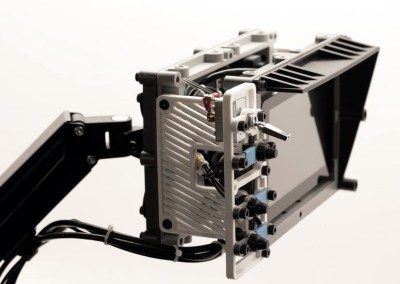

Inspired by films such as The Matrix, where hackers are surrounded by displays and keyboards on articulated arms, [Jay Doscher] created this cyberpunk “floating” terminal so your favorite Linux single board computer is always close at hand. Do you actually need such a thing mounted to the wall next to the workbench? Probably not. But when has that ever stopped a Hackaday reader?

[Jay] has come up with a modular design for the “A.R.M. Terminal” that allows the user to easily augment it with additional hardware. The 3D printed frame of the terminal has hardpoints for bolting on new modules, and thanks to threaded metal inserts, they should have no problem surviving multiple reconfigurations.

This initial version features a panel on the left side that holds various buttons and switches attached to the Pi’s GPIO pins. With a bit of code, it’s easy to pick up the status of these controls and use them to fire off whatever tasks your imagination can come up with. On the bottom [Jay] has mounted a stand-alone VFD audio spectrum display that’s hooked up to the Pi’s 3.5 mm jack. It’s totally unnecessary and costs as much as the Raspberry Pi itself, but it sure is pretty.
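
To give a rough idea of what that "bit of code" might look like, here is a minimal Python sketch that fires an action each time a control goes from off to on. It is not [Jay]'s code: the pin numbers, the `make_dispatcher` and `gpiozero_reader` helper names, and the choice of the gpiozero library are all assumptions made for illustration.

```python
from typing import Callable, Dict, Iterable, List


def make_dispatcher(actions: Dict[int, Callable[[], None]],
                    read_pin: Callable[[int], bool]) -> Callable[[], List[int]]:
    """Return a poll() function that runs an action on each off-to-on transition.

    `actions` maps a pin number to a callable; `read_pin` reports whether the
    control on that pin is currently active. Both are hypothetical names.
    """
    last = {pin: False for pin in actions}

    def poll() -> List[int]:
        fired = []
        for pin, action in actions.items():
            state = read_pin(pin)
            # Only react to a fresh press or flip, not to a control held on.
            if state and not last[pin]:
                action()
                fired.append(pin)
            last[pin] = state
        return fired

    return poll


def gpiozero_reader(pins: Iterable[int]) -> Callable[[int], bool]:
    """Build a read_pin function backed by gpiozero (assumes it is installed)."""
    from gpiozero import Button  # imported lazily so the sketch loads off-Pi

    buttons = {pin: Button(pin) for pin in pins}
    return lambda pin: buttons[pin].is_pressed
```

On the Pi itself, something like `poll = make_dispatcher({17: start_backup}, gpiozero_reader([17]))` followed by calling `poll()` in a loop with a short sleep would do the job, where `start_backup` and BCM pin 17 are placeholders for whatever task and wiring you actually have. Passing the pin reader in as an argument also means the dispatch logic can be tried out on a desktop machine with a fake reader before any switches are soldered.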

If there’s a downside to the design, it’s that the only display currently supported is the official Raspberry Pi touchscreen, which is only 800×480 and a bit pricey compared to more modern panels. On the other hand, there’s something to be said for the standardized bolt pattern on the back of the official screen. If you want to use a higher resolution display, be prepared to design your own mounting bracket. Extra points if you share your changes with the rest of the class.

For anyone who likes the look of the A.R.M. Terminal but isn’t too keen on being tethered to the wall, you’re in luck. [Jay] previously created the Raspberry Pi Recovery Kit which shares many of the same design principles but puts them into a ruggedized case that’s ready for life in the field.