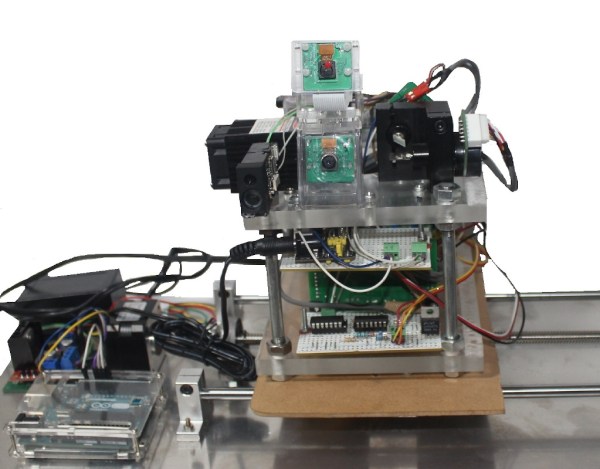

If you want to do some really advanced flying with drones, you typically need to be able to track them in space. [Joshua Bird] has whipped up a drone tracking system that can do the job for as little as $20 with millimeter-scale precision.

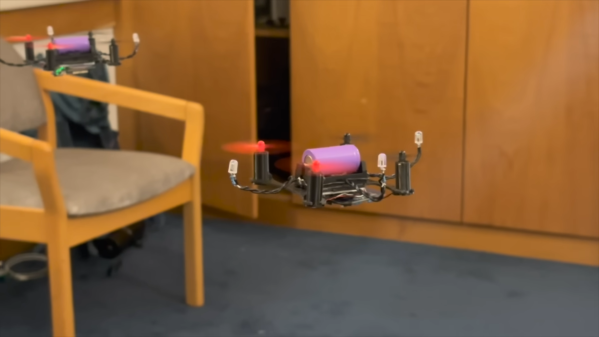

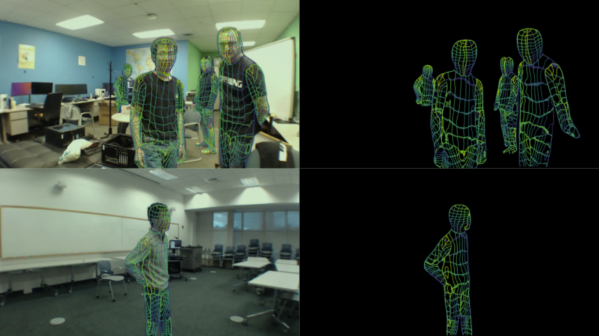

The system uses four PS3 Eye cameras, which can be had second-hand for just $5 each. They’re modified by removing the IR cut filter and fitting an IR-pass filter in its place, in the form of a cut-up slice of floppy disk. The system tracks the drones via their infrared markers and the known locations of the four cameras, which it is capable of mapping out automatically. With four cameras, tracking stays robust even when one camera’s view is occluded. The system can track multiple drones at the same time, with [Joshua] demonstrating it working with two drones each carrying three infrared markers. He has the system set up to send positional updates to ESP32 microcontrollers on the drones themselves, which use them to hold the drones at set positions.
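To give a feel for how a marker's position can be recovered from several cameras at known locations, here is a minimal sketch of least-squares ray triangulation. It is not taken from [Joshua]'s code. It assumes each camera's detection has already been turned into a ray in world coordinates (a camera center plus a direction toward the marker), and the names `triangulate` and `solve3` are made up for illustration. Cameras that can't see the marker are simply left out, which is why extra cameras help with occlusion.

```python
import math


def solve3(a, b):
    """Solve the 3x3 system a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("rays are parallel, so the position is not determined")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(3):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][3] / m[i][i] for i in range(3)]


def triangulate(rays):
    """Return the point closest (in the least-squares sense) to all rays.

    rays: list of (camera_center, direction) pairs in world coordinates.
    Only cameras that currently see the marker should be included.
    """
    if len(rays) < 2:
        raise ValueError("need at least two cameras that see the marker")
    a = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for center, direction in rays:
        norm = math.sqrt(sum(x * x for x in direction))
        d = [x / norm for x in direction]
        for i in range(3):
            for j in range(3):
                # (I - d d^T) projects onto the plane perpendicular to the ray.
                p = (1.0 if i == j else 0.0) - d[i] * d[j]
                a[i][j] += p
                b[i] += p * center[j]
    return solve3(a, b)


# Hypothetical setup: four cameras in the corners of a 4 m room, 3 m up.
cameras = [(0.0, 0.0, 3.0), (4.0, 0.0, 3.0), (4.0, 4.0, 3.0), (0.0, 4.0, 3.0)]
marker = (1.0, 2.0, 0.5)
rays = [(c, tuple(m - ci for m, ci in zip(marker, c))) for c in cameras]

estimate_all = triangulate(rays)      # all four cameras see the marker
estimate_two = triangulate(rays[:2])  # two cameras occluded
```

With noise-free rays, both estimates land on the marker position. In a real setup the rays come from pixel coordinates passed through each camera's calibration, so they never intersect exactly, and the least-squares solution averages out the disagreement.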

Code is available on GitHub for the curious. We’ve seen other similar work before, too.
