It’s amazing how hackers nowadays build increasingly complex hardware with SMD parts as small as grains of sand. Getting multilayer PCBs and solder stencils in small quantities for prototyping is easier than ever. But pick-and-place (PnP), the process of taking parts and stuffing them onto the PCB in preparation for soldering, remains elusive for several reasons. For one, it makes sense only if you plan to do volume production, since the cost and time of just setting up a PnP machine for a small run are prohibitive. And desktop PnP machines aren’t yet as ubiquitous as 3D printers. So placing parts on the board is one step that still has to be done manually. Just make sure you don’t sneeze while you’re doing it.

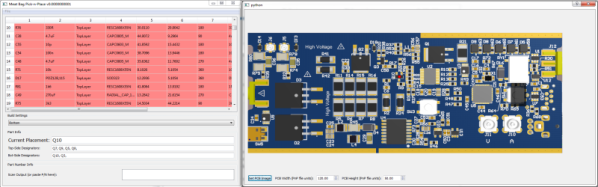

Of course, the human is the slow part of this process. [Colin O’Flynn] wrote a Python script, which he calls MeatBagPnP, to ease this bottleneck. It reads a row from the parts position file generated by your EDA program and highlights, on a render of the board, where that part needs to be placed. The human then does what a robotic PnP machine would have done.

A barcode scanner isn’t necessary, but using one does make the process a bit quicker. When you scan the code on a part bag, the script highlights the matching row in the spreadsheet and puts a marker on the first instance of that part on the board. After you’ve placed the part, pressing the space bar moves the marker to the next instance of the same value. Once all parts of that value are populated, the script indicates it’s done and you can move on to the next part. If you don’t have a barcode scanner handy, you can select a row manually and the script will show you where to put that part. Check it out in the video below.
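To make the scan-then-step workflow concrete, here is a minimal sketch of the bookkeeping it implies. This is not MeatBagPnP’s code: the CSV column names (`Ref`, `Val`, `PosX`, `PosY`, `Side`), the `PlacementSession` class, and the assumption that a scanned barcode maps directly onto the `Val` column are all hypothetical, chosen only for illustration.

```python
import csv
import io
from collections import OrderedDict

# Hypothetical position-file contents. The column names are assumptions modeled
# on a typical EDA export, not MeatBagPnP's actual input format.
SAMPLE_POS = """Ref,Val,PosX,PosY,Side
C1,100nF,10.0,5.0,top
C2,100nF,20.0,5.0,top
R1,10k,15.0,12.5,top
C3,100nF,30.0,7.5,bottom
"""


def group_by_value(pos_text):
    """Group placement rows by component value, preserving file order."""
    groups = OrderedDict()
    for row in csv.DictReader(io.StringIO(pos_text)):
        groups.setdefault(row["Val"], []).append(row)
    return groups


class PlacementSession:
    """Hypothetical helper: step through every instance of one value."""

    def __init__(self, groups):
        self.groups = groups
        self.queue = []

    def scan(self, value):
        # A barcode scan (or a manually selected row) chooses the value to place.
        # Assumption: the scanned string matches the Val column exactly.
        self.queue = list(self.groups.get(value, []))
        return self.current()

    def current(self):
        # The part the marker should currently point at, or None when done.
        return self.queue[0] if self.queue else None

    def advance(self):
        # Equivalent of pressing the space bar after placing the current part.
        if self.queue:
            self.queue.pop(0)
        return self.current()
```

A short usage run: scanning `100nF` walks through C1, C2, and C3 in file order, then returns `None` to signal that every part with that value has been placed.

```python
session = PlacementSession(group_by_value(SAMPLE_POS))
part = session.scan("100nF")
while part is not None:
    print(f"Place {part['Ref']} at ({part['PosX']}, {part['PosY']}) on {part['Side']}")
    part = session.advance()
```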

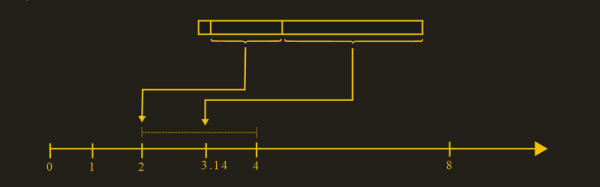

Of course, the tool needs some preparation before you can use it. You need a good PNG image of the board (both sides if it is double-sided), scaled so that it matches the dimensions of the target board. The parts position file generated by your EDA tool must use the lower-left corner of the board as its origin. You then tell the tool the board dimensions, and it scales everything so that it can put the red markers at the designated XY positions. The script works for both single- and double-sided boards. For a board with just a few parts it may not be worth the trouble, but if you are manually populating a complex board with lots of parts, a script like this could make the process a lot less painful.
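The scaling step boils down to a simple coordinate transform: the position file measures from the board’s lower-left corner with +Y pointing up, while image pixels are counted from the top-left corner with +Y pointing down. The sketch below shows one way to do that mapping. It is not taken from MeatBagPnP; the `BoardImage` type and `board_to_pixel` function are hypothetical, and the bottom-side mirroring is an assumption about how a bottom render would be oriented.

```python
from dataclasses import dataclass


@dataclass
class BoardImage:
    """A PNG render cropped exactly to the board outline (assumption: no margin)."""
    width_px: int
    height_px: int


def board_to_pixel(x_mm, y_mm, board_w_mm, board_h_mm, image, bottom_side=False):
    """Map a position-file coordinate (origin at the board's lower-left corner,
    +Y up) to an image pixel (origin at the image's top-left corner, +Y down)."""
    if not (0 <= x_mm <= board_w_mm and 0 <= y_mm <= board_h_mm):
        raise ValueError("coordinate lies outside the board outline")
    sx = image.width_px / board_w_mm   # pixels per mm, horizontal
    sy = image.height_px / board_h_mm  # pixels per mm, vertical
    if bottom_side:
        # Assumption: the bottom-side image is rendered as seen from below,
        # i.e. mirrored left-to-right relative to top-side coordinates.
        x_mm = board_w_mm - x_mm
    px = x_mm * sx
    py = (board_h_mm - y_mm) * sy  # flip Y: EDA +Y points up, image rows count down
    return round(px), round(py)
```

For example, on a 50 × 40 mm board rendered at 1000 × 800 px (20 px/mm), a top-side part at (10, 5) mm lands at pixel (200, 700), while the same coordinate on the mirrored bottom image lands at (800, 700).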

The project is still fresh and rough around the edges, so if you have comments or feedback to offer, [Colin] is listening.

[Colin]’s name ought to ring a bell: he’s the hacker who built ChipWhisperer, which took 2nd Prize at The Hackaday Prize in 2014. MeatBagPnP grew out of his experience building increasingly complex boards by hand and trying to make that process easier. In addition to the walk-through of how the script works, after the break we’ve embedded his other video from three years back, in which he stuffs parts (including BGAs) the hard way and then reflows them in a Chinese oven with hacked firmware.
