Software-defined radio is all the rage these days, and for good reason. It eliminates or drastically reduces the amount of pricey equipment needed to transmit or even just receive, and can pack in many more features than most affordable radio setups would otherwise have. It also makes it much easier to go mobile. [Rostislav Persion] uses a laptop for on-the-go SDR activities, and designed this 3D printed antenna mount to make his radio adventures much easier.

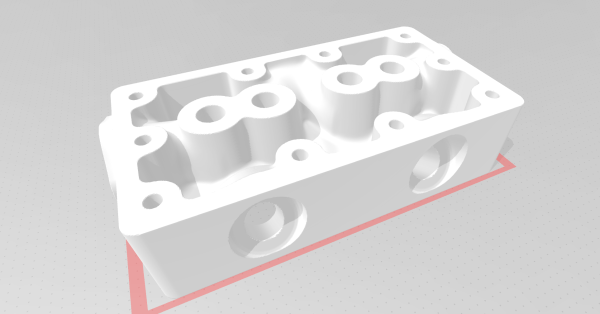

The antenna mount is a small 3D printed enclosure for his NESDR Smart Dongle with a wide base to attach to the back of his laptop lid with Velcro so it can easily be removed or attached. This allows him to run a single USB cable to the dongle and have it oriented properly for maximum antenna effectiveness without something cumbersome like a dedicated antenna stand. [Rostislav] even modeled the entire assembly so that he could run a stress analysis on it, and from that data ended up filling it with epoxy to ensure maximum lifespan with minimal wear on the components.

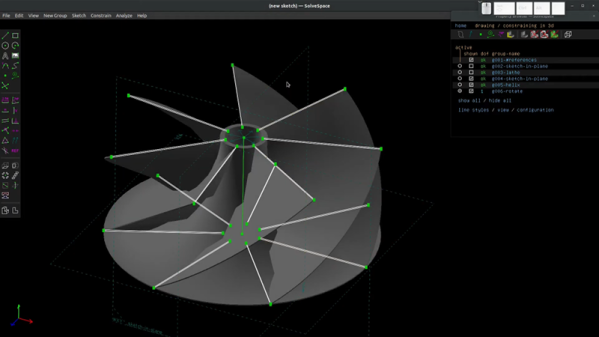

We definitely appreciate the simple and clean build, which allows easy access to HF and higher frequencies while mobile, especially since the 3D modeling takes it a step beyond simply 3D printing an accessory and hoping for the best. There’s even an improved version on his site. To go even one step further, though, we’ve seen the antennas themselves get designed and then 3D printed directly.

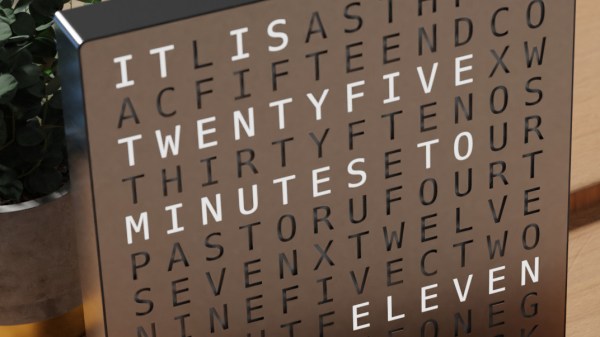
