Many sci-fi robots have taken the form of their creators. In the increasingly blurry space between the biological and the mechanical, researchers have found a way to affix human skin to robot faces. [via NewScientist]

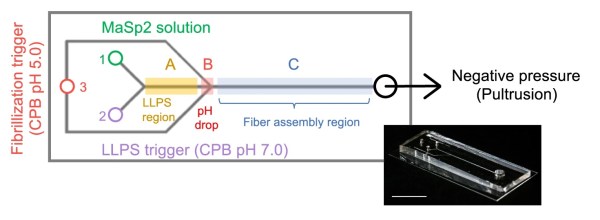

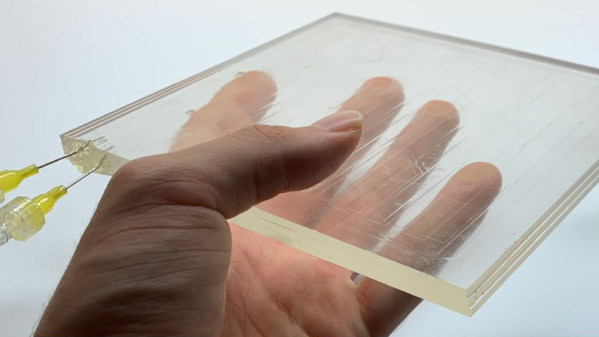

Previous attempts at affixing skin equivalent, “a living skin model composed of cells and extracellular matrix,” to robots worked, even on moving parts like fingers, but they typically relied on protrusions that limited range of motion and hurt aesthetics, concerns that are pretty high on the list for robots designed predominantly to interact with humans. Inspired by skin ligaments, the researchers have developed “perforation-type anchors” that use V-shaped holes in the underlying 3D-printed surface to keep the skin equivalent taut and pliable like the real thing.

The researchers then designed a face that took advantage of the attachment method to give their robot a convincing smile. Combined with other research, this work could soon give robots skin with touch, sweat, and self-repair capabilities, much like Data’s partial transformation in Star Trek: First Contact.

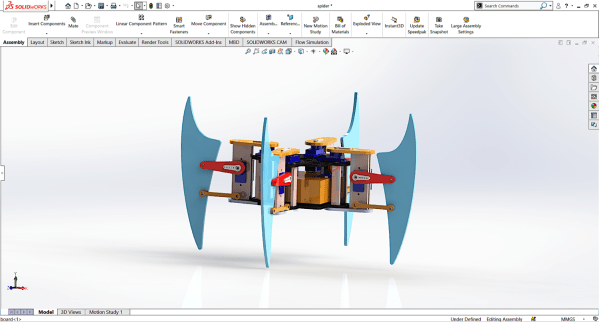

We wonder what this extremely realistic humanoid hand might look like with this skin on the outside. Of course, that raises the question of whether we even need humanoid robots. If you want something less uncanny, maybe try animating your stuffed animals with this robotic skin instead?