There was a time not too long ago when “LOL” actually meant something online. If someone went through the trouble of putting LOL into an email or text, you could be sure they were actually LOL-ing while they were typing — it was part of the social compact that made the Internet such a wholesome and inviting place. But no more — LOL has been reduced to a mere punctuation mark, with no guarantee that the sender was actually laughing, chuckling, chortling, or even snickering. What have we become?

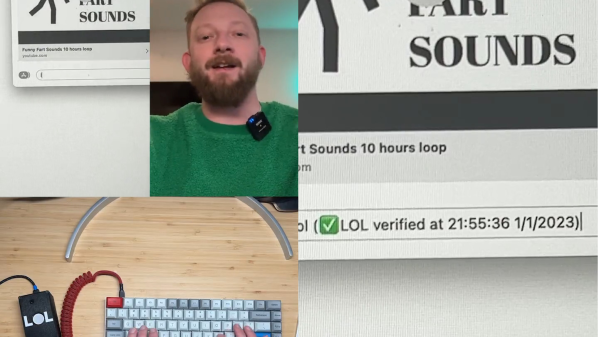

To put an end to this madness, [Brian Moore] has come up with the LOL verifier. Like darn near every project we see these days, it uses machine learning, with a model built on Edge Impulse in this case. It detects a laugh by comparing audio input against an exhaustive model of [Brian]’s jocular outbursts; he says it took nearly three full minutes to collect the training set. A Teensy 4.1 takes care of HID duties; if a typed “LOL” correlates to some variety of laugh, the initialism is verified with a time and date stamp. If your LOL was judged insincere, well, that’s on you. See what you think of the short video below; we genuinely LOL’d. And while we’re looking forward to a ROTFL verifier, we’re not sure we want to see his take on LMAO.
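For the curious, the gating logic described above might look something like the sketch below. To be clear, this is not [Brian]'s firmware: it's plain Python instead of Teensy code, and the `LolVerifier` class, the 0.8 confidence threshold, the three-second window, and the "unverified" result are all our own assumptions for illustration. The idea is simply to remember when the classifier last heard a laugh, watch the keystrokes for "LOL", and stamp it only if the two line up.

```python
from datetime import datetime, timezone

# Both values are assumptions for illustration, not taken from the project.
LAUGH_THRESHOLD = 0.8   # classifier confidence needed to count as a laugh
WINDOW_SECONDS = 3.0    # how recent the laugh must be when "LOL" is typed


class LolVerifier:
    """Hypothetical sketch: gate a typed "LOL" on a recently detected laugh."""

    def __init__(self, threshold=LAUGH_THRESHOLD, window=WINDOW_SECONDS):
        self.threshold = threshold
        self.window = window
        self.last_laugh_time = None  # time of the most recent confident laugh
        self.typed = ""              # rolling buffer of the last three keys

    def on_audio_score(self, score, now):
        """Record a laugh whenever the classifier score clears the threshold."""
        if score >= self.threshold:
            self.last_laugh_time = now

    def on_key(self, char, now):
        """Feed one keystroke; return a verdict once "LOL" has been typed."""
        self.typed = (self.typed + char)[-3:]
        if self.typed.upper() != "LOL":
            return None  # nothing to verify yet
        self.typed = ""
        laughed = (
            self.last_laugh_time is not None
            and 0 <= now - self.last_laugh_time <= self.window
        )
        if not laughed:
            return "unverified"
        stamp = datetime.fromtimestamp(now, tz=timezone.utc)
        return "verified at " + stamp.strftime("%Y-%m-%d %H:%M:%S")


verifier = LolVerifier()
verifier.on_audio_score(0.95, now=100.0)   # a hearty chortle
for offset, key in enumerate("LOL"):
    verdict = verifier.on_key(key, now=100.5 + offset * 0.1)
print(verdict)
```

Note that this version only credits a laugh that comes before the typing; a real build would presumably also want to handle the laugh that arrives a beat after you hit the last "L".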

Hats off to [Brian] for his attempt to enforce some kind of standards online. You may recall his earlier attempt to make leaving Zoom calls a little less awkward, which we also appreciate.
