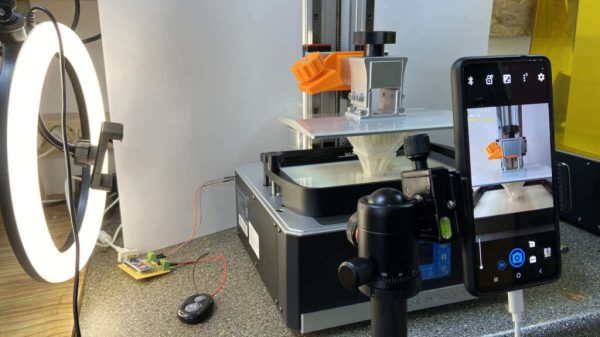

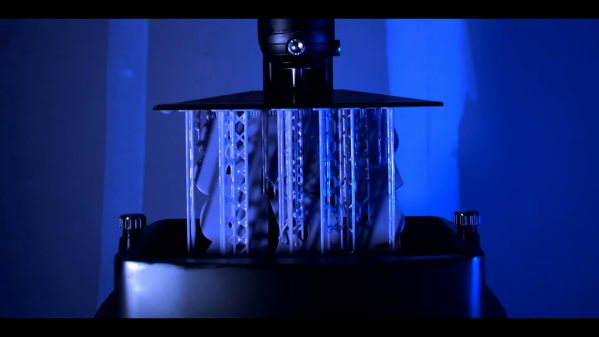

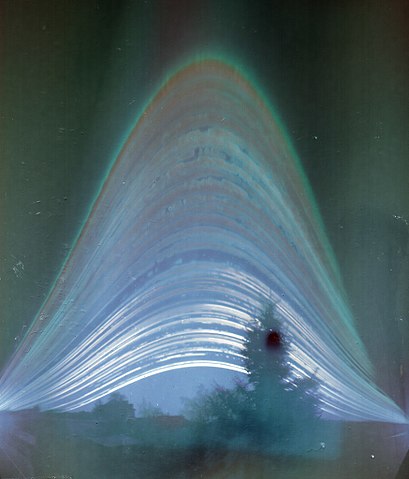

We know you’ve seen them: the time-lapses that show a 3D print coming together layer-by-layer without the extruder taking up half the frame. It takes a little extra work compared to just pointing a camera at the build plate, but it’s worth it to see your prints materialize like magic.

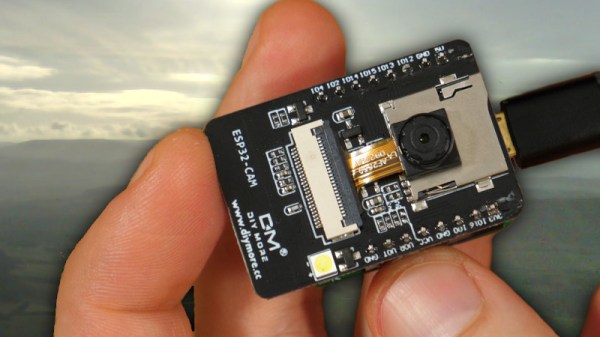

Usually these are done with a plugin for OctoPrint, but with all due respect to that phenomenal project, it’s a lot to get set up if you just want to take some pretty pictures. Which is why [Whopper Printing] put together the LayerLapse. This small PCB is designed to trigger your DSLR or mirrorless camera once its remotely-mounted Hall effect sensor detects the presence of a magnet.
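To get a feel for the trigger logic, here is a rough Python simulation of the "fire once when the magnet arrives" behavior. This is not the LayerLapse firmware; the function name `shutter_events`, the boolean sample format, and the `holdoff` parameter are all made up for illustration.

```python
def shutter_events(samples, holdoff=3):
    """Return the sample indexes at which a shutter pulse would be sent.

    samples: iterable of booleans, True meaning the sensor sees the magnet.
    holdoff: minimum number of samples between two pulses (hypothetical
             parameter, used here to ignore a jittery sensor reading).
    """
    events = []
    previous = False   # sensor state on the previous sample
    last_fire = None   # index of the most recent pulse, if any

    for i, present in enumerate(samples):
        rising = present and not previous  # magnet just arrived
        if rising and (last_fire is None or i - last_fire >= holdoff):
            events.append(i)
            last_fire = i
        previous = present

    return events


# The magnet arrives at index 1, the reading flickers at index 3,
# and the magnet arrives again for the next layer at index 8.
readings = [False, True, False, True, True, False, False, False, True]
fired_at = shutter_events(readings)
```

Firing on the arrival of the magnet rather than on its presence means the camera takes one frame per visit, no matter how long the extruder stays parked.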

The idea is that you just need to stick a small magnet to your extruder, add a bit of extra G-code that will park it over the sensor at the end of each layer, and you’re good to go. There’s even a spare GPIO pin broken out should you want to trigger something else on each layer of your print. Admittedly we can’t think of anything else right now that would make sense, other than some other type of camera, but we’re sure some creative folks out there could put this feature to use.
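If your slicer doesn't make it easy to add custom layer-change G-code, the same effect could be sketched as a small post-processing script. The version below assumes absolute positioning and relies on the layer comments that PrusaSlicer (`;LAYER_CHANGE`) and Cura (`;LAYER:n`) write into their output. The park coordinates, dwell time, and feedrate are placeholders, not values from the LayerLapse documentation, and the function name `add_park_moves` is our own.

```python
# Hypothetical values: set these to wherever the sensor sits on your printer.
PARK_X, PARK_Y = 0.0, 200.0
DWELL_MS = 500          # how long to hold still for the camera
FEEDRATE = 9000         # travel speed in mm/min

# Comment prefixes that mark a new layer in PrusaSlicer and Cura output.
LAYER_MARKERS = (";LAYER_CHANGE", ";LAYER:")


def add_park_moves(gcode_lines, park_x=PARK_X, park_y=PARK_Y,
                   dwell_ms=DWELL_MS, feedrate=FEEDRATE):
    """Return a copy of the G-code with a park-and-wait after each layer marker."""
    out = []
    for line in gcode_lines:
        out.append(line)
        if line.strip().startswith(LAYER_MARKERS):
            # Move the magnet over the sensor, then pause so the shot is sharp.
            out.append(f"G1 X{park_x:.1f} Y{park_y:.1f} F{feedrate} ; park magnet over sensor")
            out.append(f"G4 P{dwell_ms} ; wait for the camera to fire")
    return out


sample = ["G28", ";LAYER_CHANGE", "G1 X10 Y10 E1", ";LAYER:1", "G1 X20 Y20 E2"]
patched = add_park_moves(sample)
```

This bare-bones version doesn't retract before the travel move, so expect some stringing or oozing unless you add that yourself. It also parks at the very first layer marker, which gives you a frame of the empty bed.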

Currently, [Whopper Printing] is selling the LayerLapse as a finished product, though it does sound like a kit version is in the works. There are also instructions for building a DIY version of the hardware using your microcontroller of choice. Whether you buy or build the hardware, the firmware is available under the MIT license for your tinkering pleasure.

Being hardware hackers, we appreciate the stand-alone nature of this solution. But if you’re already controlling your printer through OctoPrint, you’re probably better off just setting up one of the available time-lapse plugins.