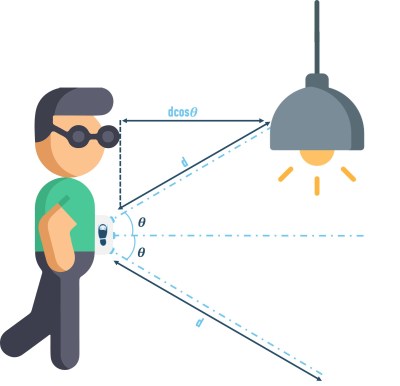

Conventional speakers create sound by physically moving air, but tiny speakers that use ultrasonic frequencies to create pressure and generate sound open some new doors, especially when it comes to maximum achievable volume.

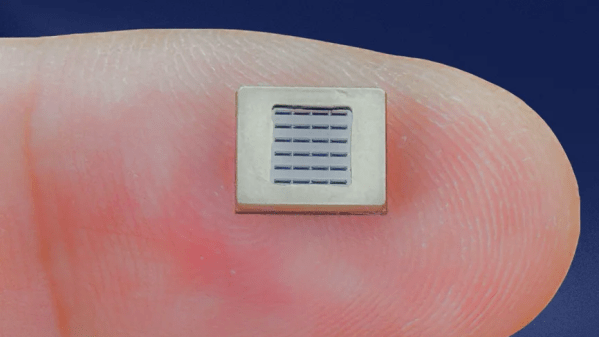

A new design claims to be the first 140 dB full-range MEMS speaker. But that kind of volume potential has less to do with delivering music at ear-splitting levels and more to do with performing truly effective noise cancellation, even in a device as small as an earbud. Cancelling out the jackhammers of the world requires parts that can really deliver a punch, especially at low frequencies, and that’s not easy to do in a tiny form factor. The new device is the Cypress, from MEMS speaker manufacturer xMEMS, and samples are slated to ship in June 2024.
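For a sense of scale, here is the arithmetic behind a 140 dB figure. This assumes it is a sound pressure level referenced to the standard 20 µPa used for sound in air. The announcement doesn’t spell out the measurement conditions (for example, whether it was measured in an ear simulator), so treat this as a rough illustration only:

$$
L_p = 20\log_{10}\frac{p}{p_0}, \qquad p_0 = 20\,\mu\text{Pa}
$$

$$
p = p_0 \cdot 10^{L_p/20} = 20\times10^{-6}\,\text{Pa} \times 10^{140/20} = 20\times10^{-6}\,\text{Pa} \times 10^{7} = 200\,\text{Pa}
$$

Because every 20 dB is a tenfold step in pressure amplitude, 140 dB corresponds to ten times the pressure of 120 dB.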

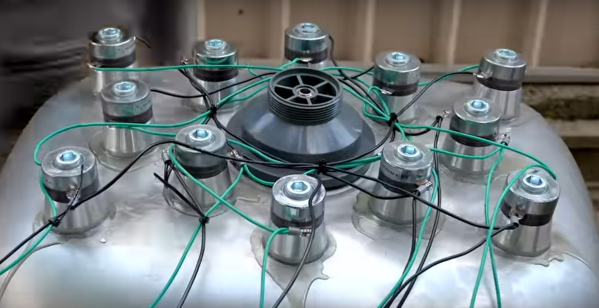

Combining ultrasonic waves to create audible sound is something we’ve seen show up in different ways, like using an array of transducers to focus sound like a laser beam. Another thing ultrasonics can do is cause sensors in complex electronics to become unhinged from reality and report false readings. Neato!
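As a toy illustration of how two inaudible ultrasonic tones can yield an audible one, the sketch below passes a 40 kHz tone and a 41 kHz tone through a simple square-law nonlinearity and measures what appears at their 1 kHz difference frequency. This demonstrates the general heterodyning principle behind parametric-array style setups. It is not a model of how the Cypress or any xMEMS part works. The sample rate, tone frequencies, and the `square_law` coefficient are all arbitrary, hypothetical choices:

```python
import cmath
import math

FS = 200_000             # sample rate in Hz (assumed; comfortably above all tones)
N = 2_000                # 10 ms of samples, giving 100 Hz frequency resolution
F1, F2 = 40_000, 41_000  # two ultrasonic tones (illustrative values)


def tone_amplitude(signal, freq, fs=FS):
    """Amplitude of the component at `freq`, using a single-bin DFT."""
    n_samples = len(signal)
    acc = sum(
        s * cmath.exp(-2j * math.pi * freq * n / fs)
        for n, s in enumerate(signal)
    )
    return 2 * abs(acc) / n_samples


def two_tones():
    """Sum of two unit-amplitude sine waves at F1 and F2."""
    return [
        math.sin(2 * math.pi * F1 * n / FS) + math.sin(2 * math.pi * F2 * n / FS)
        for n in range(N)
    ]


def square_law(signal, a=0.5):
    """Hypothetical stand-in for a physical nonlinearity: y = x + a*x^2."""
    return [x + a * x * x for x in signal]


x = two_tones()
y = square_law(x)
diff = F2 - F1  # 1 kHz, well inside the audible band

print(f"1 kHz component, linear path:    {tone_amplitude(x, diff):.3f}")
print(f"1 kHz component, nonlinear path: {tone_amplitude(y, diff):.3f}")
```

The purely linear sum contains nothing at 1 kHz. The squared term, however, expands to include `cos(2π(F2 - F1)t)`, so the nonlinear path shows a 1 kHz component with amplitude `a` (0.5 here). The frequencies were chosen as exact multiples of the 100 Hz bin spacing so that the other mixing products (DC, 80, 81, and 82 kHz) don’t leak into the measurement.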