If you’re the happy owner of a vintage Apple system like a 1989 Macintosh IIci you may know the pain of keeping working monitors around. Unless it’s a genuine Apple-approved CRT with the proprietary DA-15-based video connector, you are going to need at least an adapter studded with DIP switches to connect it to other monitors. Yet as [Steve] recently found out, the Macintosh’s rather selective use of video synchronization signals causes quite a headache when you try to hook up a range of VGA-equipped LCD monitors. A possible solution? Extracting the sync signal using a Texas Instruments LM1881 video sync separator chip.

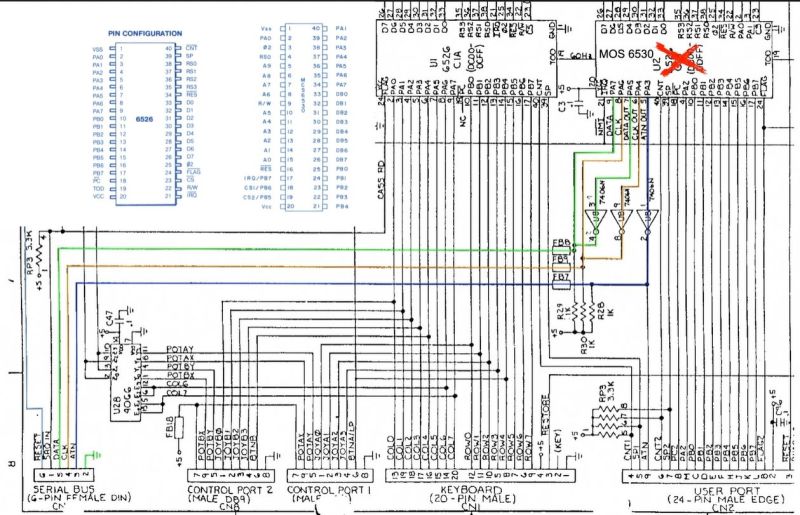

Much of this trouble comes from the way that these old Apple systems output the analog video signal, which goes far beyond the physical differences of the DA-15 versus the standard DE-15 D-subminiature connectors. Whereas the VGA standard defines the RGB signals along with a VSYNC and HSYNC signal, the Apple version can generate HSYNC, VSYC, but also CSYNC (composite sync). Which sync signal is generated depends on what value the system reads on the three sense pins on the DA-15 connector, as a kind of crude monitor ID.

Theoretically this should be easy to adapt to, you might think, but the curveball Apple throws here is that for the monitor ID that outputs both VSYNC and HSYNC you are limited to a fixed resolution of 640 x 870, which is not the desired 640 x 480. The obvious solution is then to target the one monitor configuration with this output resolution, and extract the CSYNC (and sync-on-green) signal which it outputs, so that it can be fudged into a more VGA-like sync signal. Incidentally, it seems that [Steve]’s older Dell 2001FP LCD monitor does support sync-on-green and CSYNC, whereas newer LCD monitors no longer list this as a feature, which is why now more than a passive adapter is needed.

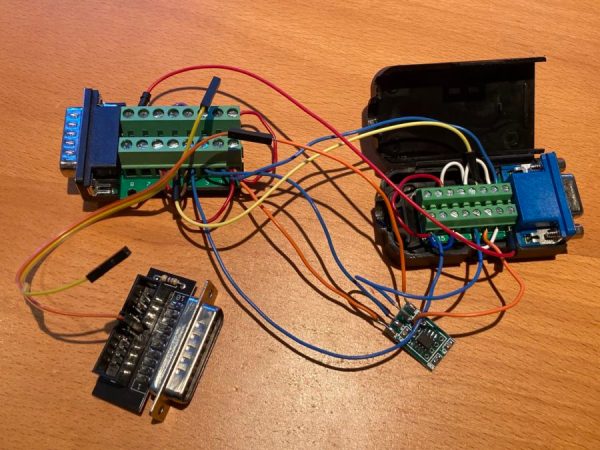

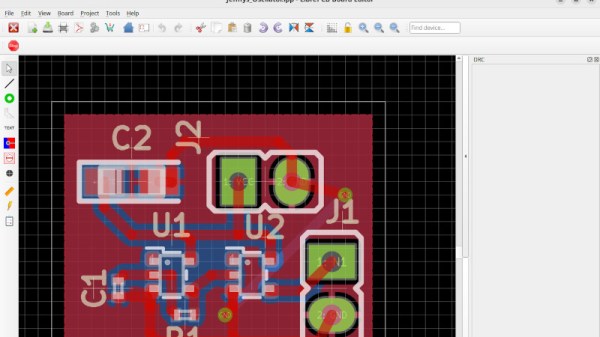

Although still a work-in-progress, so far [Steve] has managed to get an image on a number of these newer LCDs by using the LM1881 to extract CSYNC and obtain a VSYNC signal this way, while using the CSYNC as a sloppy HSYNC alternative. Other ICs also can generate an HSYNC signal from CSYNC, but those cost a bit more than the ~USD$3 LM1881.