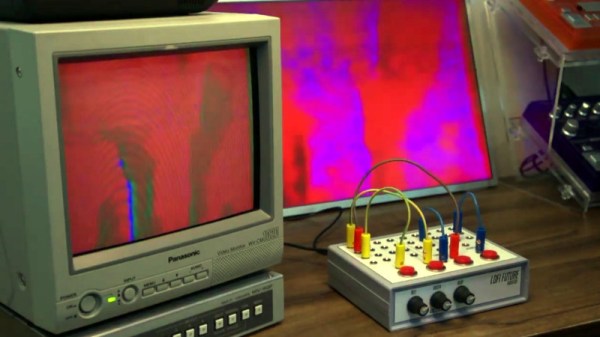

We’ve covered plenty of clocks powered by the ESP32, but this one from [Marcio Teixeira] is really something special. Rather than driving a traditional physical display, the microcontroller generates a composite video signal of an animated digital clock. The output can be fed into any display with a composite input, but given the ’80s synthwave style it’s pumping out, you’ll probably want to find a suitably retro CRT to do it justice.

Specifically, this is a variant of the “Dali” clock, where each digit seems to melt and morph into its successor. Although his version doesn’t necessarily share code with all the previous iterations, [Marcio] does credit the developers who have pulled off similar visual tricks going all the way back to 1979. Given the vintage of this particular animation, the neon skyline and infinite scrolling grid certainly feel like a perfect fit.
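For the curious, here’s a rough idea of how such a morph can work. This is a minimal Python sketch under our own assumptions, not [Marcio]’s code: each digit is stored as a single filled span per scanline (real glyphs usually need several), and every intermediate frame linearly interpolates the span edges between the old digit and the new one. The glyph format, the sample data, and the function names are all hypothetical.

```python
import math
from typing import List, Tuple

Span = Tuple[int, int]   # (left, right) columns of the filled run on one scanline
Glyph = List[Span]       # hypothetical format: exactly one span per scanline


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation: returns a at t=0 and b at t=1."""
    return a + (b - a) * t


def morph(src: Glyph, dst: Glyph, t: float) -> Glyph:
    """Blend two glyphs scanline by scanline; t=0 gives src, t=1 gives dst."""
    if len(src) != len(dst):
        raise ValueError("glyphs must have the same number of scanlines")
    t = min(max(t, 0.0), 1.0)  # clamp so out-of-range frames don't overshoot
    return [
        (math.floor(lerp(l0, l1, t) + 0.5), math.floor(lerp(r0, r1, t) + 0.5))
        for (l0, r0), (l1, r1) in zip(src, dst)
    ]


def render(glyph: Glyph, width: int) -> str:
    """Draw a glyph as ASCII art: '#' inside a span, '.' elsewhere."""
    return "\n".join(
        "".join("#" if left <= x <= right else "." for x in range(width))
        for left, right in glyph
    )


# Hypothetical 4-scanline glyphs: a narrow bar melting into a wide block.
narrow = [(3, 4)] * 4
wide = [(0, 7)] * 4
for step in range(3):
    print(render(morph(narrow, wide, step / 2), 8))
    print()
```

Stepping `t` from 0 to 1 over a run of frames produces the melting transition. A real implementation also has to handle scanlines where the two digits have different numbers of spans, which this sketch sidesteps entirely.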

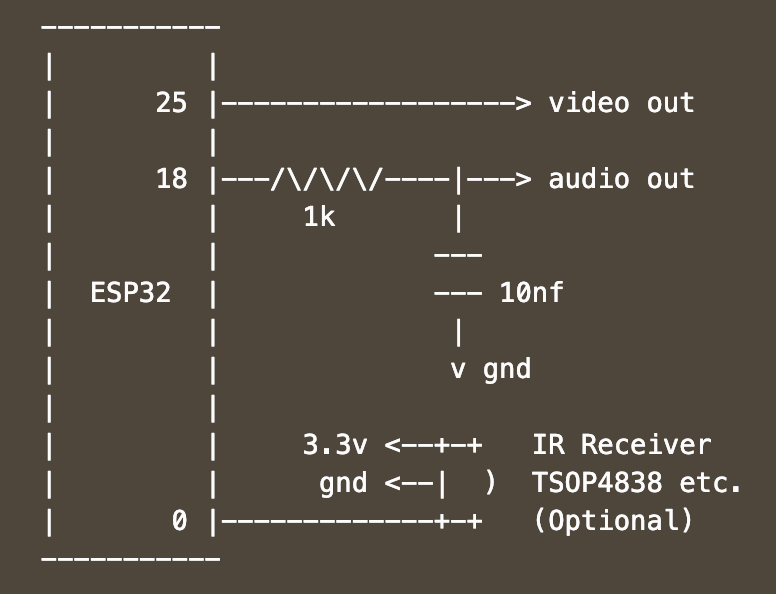

Want to add a little vaporwave vibe to your own workbench? Assuming you’ve already got an ’80s-style CRT, all you need is an ESP32 and two wires stuck into the composite video port. One goes to ground, and the other goes to the chip’s analog output pin. Once everything is powered up, you’ll be able to configure the clock through a web-based interface. It doesn’t get much easier than that.
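Why does a bare wire work? A composite signal is just a voltage that swings between a sync level and a white level, nominally about 0 V for the sync tip, 0.3 V for black, and 1 V for white. The original ESP32 has two 8-bit DAC channels (on GPIO25 and GPIO26) that can produce levels in that range directly. The Python sketch below converts those target voltages into DAC codes. It assumes an ideal DAC that reaches 3.3 V at code 255 and ignores the loading of the TV’s 75 Ω input, so treat the numbers as an illustration rather than the values this project actually uses.

```python
FULL_SCALE_V = 3.3  # assumption: ideal, unloaded DAC output at the top code
DAC_MAX = 255       # the ESP32's DAC channels are 8-bit (codes 0..255)

# Approximate composite levels above the sync tip (nominal, across a 75-ohm load).
LEVELS_V = {"sync": 0.0, "black": 0.3, "white": 1.0}


def volts_to_code(volts: float) -> int:
    """Nearest 8-bit DAC code for a target voltage, clamped to the valid range."""
    code = int(volts / FULL_SCALE_V * DAC_MAX + 0.5)
    return max(0, min(DAC_MAX, code))


def gray_to_code(brightness: float) -> int:
    """Map a pixel brightness in 0.0..1.0 onto the black-to-white voltage span."""
    black, white = LEVELS_V["black"], LEVELS_V["white"]
    return volts_to_code(black + brightness * (white - black))


for name, volts in LEVELS_V.items():
    print(name, volts_to_code(volts))  # sync 0, black 23, white 77
print("50% gray", gray_to_code(0.5))   # 50
```

Under these assumptions the picture only uses codes 0 through 77, leaving about 55 distinct gray levels between black and white.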

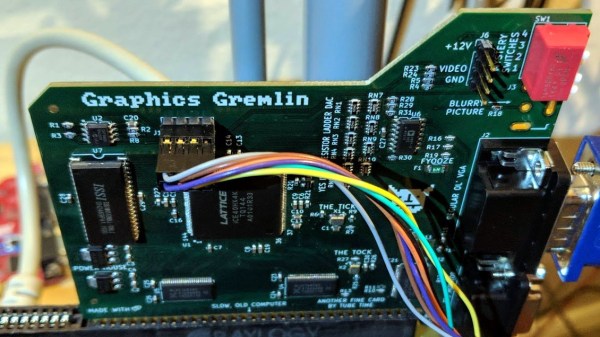

In the documentation, [Marcio] calls out a few open source projects which were instrumental to getting his clock off the ground. The pioneering work [bitluni] did to get video out of the ESP32 is something of a given, but he also sends a hat tip to [rossumur] for his collection of 8-bit game console emulators written for the microcontroller. Projects like this are a fantastic example of what’s possible when a community works together to truly push the envelope.

Continue reading “ESP32 Clock Pushes Outrun Graphics Over Composite”