The C-17 Globemaster III is a military cargo jet that can carry what its commercial counterparts can’t, to places those other planes can’t go. The people who keep these planes flying are proud of their capable airlifter, but it’s hard to show one off. Solution: build a scaled-down version more suitable for driving off base for a parade down Main Street and other community events.

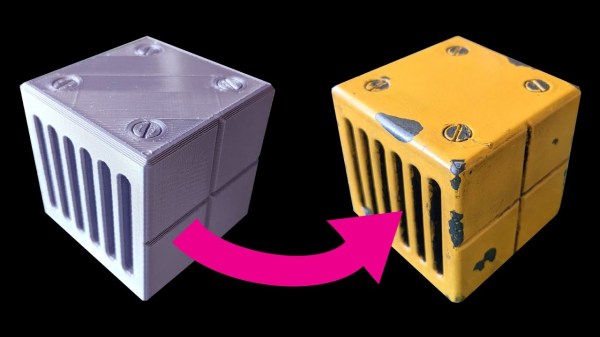

While the real thing was built under an expensive and contentious military procurement process, the miniature was built with volunteer labor using castoff materials. The volunteer force included maintenance crew whose job is to know the C-17 inside and out. Combining that knowledge with the fabrication skills that come with the job, they built an impressive baby plane that faithfully copies many curvatures and details from full-sized originals, albeit with some alteration for its cartoony proportions. Underneath are mechanicals from a retired John Deere Gator utility vehicle. A Gator usually resembles a large golf cart, except with a cargo bed and more rugged suspension. Basically, Gators are to golf carts as a C-17 is to a 767. Amusingly, the little plane has its own rear loading ramp, superficially preserving the cargo-carrying capacity of the original Gator chassis.

The interior is detailed too, though the official picture gallery doesn’t show it. There is a flight deck with control panels and various sights and sounds to keep visitors entertained. Enough detail was poured into the exhibit that some people had to ask if the little plane can fly, and the answer is a very definite no. The wings, and the engine pods mounted to them, are only for show. The miniature carries the name The Spirit of Hope, Liberty & Freedom, quite a long official name for such a short, stubby thing.

We always love to admire impressively put-together miniatures, and not all such projects require the skills of an aircraft mechanic: take this very approachable miniature forklift project. But there are plenty of other projects whose builders’ skills leave us in awe, like this remote-control car powered by a miniature V-10 engine.

[via The Museum of Flight]