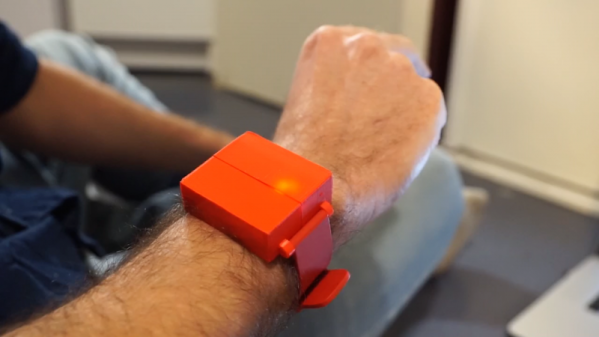

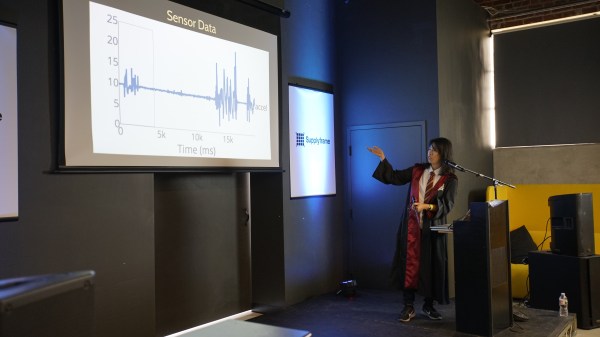

Jennifer Wang likes to dress up for cosplay, and she’s a Harry Potter fan. Her wizarding skills are technological rather than magical, but to the casual observer she’s managed to blur those lines. Drawing on her experience with a range of sensors, she decided to bring it all together in a magic wand. The wand contains an inertial measurement unit (IMU) so it can detect gestures. Instead of hardcoding everything, [Jennifer] used machine learning, and she presented her results at the Hackaday Superconference. Didn’t make it to Supercon? No worries, you can watch her talk on building IMU-based gesture recognition below, and grab the code from GitHub.
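To give a feel for what learning gestures from IMU data can look like, here is a minimal sketch in Python. It is not [Jennifer]'s code or her model: the nearest-centroid classifier, the per-axis mean and standard deviation features, the gesture names, and the `synthetic_window` helper are all assumptions made for illustration, and the data is generated rather than recorded from a real wand.

```python
import math
from statistics import mean, pstdev


def features(window):
    """Turn a window of (ax, ay, az) samples into 6 numbers:
    the per-axis mean followed by the per-axis standard deviation."""
    axes = list(zip(*window))
    return [mean(a) for a in axes] + [pstdev(a) for a in axes]


def train(examples):
    """examples maps a gesture label to a list of windows.
    Returns a dict mapping each label to its average feature vector."""
    centroids = {}
    for label, windows in examples.items():
        feats = [features(w) for w in windows]
        centroids[label] = [mean(col) for col in zip(*feats)]
    return centroids


def classify(centroids, window):
    """Return the label whose centroid is closest to this window's features."""
    f = features(window)
    return min(centroids, key=lambda label: math.dist(f, centroids[label]))


def synthetic_window(kind, phase=0.0, n=20):
    """Hypothetical stand-in for recorded accelerometer data (units of g)."""
    if kind == "flick":
        # Side-to-side swing on the x axis, gravity on z.
        return [(2.0 * math.sin(phase + 0.5 * i), 0.0, 1.0) for i in range(n)]
    # "still": the wand is held motionless.
    return [(0.0, 0.0, 1.0) for _ in range(n)]


training_data = {
    "flick": [synthetic_window("flick", p) for p in (0.0, 1.0, 2.0)],
    "still": [synthetic_window("still")],
}
model = train(training_data)
guess = classify(model, synthetic_window("flick", phase=0.3))
```

The point of the sketch is the workflow rather than the model: record labeled windows of sensor data, reduce each window to features, and let the training data decide where the boundaries between gestures fall instead of hand-tuning thresholds.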

Naturally, we enjoyed seeing the technology parts of her project, and this is a great primer on applying machine learning to sensor data. But what we found really insightful was the discussion of the entire design lifecycle. Questions that scope the design space, such as how much money you can spend, who will use the device, and where it will be used, are often ones we answer subconsciously without making them explicit. Failing to answer them at all increases the risk that your project will fail or, at least, not be as successful as it could have been.


Fifteen sensor boards, called K-Ceptors, are attached to various points on the body, each containing an LSM9DS1 IMU (Inertial Measurement Unit). The K-Ceptors are wired together while still allowing plenty of freedom to move around. Communication is via I2C to a Raspberry Pi. The Pi then sends the collected data over WiFi to a desktop machine. As you move around, a 3D model of a human figure follows in real time, displayed on the desktop’s screen using Blender, a popular, free 3D modeling package. Of course, you can do something else with the data if you want; perhaps make a robot move? Check out the overview and the performance by a clearly experienced dancer putting the system through its paces in the video below.
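As a rough illustration of the Pi-to-desktop hop, the sketch below packs one frame of readings from all fifteen sensors into a fixed-size binary message and unpacks it on the other side. The project's actual wire format and transport are not described here, so everything in it is an assumption: the use of UDP, the sequence number, the nine values per sensor (accelerometer, gyroscope, and magnetometer, three axes each), and the names `pack_frame`, `unpack_frame`, and `send_frame`.

```python
import socket
import struct

NUM_SENSORS = 15        # fifteen K-Ceptors, per the article
VALUES_PER_SENSOR = 9   # assumed: accel xyz, gyro xyz, mag xyz

# Hypothetical layout: little-endian uint32 sequence number,
# followed by 15 * 9 = 135 float32 values.
FRAME = struct.Struct("<I" + "f" * (NUM_SENSORS * VALUES_PER_SENSOR))


def pack_frame(seq, readings):
    """readings is a list of 15 sequences, each holding 9 floats."""
    if len(readings) != NUM_SENSORS:
        raise ValueError("expected one reading per sensor")
    if any(len(r) != VALUES_PER_SENSOR for r in readings):
        raise ValueError("expected nine values per sensor")
    flat = [value for reading in readings for value in reading]
    return FRAME.pack(seq, *flat)


def unpack_frame(payload):
    """Inverse of pack_frame: returns (seq, list of 15 nine-value tuples)."""
    seq, *flat = FRAME.unpack(payload)
    readings = [
        tuple(flat[i:i + VALUES_PER_SENSOR])
        for i in range(0, len(flat), VALUES_PER_SENSOR)
    ]
    return seq, readings


def send_frame(sock, addr, seq, readings):
    """Send one frame as a single UDP datagram (assumed transport)."""
    sock.sendto(pack_frame(seq, readings), addr)


# Example use on the sending side (address and port are placeholders):
# sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
# send_frame(sock, ("192.168.1.50", 9000), 0, readings)
```

A fixed-size frame keeps parsing on the desktop trivial, and a sequence number lets the receiver notice dropped or reordered datagrams, which matters when the goal is a figure that follows the dancer in real time rather than a complete log of every sample.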
