[Mike Harrison] is known for incredibly tiny soldering. Now he’s claiming a “world’s smallest” in the form of a stand-alone LED blinker, and we think he’s got the record.

He brought it along to Friday’s Beagleboard Bring-a-Hack, and we got a close look at the diminutive assembly. The project was dreamed up when [Mike] saw an announcement from Seiko about a new supercapacitor in a tiny package (likely the CPH3225A, which gives the blinky a footprint of 3.2 x 2.5 mm). With that in hand he added a PIC10F322 microcontroller in a SOT-23 package, an 0603 smoothing capacitor, and an SMD LED.

With such a tiny package, the trickiest part is figuring out how to charge that supercap. [Mike] used a drill and hand files to make a square hole in a CR2032 battery holder to serve as a jig. The bottom of the supercap rests against the battery while a pogo pin makes the second connection to a terminal on the side of his assembly. It charges quickly and will happily blink away for about six minutes after charging.
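For a rough feel of what a six-minute run time implies, here is a back-of-the-envelope sketch of the average current draw. Only the six minutes comes from [Mike]'s build. The capacitance and the voltage window are our assumptions: we plug in 11 mF as a plausible value for a supercap of this size, a starting voltage of 3.0 V (the nominal voltage of a CR2032), and a guessed cutoff of 2.0 V where the blinking stops. It treats the supercap as an ideal capacitor discharged at a constant current, which ignores leakage and the pulsed nature of the load.

```python
def average_current_a(capacitance_f, v_start, v_stop, runtime_s):
    """Average current (amps) that drains an ideal capacitor from
    v_start to v_stop in runtime_s seconds: I = C * dV / t."""
    if runtime_s <= 0:
        raise ValueError("runtime_s must be positive")
    if v_stop > v_start:
        raise ValueError("v_stop must not exceed v_start")
    return capacitance_f * (v_start - v_stop) / runtime_s


if __name__ == "__main__":
    # Assumed values, not measurements from the build:
    capacitance_f = 0.011   # 11 mF, assumed supercap capacitance
    v_start = 3.0           # nominal CR2032 voltage
    v_stop = 2.0            # guessed voltage at which blinking stops
    runtime_s = 6 * 60      # "about six minutes" from the write-up

    amps = average_current_a(capacitance_f, v_start, v_stop, runtime_s)
    print(f"Estimated average draw: {amps * 1e6:.1f} uA")
```

Swap in datasheet or measured values for the three assumed numbers to tighten the estimate. The result scales linearly with both the capacitance and the width of the voltage window.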

[Mike] set out to make two of these, but dropped the second supercap at his workbench, where it was forever lost in the detritus common to every electronics workshop. When he first pulled the blinker out at the meetup we were on a rooftop terrace, and we were more than a bit concerned that it would just blow away. How do you begin to fabricate such a tiny assembly? He used UV-cured epoxy to glue the parts together first, then somehow completed the soldering by hand!

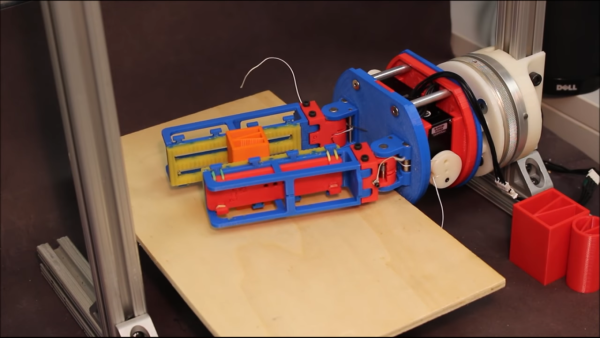

When first assembled the playfield is blank. That didn’t stop the fun for this set of kits stacked back to back for player vs. player action. There’s a hole at the top of playfields which makes this feel a bit like playing Pong in real life. However, where the kit really shines is in customizing your own game. In effect you’re setting up the most creative marble run you can imagine. This task was well demonstrated with cardboard, molded plastic packaging (which is normally landfill) cleverly placed, plus some noisemakers and lighting effects. The company has been working to gather up inspiration and examples for building out the machines. We love the multiple layers of engagement rolled into Pinbox, from building the stock kit, to fleshing out a playfield, and even to adding your own electronics for things like audio effects.
