The story of researchers successfully making yeast-free pizza dough has been making the rounds. As usual with stories written from a scientific angle, it’s worth digging into the details, so we took a look at the actual research paper and found a few curious bits worth sharing. It turns out that this isn’t the first method for yeast-free baking to be developed, but it is the first to combine leavening and baking for a result on par with traditional bread-making processes.

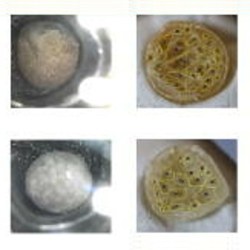

Basically, a dough consisting of water, flour, and salt goes into a hot autoclave (the header image shows a piece of dough as seen through the viewing window). The autoclave pressurizes, forcing gases into the dough in a process similar to carbonating beverages. Pressure is then released in a controlled fashion while the dough bakes and solidifies, and careful tuning of this process is what controls how the bread turns out.

With the right heat and pressure curve, researchers created a pizza whose crust was not only pleasing and tasty, but also comparable in quality to crusts made with traditional methods.

How this idea came about is interesting in itself. One of the researchers developed a new method for thermosetting polyurethane, and realized that bread and polyurethane have something in common: they both require a foaming (proofing in the case of bread) and curing (baking in the case of bread) process. Performing the two processes concurrently with the correct balance yields the best product: optimized thermal insulation in the case of polyurethane, and a tasty and texturally pleasing result in the case of pizza dough. After that, it was just a matter of experimentation to find the right balance.

The pressures (up to 6 bar) and temperatures (145 °C) involved are pretty mild, relatively speaking, which could bode well for home-based pizza experimenters.